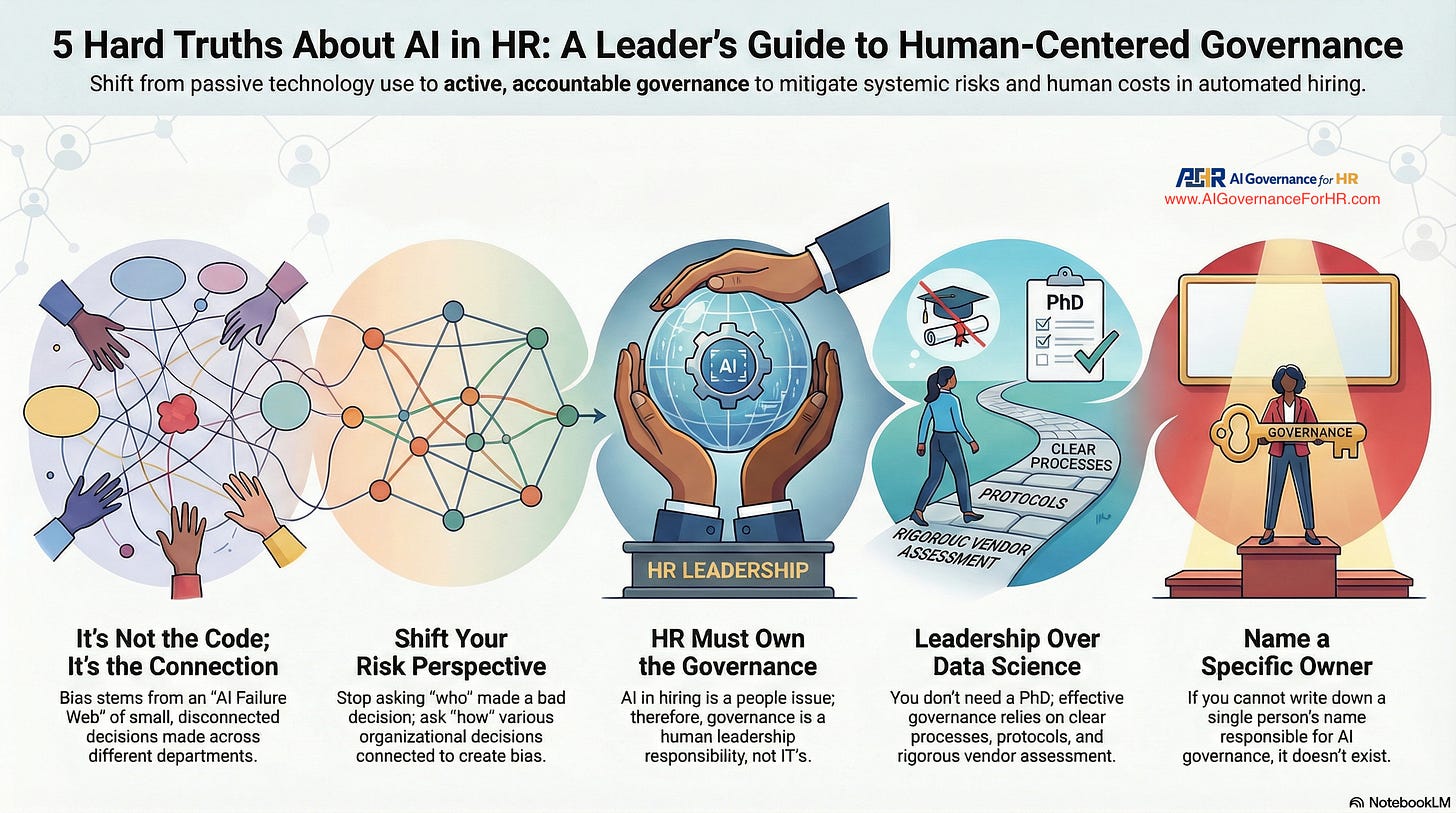

5 Hard Truths About AI in HR That Every Leader Needs to Know

The Human Cost of Automation

If you’re a senior HR leader, you’ve likely seen the posts. Talented, qualified professionals share their heartbreaking experiences on LinkedIn, writing things like “I’ve applied to 400 jobs. I haven’t had a single human reach out,” or “Every rejection feels like a punch I never saw coming.”

For years, we’ve told job seekers to add keywords and match job descriptions, but we never prepared them for the emotional toll of being rejected by a machine, over and over, without ever speaking to a person. These weren’t isolated incidents; they were clues to a systemic problem.

The spark that finally exposed this reality was the Mobley v. Workday case. A job seeker, automatically rejected for hundreds of roles, filed a lawsuit that put a legal spotlight on what many had suspected: AI-driven hiring systems can be flawed and discriminatory. The failure wasn’t just technological; it was a failure of leadership and a lack of human-centered governance.

This post will break down the five most surprising and critical truths about AI in hiring that HR leaders can no longer ignore. These are not technical problems for your IT department; they are fundamental leadership challenges that land squarely on your desk.

1. Your Biggest AI Risk Isn’t a “Bad Algorithm”—It’s Your Own Disconnected Decisions

Catastrophic AI failures, such as the one in the Mobley case, are not caused by a single piece of faulty technology. Instead, they are the result of an “AI Failure Trap”—dozens of small, seemingly rational decisions made in isolation by different departments. Procurement approves a cost-saving measure, IT deploys a system, HR provides the data sets, and Legal reviews the disclaimers. Each decision makes sense on its own, but together they can create a perfect storm of systemic discrimination.

The worst organizational failures are not caused by bad people. They are caused by disconnected decisions that seem rational in isolation.

This is a critical shift in thinking for HR leaders. It moves the problem from a mysterious “tech black box” into your direct sphere of influence. The root cause isn’t the code; it’s the organizational systems, processes, and decision-making frameworks you oversee.

2. You’re Asking the Wrong Question About AI Risk

When something goes wrong, traditional risk management asks, “Who made the bad decision?” This approach is ineffective for AI failures, where responsibility is distributed among many stakeholders—Procurement, IT, HR, and Legal. When everyone is responsible, no one is accountable.

A modern governance framework like the SimpliFocus™ system poses a different, more powerful question: “How did our decisions connect to create bias?” This question calls for a systemic view, focusing on the links between departments and decisions, where the true failure points lie. By examining the entire decision-making chain, you can shift from reactive firefighting after a disaster to proactive AI governance that prevents disasters from happening in the first place.

3. AI Governance Isn’t an IT Problem; It’s a Human Leadership Issue

Many leaders mistakenly assume that AI governance is the responsibility of Legal or IT. They see it as a technical or compliance checkbox. This is a dangerous assumption. Because AI in hiring is fundamentally a “people issue,” its governance is a human leadership issue.

Imagine this: It’s 9 AM. Your General Counsel calls. An algorithmic discrimination lawsuit was filed against your organization overnight because your AI hiring tool rejected 100,000 qualified candidates. Your name is listed as the responsible party. What would you say? Would you know which systems were running? Could you explain how they made decisions or provide documentation showing they were tested for fairness? As an HR leader, you must be a proactive steward of these systems, not just a passive user.

4. You Don’t Need a Data Science PhD to Prevent AI Bias

The prospect of governing AI can feel overwhelming, but you do not need to become a technical expert to do it effectively. The key is understanding your organizational systems and asking the right questions. The solutions involve leadership, process, and accountability—not advanced computer science.

The Mobley case is being argued under employment law, not computer science. The court’s attention is on outcomes and organizational responsibility. Technical complexity did not shield Workday from those claims. It will not shield you.

Effective governance is within your capabilities. It entails implementing a clear governance architecture, establishing rigorous protocols for vendor assessment, and testing your systems for biased outcomes. These are leadership and risk-management functions, not data-science problems.
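To make “testing your systems for biased outcomes” concrete, here is a minimal sketch of one widely used screening heuristic, the four-fifths (80%) rule from the U.S. Uniform Guidelines on Employee Selection Procedures. It is an illustration under stated assumptions, not the method used in the Mobley case or a legal test of discrimination: the group labels, the sample counts, and the function names are all hypothetical, and a real audit would also need statistical significance testing and legal review.

```python
from collections import Counter

def selection_rates(records):
    """records: iterable of (group, advanced) pairs. Returns {group: rate}."""
    totals, advanced = Counter(), Counter()
    for group, was_advanced in records:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {g: advanced[g] / totals[g] for g in totals}

def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody advanced in any group, so there is no disparity to measure.
        return {g: 1.0 for g in rates}
    return {g: r / top for g, r in rates.items()}

def flag_groups(ratios, threshold=0.8):
    """Groups whose ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)

# Hypothetical screening outcomes: (age band, advanced past the AI screen?)
records = (
    [("under_40", True)] * 60 + [("under_40", False)] * 40
    + [("40_plus", True)] * 30 + [("40_plus", False)] * 70
)

rates = selection_rates(records)
ratios = adverse_impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

The arithmetic is simple enough for any HR leader to request from a vendor or an analyst: who advanced, by group, and how far does each group’s rate sit from the highest one? A flagged group is a prompt for investigation and documentation, not a verdict.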

5. If You Can’t Name the Owner, You Don’t Have AI Governance

Here is a simple, stark reality check: Who in your organization is responsible for governing the AI used in talent decisions? If you cannot name a single person, effective governance does not exist. When responsibility is diffuse, “everyone and no one” is accountable, leaving your organization without a safety net.

Turning governance from a vague idea into a real organizational practice begins with establishing a “Governance Architecture” with clear ownership—Step 3 of the SimpliFocus™ system. This involves assigning a named owner, creating RACI frameworks, and defining clear escalation paths. Without a designated leader accountable for the entire process, any governance effort is destined to fail.
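The ownership test can be written down as a simple check. The sketch below is hypothetical: the record fields, system names, and role titles are assumptions made for illustration, not part of the SimpliFocus™ system. It relies on the common RACI convention that each item has exactly one Accountable party, and it flags any AI system that has no single named owner or no escalation path.

```python
from dataclasses import dataclass, field

@dataclass
class GovernanceRecord:
    system: str
    accountable: list                                     # the "A" in RACI
    responsible: list = field(default_factory=list)       # the "R" in RACI
    escalation_path: list = field(default_factory=list)   # ordered contacts

def governance_gaps(record):
    """Return a list of ownership gaps for one AI system."""
    gaps = []
    if len(record.accountable) == 0:
        gaps.append("no accountable owner")
    elif len(record.accountable) > 1:
        gaps.append("multiple accountable owners")
    if not record.escalation_path:
        gaps.append("no escalation path")
    return gaps

# Hypothetical inventory of AI tools used in talent decisions.
registry = [
    GovernanceRecord("resume_screener", ["VP Talent Acquisition"],
                     ["Recruiting Ops"], ["CHRO", "General Counsel"]),
    GovernanceRecord("video_interview_scoring", [], ["IT"]),
    GovernanceRecord("promotion_ranker", ["CHRO", "CIO"], [], ["CEO"]),
]

report = {r.system: governance_gaps(r) for r in registry}
for system, gaps in report.items():
    print(system, "->", gaps or "ok")
```

An empty list means the system passes this narrow check. Anything else is a system where “everyone and no one” is accountable.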

Your Governance Journey Starts Now

Ultimately, AI governance is not about creating bureaucracy or mastering technology. It’s a fundamental act of leadership and a commitment to protecting people—your candidates, employees, and organization. It’s about ensuring that efficiency never comes at the expense of empathy and that innovation serves people rather than filtering them out.

Your AI systems are running right now. If the call about a lawsuit came tomorrow, would you have the documentation to defend your decisions?

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles, the gap where the Mobley lawsuit arose. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.