6 Ways the EU AI Act Could Reshape U.S. Talent Strategy

Why U.S. HR Leaders Can’t Ignore the EU AI Act

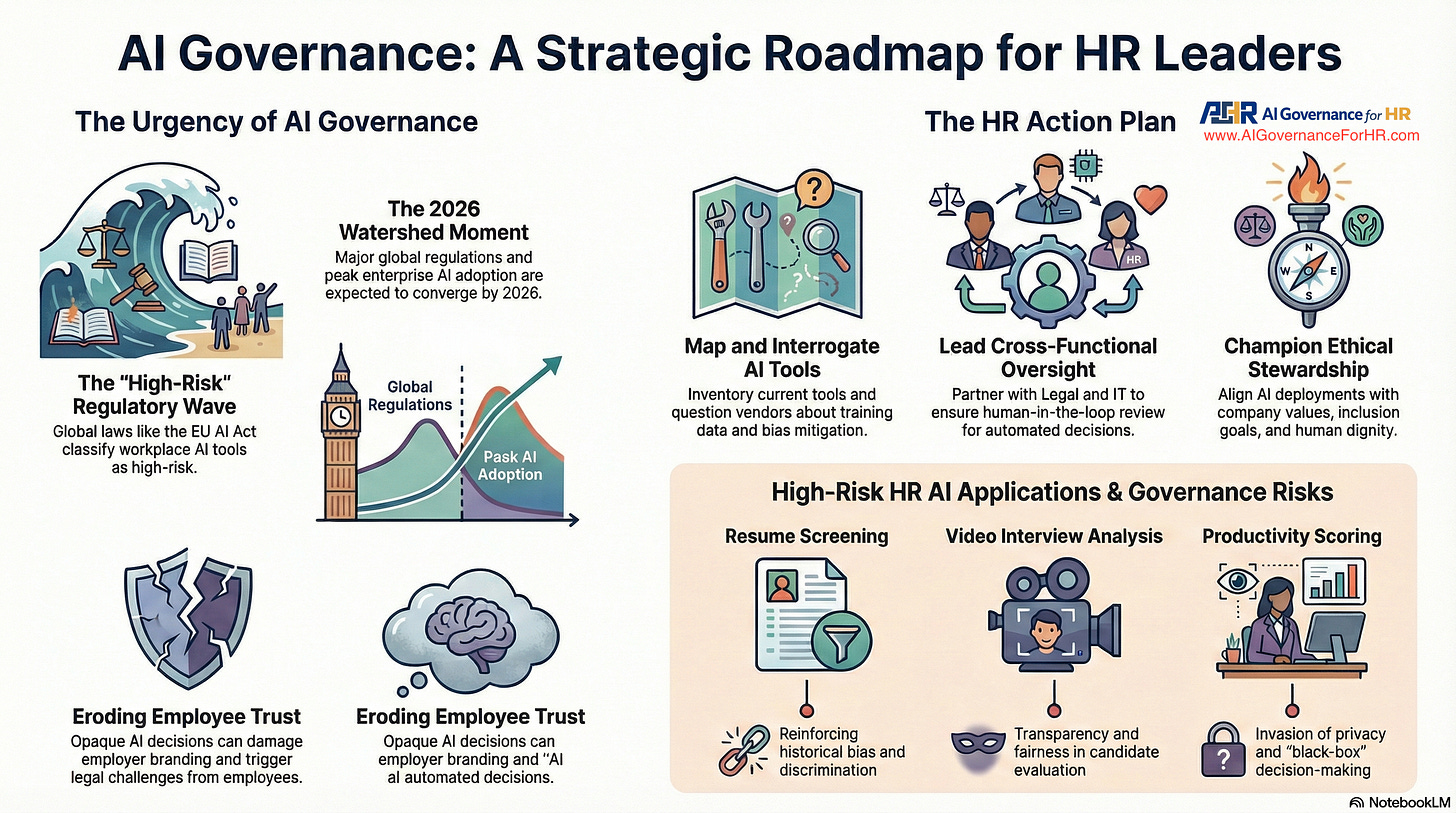

The European Union’s Artificial Intelligence Act (EU AI Act), adopted in 2024 and in force since August 1, 2024, is the world’s first comprehensive legal framework for AI. Most of its obligations for high-risk systems apply from August 2, 2026, and it is not just a European issue. For U.S.-based HR and talent leaders, the ripple effects of this legislation are already evident.

The Act classifies many employment-related AI systems as “high-risk,” imposing strict requirements for transparency, documentation, human oversight, and bias prevention. Even if your organization is headquartered in the U.S., you may be subject to these requirements when your AI-driven hiring or evaluation tools are used on candidates or employees in the EU.

Beyond legal exposure, the risks include reputational damage, loss of candidate trust, and class-action lawsuits under existing U.S. discrimination laws. For any company hiring in the EU, the EU AI Act is not optional; it’s a compliance imperative.

This article breaks down six critical ways the Act could reshape your talent strategy—and outlines what your team must do now.

1. The EU AI Act’s Extraterritorial Reach

One of the Act’s most powerful features is its extraterritorial reach. The EU AI Act applies well beyond Europe’s borders, affecting companies that:

Use AI to screen or assess candidates located in the EU

Evaluate EU-based employees, regardless of their citizenship

Operate offices within EU Member States

For example, if your U.S. talent team uses AI to screen applicants for a Paris-based role, the system falls under EU jurisdiction—even if the technology is deployed from New York.

This mirrors the global impact of the GDPR, which forced multinational organizations to overhaul how they manage personal data. Now, the same reckoning applies to AI-powered employment decisions.

Headquarters location doesn’t matter—candidate location does. HR leaders must treat AI compliance as an international issue, not merely a domestic one.

2. High-Risk Classification for Employment AI

The EU AI Act classifies AI systems used for key employment decisions as “high-risk” (Article 6 and Annex III, point 4). This includes technologies that affect:

Hiring or candidate screening

Promotion, performance evaluation, or termination

Task allocation based on behavior or traits

That means popular tools such as resume screeners, automated video interviews, assessments, and performance management platforms are likely to face the Act’s highest level of scrutiny, as can scheduling software that allocates work based on individual behavior or traits.
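For teams starting an inventory, this first triage step can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the use-case labels, the `AITool` type, and the `triage` function are hypothetical, the labels only paraphrase Annex III, point 4, and the Article 6(3) exemptions are not modeled. A match should trigger legal review, not settle the question.

```python
from dataclasses import dataclass, field

# Hypothetical labels paraphrasing the employment use cases in Annex III,
# point 4 of the EU AI Act. These are not official identifiers.
ANNEX_III_EMPLOYMENT_USES = {
    "recruitment_or_selection",          # job ads, filtering applications, evaluating candidates
    "work_terms_promotion_termination",  # decisions on work terms, promotion, termination
    "task_allocation_by_traits",         # allocating tasks based on individual behavior or traits
    "performance_monitoring",            # monitoring and evaluating performance and behavior
}


@dataclass
class AITool:
    name: str
    uses: set[str] = field(default_factory=set)  # hypothetical use-case labels


def triage(tools: list[AITool]) -> dict[str, list[str]]:
    """Split an inventory into tools that likely need a high-risk review and the rest.

    Article 6(3) exemptions are deliberately not modeled, so a match is a
    prompt for legal review, not a legal conclusion.
    """
    result = {"likely_high_risk": [], "other": []}
    for tool in tools:
        bucket = "likely_high_risk" if tool.uses & ANNEX_III_EMPLOYMENT_USES else "other"
        result[bucket].append(tool.name)
    return result


inventory = [
    AITool("resume screener", {"recruitment_or_selection"}),
    AITool("shift scheduler", {"task_allocation_by_traits"}),
    AITool("meeting-room booking", {"facilities"}),
]
print(triage(inventory))
# {'likely_high_risk': ['resume screener', 'shift scheduler'], 'other': ['meeting-room booking']}
```

Tagging tools by what they are used for, rather than by product category, matches how the Act draws the line: the same scheduling engine can be in scope in one deployment and out of scope in another.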

Once labeled high-risk, a system must meet strict compliance requirements, including:

Transparency sufficient for deployers to interpret and appropriately use outputs (Article 13)

Documented risk management (Article 9) and data governance, including examination of data for possible biases (Article 10)

Human oversight by people with the competence and authority to intervene (Articles 14 and 26)

Full technical documentation and decision logs (Articles 11 & 12)

Fundamental rights impact assessments for public bodies and private entities providing public services (Article 27)

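The penalties for non-compliance are steep. Breaching the high-risk obligations can cost up to €15 million or 3% of total worldwide annual turnover, whichever is higher, and prohibited AI practices carry fines of up to €35 million or 7% (Article 99). Reputational damage and legal exposure come on top.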
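The fine caps follow a “whichever is higher” rule, which a short Python function makes concrete. The function name and parameters are illustrative; the defaults reflect Article 99(4) as we read it, and the result is a ceiling, not a prediction of the fine a regulator would actually impose.

```python
def max_fine_eur(worldwide_turnover_eur: int,
                 fixed_cap_eur: int = 15_000_000,
                 turnover_pct: int = 3) -> float:
    """Return the fine ceiling: the higher of a fixed amount or a share of turnover.

    Defaults mirror Article 99(4), which covers breaches of most operator
    obligations, including those for high-risk systems. For SMEs, Article 99(6)
    applies whichever amount is lower; that case is not modeled here.
    """
    turnover_based = worldwide_turnover_eur * turnover_pct / 100
    return max(fixed_cap_eur, turnover_based)


print(max_fine_eur(200_000_000))    # 3% is 6M, so the 15M fixed cap applies: 15000000
print(max_fine_eur(2_000_000_000))  # 3% is 60M, which exceeds 15M: 60000000.0
```

For large multinationals, the percentage term dominates, which is why the turnover-based cap matters more than the headline euro figure.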

U.S. HR teams must now evaluate AI tools not only for utility but also for compliance readiness.

3. Documentation and Audit Mandates

With a high-risk classification comes a new standard of accountability: documentation must be complete, traceable, and audit-ready.

The EU AI Act requires:

Technical Documentation (Article 11): Providers must document how the AI works, including its logic, purpose, and decision criteria. Employers using vendor tools should obtain the information they need to operate and oversee them.

Decision Logging (Articles 12 and 26): High-risk systems must log events automatically, and deployers must keep the logs under their control for at least six months, enabling post-hoc reviews and audits.

Bias Mitigation Records (Articles 9 and 10): Expect to document, or obtain from your vendor, evidence of how bias in both training data and outcomes was identified and mitigated.

Impact Assessments (Article 27): Where required, deployers must assess potential impacts on fundamental rights and document the findings.
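The Act does not prescribe a particular bias metric. As one illustration of what a documented outcome check might record, the sketch below computes each group’s selection rate relative to the highest group’s rate and flags any group below 80%, the “four-fifths” rule of thumb from the U.S. Uniform Guidelines on Employee Selection Procedures. This is a swapped-in U.S. heuristic, not an EU requirement; the function names and numbers are hypothetical.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """outcomes maps group -> (selected, applicants); groups with no applicants are skipped."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        # No one was selected (or there is no data): ratios are undefined,
        # so return nothing and review these cases manually.
        return {}
    return {g: r / best for g, r in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]],
                        threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths rule)."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Made-up numbers for illustration only.
screening = {"group_a": (48, 100), "group_b": (30, 100)}
print(impact_ratios(screening))        # group_b is about 0.625
print(flag_adverse_impact(screening))  # ['group_b']
```

A flag is a reason to investigate and record what was found, not proof of discrimination; small groups in particular can produce unstable ratios.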

This is not compliance theater. If an algorithm rejects 100 candidates, you must show why—with evidence.

Without this, your organization will struggle to defend itself in audits, lawsuits, or media scandals. Documentation isn’t a formality—it’s your legal defense strategy.
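Showing why an algorithm rejected 100 candidates presupposes that each decision was logged with its reasons. The sketch below assumes a hypothetical `DecisionLogEntry` record and approximates the Article 26(6) six-month minimum as 183 days; the field names, helper functions, and retention figure are illustrative assumptions, not a format the Act defines.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Article 26(6): deployers keep logs under their control for at least six months.
# Approximated here as 183 days; other EU or national law may require longer.
MIN_RETENTION = timedelta(days=183)


@dataclass(frozen=True)
class DecisionLogEntry:
    system_id: str
    candidate_ref: str               # pseudonymous reference rather than a name
    recorded_at: datetime            # timezone-aware UTC timestamp
    ai_output: str                   # e.g. "advance" or "reject"
    main_factors: tuple[str, ...]    # factors reported by the system for this output
    reviewer: Optional[str] = None   # person who reviewed the output, if any


def may_delete(entry: DecisionLogEntry, now: datetime,
               min_retention: timedelta = MIN_RETENTION) -> bool:
    """True once the minimum retention period has elapsed (a floor, not a deletion rule)."""
    return now - entry.recorded_at >= min_retention


def rejection_reasons(entries: list[DecisionLogEntry]) -> Counter:
    """Count the factors cited across rejections, e.g. to answer 'why were these rejected?'."""
    return Counter(f for e in entries if e.ai_output == "reject" for f in e.main_factors)


log = [
    DecisionLogEntry("screener-v2", "cand-001", datetime(2026, 1, 1, tzinfo=timezone.utc),
                     "reject", ("missing required certification",)),
    DecisionLogEntry("screener-v2", "cand-002", datetime(2026, 1, 1, tzinfo=timezone.utc),
                     "reject", ("missing required certification", "location mismatch")),
]
print(rejection_reasons(log).most_common(1))  # [('missing required certification', 2)]
print(may_delete(log[0], datetime(2026, 3, 1, tzinfo=timezone.utc)))  # False
```

Storing a pseudonymous reference instead of a name keeps the audit trail useful while limiting how much personal data the log itself holds.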

4. Candidate Rights and Transparency Requirements

The EU AI Act grants new rights to candidates affected by AI-based decisions—and demands greater transparency from employers.

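Under the Act (Articles 26(11) and 86) and the GDPR, organizations must:

Inform candidates and employees when a high-risk AI system is used in decisions about them

Describe what data is being used and why

Explain, on request, the AI system’s role in a decision and the main elements of that decision, especially in cases of rejection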

Where a decision is based solely on automated processing, candidates can also invoke their GDPR right (Article 22) to obtain human intervention and contest it. This means AI outputs can’t remain a mystery. “The algorithm decided” is no longer acceptable.

For HR teams, this transparency requirement is twofold:

Legal: Systems whose decisions can’t be explained leave employers unable to meet these EU obligations.

Strategic: Candidates demand fairness and clarity. Employers that can’t provide it risk losing top talent and damaging their employer brand.

Transparency builds trust. It also protects against lawsuits and regulatory action.

5. Required Human Oversight of AI Decisions

The EU AI Act mandates a human-in-the-loop—someone with the competence, training, and authority to review and override AI decisions.

Article 14 requires this oversight to be established before deployment, not after an incident has occurred. It’s a preemptive control, not a reactive fix.

This breaks a dangerous pattern across many organizations: deploying AI tools with limited internal understanding and full reliance on vendors. Under the law, that’s not just negligent—it’s noncompliant.

HR leaders must ensure that qualified personnel are assigned to:

Monitor AI decisions

Understand the rationale behind them

Intervene when necessary
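These oversight duties can be enforced in the decision workflow itself. In this minimal sketch, the `Reviewer`, `FinalDecision`, and `finalize` names are hypothetical, and “trained” and “can override” stand in for whatever competence and authority criteria your organization documents; the Act requires the oversight, not this particular data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reviewer:
    name: str
    trained: bool        # completed training on this specific system
    can_override: bool   # formally authorized to change the outcome


@dataclass(frozen=True)
class FinalDecision:
    ai_recommendation: str
    final_outcome: str
    reviewer: str
    overridden: bool
    rationale: str


def finalize(ai_recommendation: str, reviewer: Reviewer,
             final_outcome: str, rationale: str) -> FinalDecision:
    """Record a human sign-off on an AI recommendation, refusing unqualified reviewers."""
    if not (reviewer.trained and reviewer.can_override):
        raise PermissionError(f"{reviewer.name} is not authorized to sign off this decision")
    if not rationale.strip():
        raise ValueError("a rationale is required so the review can be audited later")
    return FinalDecision(
        ai_recommendation=ai_recommendation,
        final_outcome=final_outcome,
        reviewer=reviewer.name,
        overridden=final_outcome != ai_recommendation,
        rationale=rationale,
    )


lead = Reviewer("talent lead", trained=True, can_override=True)
d = finalize("reject", lead, "advance", "Parser missed an equivalent certification")
print(d.overridden)  # True
```

Recording the AI recommendation next to the final outcome makes it visible, in an audit, whether reviewers ever actually intervene.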

Ultimately, you, the employer, are the “deployer” under the Act (Article 3(4)), and Article 26 sets out your obligations. You cannot outsource accountability to a software vendor.

If your team lacks the capacity to understand and control the system, it shouldn’t be in use.

6. What U.S.-Based Companies Hiring Globally Must Do Now

The clock is ticking toward August 2026. Here’s your action plan:

Classify Your AI Tools: Determine which are high-risk under Article 6 and Annex III, which covers most tools touching hiring, promotion, or evaluation.

Assign Ownership: Name one accountable leader per system. Article 26 makes the deployer accountable, and that accountability works best with a single owner rather than as a group project.

Audit Vendors Independently: Don’t rely on marketing claims. Test for bias, transparency, and legal risk yourself (Articles 9, 10, and 13).

Build Transparency Channels: Train staff to explain decisions and support candidates who request explanations or challenge outcomes (Articles 26(11) and 86).

Implement Human Oversight: Assign trained staff with authority to override the system. Document their qualifications and involvement (Articles 14 and 26(2)).

Prepare for Audits: Keep decision logs, technical records, and any required impact assessments ready for regulatory review (Articles 11, 12, 26, and 27).
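The action plan can double as a running checklist. The sketch below assumes a hypothetical inventory record per system; each field maps loosely to one item in the plan, and the checks show which items remain open for each high-risk system. It tracks status only; it does not assess whether the underlying work satisfies the Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SystemRecord:
    name: str
    high_risk: bool
    owner: Optional[str] = None
    oversight_assigned: bool = False   # trained reviewers with override authority
    candidate_notice: bool = False     # people are told the system is used on them
    logs_retained: bool = False        # logs kept for at least six months
    vendor_docs_on_file: bool = False  # instructions for use and technical information
    bias_review_done: bool = False     # independent bias testing completed


# Hypothetical checklist: (label, test that returns True when the item is done).
CHECKS = [
    ("named owner", lambda r: bool(r.owner)),
    ("human oversight", lambda r: r.oversight_assigned),
    ("candidate notice", lambda r: r.candidate_notice),
    ("log retention", lambda r: r.logs_retained),
    ("vendor documentation", lambda r: r.vendor_docs_on_file),
    ("bias review", lambda r: r.bias_review_done),
]


def readiness_gaps(records: list[SystemRecord]) -> dict[str, list[str]]:
    """For each high-risk system, list the action-plan items still missing."""
    gaps = {}
    for record in records:
        if not record.high_risk:
            continue
        missing = [label for label, passed in CHECKS if not passed(record)]
        if missing:
            gaps[record.name] = missing
    return gaps


systems = [
    SystemRecord("video interview tool", high_risk=True, owner="Head of Talent Acquisition",
                 oversight_assigned=True, candidate_notice=True),
    SystemRecord("interview room booking", high_risk=False),
]
print(readiness_gaps(systems))
# {'video interview tool': ['log retention', 'vendor documentation', 'bias review']}
```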

Global hiring now carries global governance responsibility. Compliance is a core part of your talent infrastructure, not an add-on.

From Reactive Compliance to Proactive Governance

The EU AI Act is more than legislation—it’s a call to action. It challenges HR and talent leaders to rethink how they deploy technology, measure fairness, and assume responsibility.

The future of hiring isn’t just fast and automated. It’s explainable, accountable, and equitable.

You have two choices: proactive governance or reactive crisis control.

The deadline is August 2026. But the time to act is now.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles, the gap at the center of the Mobley v. Workday lawsuit. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together Series. Our live programs are a premium edition of this CoLab.