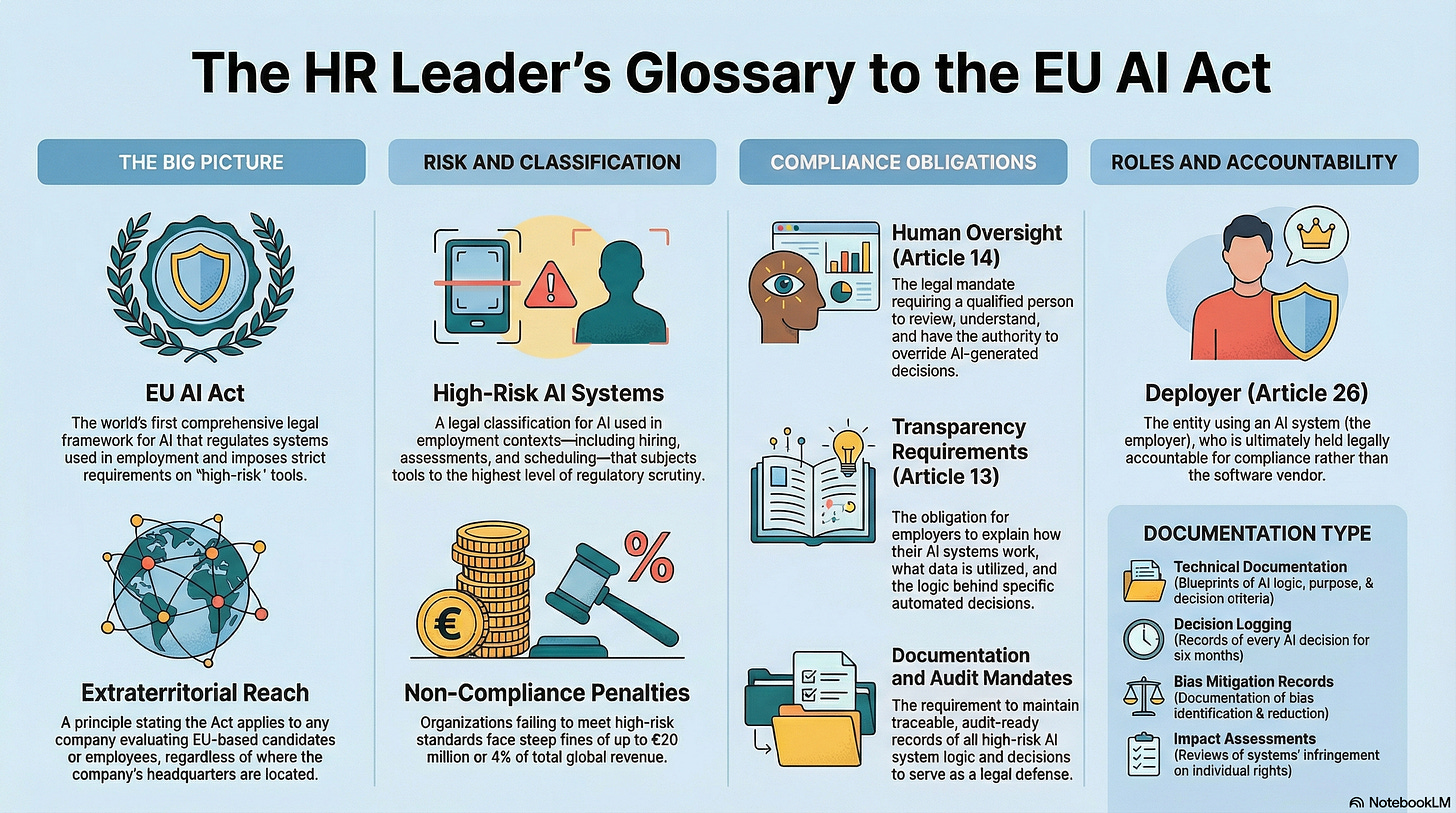

A Practical Glossary of EU AI Act Terms for Global HR Leaders

Your Quick-Start Guide to AI Compliance Terminology

The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence. It entered into force in August 2024, and its rules for high-risk systems, including most AI used in hiring and workforce management, are scheduled to apply from August 2026. For U.S.-based HR leaders who hire globally, these rules are not a future concern; they are an immediate compliance imperative. This glossary defines the essential terms you need to know to keep your talent strategy compliant and future-ready.

Part 1: The Big Picture Concepts

1. EU AI Act

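The EU AI Act (Regulation (EU) 2024/1689) is the world’s first comprehensive legal framework for AI. It was adopted in 2024 and entered into force on August 1, 2024. Its obligations phase in over several years, and most requirements for high-risk systems, including those used in employment, are scheduled to apply from August 2, 2026.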

Why It Matters for HR: The Act is not just a European issue; it is a compliance imperative for any U.S. company that recruits or employs people in the EU. It directly regulates the AI systems used in key employment decisions, classifying them as “high-risk” and imposing strict new requirements.

2. Extraterritorial Reach

This term describes the Act’s power to extend well beyond the EU’s borders, affecting companies based on their activities rather than their location.

The Act applies to any company that:

Uses AI to screen or assess candidates located in the EU

Evaluates EU-based employees, regardless of their citizenship

Operates offices within EU Member States

Why It Matters for HR: Your company’s headquarters location is irrelevant; what matters is where your candidates or employees are. Put simply, headquarters location doesn’t matter, but candidate location does. This mirrors the global impact of GDPR, but this time the reckoning applies to AI-powered employment decisions.

Now that you understand the Act’s global reach, it’s crucial to know how it categorizes the specific AI tools your team uses every day.

Part 2: The Core Classification and Its Impact

3. High-Risk AI Systems

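Under the EU AI Act, AI systems used for key employment decisions are classified as “high-risk” (Article 6 and Annex III, point 4). This category covers systems intended for recruitment and selection, such as targeting job ads, filtering applications, and evaluating candidates. It also covers systems used to make decisions on promotion or termination, to allocate tasks, or to monitor and evaluate performance and behavior.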

Examples of high-risk employment tools include:

Resume screeners

Automated video interviews

Candidate assessments

Performance management platforms

And even scheduling or task-allocation software, when it assigns work based on individual behavior or personal traits

Why It Matters for HR: This classification subjects your HR tools to the highest level of scrutiny and strict compliance requirements. Breaching the obligations for high-risk systems can bring fines of up to €15 million or 3% of your company’s total worldwide annual turnover, whichever is higher. Fines for prohibited AI practices reach up to €35 million or 7%. The strategic risks add to the financial ones: reputational fallout, loss of candidate trust, and legal exposure under existing U.S. discrimination laws.

Because employment AI is considered “high-risk,” it triggers several key obligations related to human involvement, transparency, and documentation.

Part 3: Key Compliance Obligations for High-Risk Systems

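4. Human Oversight (Articles 14 and 26)

Article 14 requires high-risk AI systems to be designed so that people can effectively oversee them, including the ability to disregard, override, or reverse an output. Article 26 then requires the employer, as deployer, to assign that oversight to people with the necessary competence, training, authority, and support. In practice, this is the legal mandate for a qualified “human-in-the-loop” who can review and override AI-driven decisions.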

Why It Matters for HR: This is a preemptive control that must be in place before the AI is deployed. Ask a critical question now: ‘Who on my team is qualified to be the human-in-the-loop, and how will we train and empower them to override an algorithm?’ Relying on your vendor is not a compliant strategy.

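5. Transparency Requirements (Articles 13, 26, and 86)

Article 13 requires high-risk AI systems to be transparent enough for deployers to interpret and use their output correctly. Each system must come with instructions that describe its capabilities, limitations, and input data. Employers must in turn inform workers and their representatives before using a high-risk system in the workplace. They must also tell candidates and employees when such a system is used in decisions about them (Article 26). People affected by a decision that significantly affects them gain the right to a clear and meaningful explanation of the AI system’s role in that decision (Article 86). The separate right to human intervention in a solely automated decision comes from GDPR Article 22.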

Why It Matters for HR: Simply stating “The algorithm decided” is no longer an acceptable explanation for a hiring or promotion decision. Transparency is therefore more than a legal requirement: it is an essential strategy for building candidate trust and defending your employer brand.

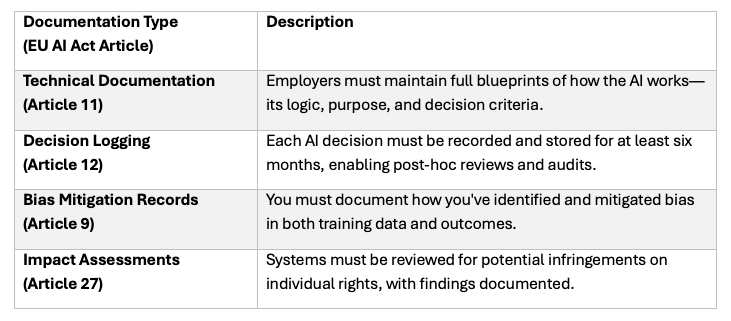

6. Documentation and Audit Mandates

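This is the requirement to maintain complete, traceable, and audit-ready records for any high-risk AI system used in your talent processes. Providers must supply technical documentation and build in automatic logging (Articles 11 and 12). As a deployer, you must keep the logs the system generates for at least six months, to the extent those logs are under your control (Article 26).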

Why It Matters for HR: Documentation is not a formality; it is your legal defense strategy. These records are essential for defending your organization in audits, regulatory actions, and potential lawsuits.

With these compliance duties in mind, it is essential to know which of them fall on your organization rather than on your vendor.

Part 4: Understanding Your Role and Responsibility

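7. Deployer (Articles 3 and 26)

A “Deployer” is any person or organization that uses an AI system under its own authority (Article 3). In the context of HR, the employer is the Deployer. The software vendor that develops the system and places it on the market is the “Provider.”

Why It Matters for HR: This term is critical because the employer, not just the software vendor, is accountable for how the AI system is used in its specific context. The vendor must build in risk management, technical documentation, and logging (Articles 9, 11, and 12). Your ‘Deployer’ status, however, places the obligations in Article 26 squarely on your shoulders. These include using the system according to its instructions, assigning qualified human oversight, monitoring its operation, keeping its logs, and informing affected workers and candidates. A vendor’s compliance does not discharge yours.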

Proactive Governance is Non-Negotiable

The EU AI Act compels HR leaders to evolve from technology users to technology governors. The future of hiring demands systems that are explainable, accountable, and equitable, leaving leaders with a clear choice: proactive governance or reactive crisis control.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.

Ready to Build Real AI Governance?

Most HR leaders have AI policies. Few have AI governance systems. Join the waiting list for “When AI Breaks the Law” and get the tools to close that gap.