AI Governance in HR: Establishing Guardrails for a Responsible Workplace

Implementing AI Governance Across the Talent Lifecycle

The Talent Cycle Is Now an AI System

Today, nearly every phase of the talent cycle is influenced by artificial intelligence. Recruiting platforms rank resumes. Interview tools analyze tone and word choice. Learning systems recommend training based on behavioral patterns. AI is not just an add-on. It has become a silent decision-maker across hiring, development, retention, and exit.

This transformation means that HR functions once driven by human judgment now rely on algorithms to evaluate people, often without employees or candidates realizing it. The risks increase when those systems are built on biased data, operate without human review, or produce decisions no one can explain.

From sourcing to succession planning, the talent cycle has become a chain of AI-driven decisions. Without proper guardrails, this chain can introduce systemic discrimination, erode trust, and lead to legal consequences.

HR leaders must recognize that the talent cycle is not only a process but a system shaped by data and governed by code. If left unchecked, it becomes a liability. With strong AI governance, however, it can become a source of innovation that enhances fairness, speed, and strategic value.

This article explores how talent leaders can set guardrails that balance innovation with integrity. You will learn to embed governance across every phase of the talent cycle, from hiring to offboarding, and ensure your AI works for people, not just data.

The Business Imperative of AI Governance

Artificial intelligence is no longer a future concept in HR. It is already embedded throughout the talent lifecycle. From resume screening and candidate ranking to performance evaluation and internal mobility, AI is driving efficiency and scale. But without clear governance, it also poses risk: legal, ethical, and reputational.

High-profile regulations, such as the EU AI Act, have heightened awareness of external compliance. Yet even in regions without strict laws, HR leaders must ask:

· Who controls our AI?

· Can we explain its decisions?

· Are we protecting our people and our brand?

AI governance is not just about meeting legal standards. It is about building trustworthy, responsible, and defensible systems. That responsibility begins within HR.

Bridging the Gap Between Innovation and Accountability

AI governance in HR refers to the policies, practices, and structures that ensure the ethical, transparent, and responsible use of artificial intelligence throughout the talent lifecycle. It is the mechanism that prevents AI from becoming a black box that makes high-impact decisions without oversight.

For HR teams, AI governance means more than checking boxes for compliance. It involves asking critical questions such as:

· Who is accountable for AI outcomes in hiring or performance evaluations?

· Can we explain how the algorithm made its decisions?

· Are we continuously testing for bias or adverse impact?

· Do we have human experts reviewing and overriding AI when necessary?

AI governance bridges the gap between innovation and accountability. It ensures that the tools HR uses to evaluate people are aligned with organizational values, legal obligations, and candidate expectations.

Whether you are using AI to screen resumes, recommend promotions, or forecast attrition, governance ensures those systems are fair, explainable, and auditable. It transforms AI from a risky convenience into a responsible business asset.

In the context of HR, AI governance is not optional. It is foundational to building equitable, defensible, and human-centered workplaces.

The Core Pillars of AI Governance in HR

Establishing AI governance begins with clear principles. For HR, four foundational pillars determine whether AI tools support a responsible workplace or expose it to risk: Accountability, Transparency and Explainability, Bias Mitigation, and Human Oversight.

Accountability

AI does not absolve HR teams of responsibility. Every AI system used in hiring, performance, or compensation must have a named owner who has the authority and knowledge to ensure it operates fairly. Without assigned accountability, organizations fall into the “accountability gap,” where outcomes are blamed on vendors, IT, or the algorithm itself.

Transparency and Explainability

HR must be able to explain how AI systems work, what data they use, and how decisions are made. This is especially important in candidate selection, where a lack of clarity can erode trust or trigger legal action. Explainability is not just technical; it must be accessible to candidates, managers, and regulators alike.

Bias Mitigation

AI systems often inherit biases from historical data. A resume screener trained on past hiring decisions may learn to favor certain schools, names, or work patterns that reflect systemic bias. Governance means regularly testing for adverse impact, reviewing training data, and intervening when patterns of unfairness emerge.
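One common first screen for adverse impact is the "four-fifths rule" from the US EEOC's Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is less than 80% of the highest group's rate is generally treated as evidence of adverse impact. The sketch below assumes you can obtain selected and applicant counts per group for one screening stage. The group labels, counts, and function names are hypothetical, and a ratio below 0.8 is a signal to investigate, not a legal conclusion; small samples in particular also call for statistical testing.

```python
from typing import Dict, Optional, Tuple

# Hypothetical input shape: group label -> (number selected, number of applicants).
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Selection rate for each group: selected / applicants."""
    rates = {}
    for group, (selected, applicants) in outcomes.items():
        if applicants <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = selected / applicants
    return rates


def four_fifths_check(
    outcomes: Outcomes, threshold: float = 0.8
) -> Dict[str, Tuple[Optional[float], bool]]:
    """Map each group to (impact ratio, flagged).

    The impact ratio is the group's selection rate divided by the highest
    group's rate; a ratio below `threshold` is flagged for review.
    """
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was selected in any group, so the ratio is undefined.
        return {group: (None, False) for group in rates}
    return {
        group: (rate / highest, rate / highest < threshold)
        for group, rate in rates.items()
    }


# Illustrative counts only, not real data.
screening_stage = {"group_a": (50, 100), "group_b": (30, 100)}
for group, (ratio, flagged) in four_fifths_check(screening_stage).items():
    print(f"{group}: impact ratio {ratio:.2f}, flagged={flagged}")
```

With these illustrative counts, group_b's selection rate is 60% of group_a's, so it would be flagged for a closer look at the training data and screening criteria.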

Human Oversight

AI should support, not replace, human decision-making. HR teams must ensure that qualified professionals review AI outputs and can override decisions when necessary. Oversight must occur before deployment and throughout the system’s lifecycle.
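One way to make "review and override" concrete is to treat each AI output as a recommendation that becomes a final decision only after a named reviewer signs off, and to require a written reason whenever the reviewer disagrees. The record types, field names, and decision labels (`advance`, `reject`) below are hypothetical. The override rate is one possible monitoring signal: a rate near zero may mean reviewers are rubber-stamping, while a high rate may mean the tool is unreliable.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Recommendation:
    """What the AI tool proposes (hypothetical fields)."""
    candidate_id: str
    ai_decision: str  # e.g. "advance" or "reject"


@dataclass
class FinalDecision:
    """What the organization actually decides, and which person decided it."""
    candidate_id: str
    ai_decision: str
    decision: str
    reviewer: str
    overridden: bool
    reason: Optional[str] = None


def finalize(rec: Recommendation, reviewer: str, decision: str,
             reason: Optional[str] = None) -> FinalDecision:
    """Record a human sign-off; an override must carry a documented reason."""
    if not reviewer:
        raise ValueError("a named reviewer must sign off on every decision")
    overridden = decision != rec.ai_decision
    if overridden and not reason:
        raise ValueError("overriding the AI requires a documented reason")
    return FinalDecision(rec.candidate_id, rec.ai_decision, decision,
                         reviewer, overridden, reason)


def override_rate(decisions: List[FinalDecision]) -> float:
    """Share of decisions in which the reviewer overrode the AI."""
    if not decisions:
        return 0.0
    return sum(d.overridden for d in decisions) / len(decisions)


# Usage: one confirmation and one documented override.
log = [
    finalize(Recommendation("c-101", "advance"), "reviewer_1", "advance"),
    finalize(Recommendation("c-102", "reject"), "reviewer_1", "advance",
             reason="relevant experience listed under a different job title"),
]
print(f"override rate: {override_rate(log):.0%}")
```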

These four pillars form the blueprint for building AI systems that are not only functional but also fair, explainable, and aligned with organizational values.

Designing a Practical AI Governance Framework for HR

Turning AI governance into an operational system requires a structured, repeatable framework. HR leaders do not need to reinvent enterprise compliance models. Instead, they can adapt proven governance approaches to the talent lifecycle’s unique risks and workflows.

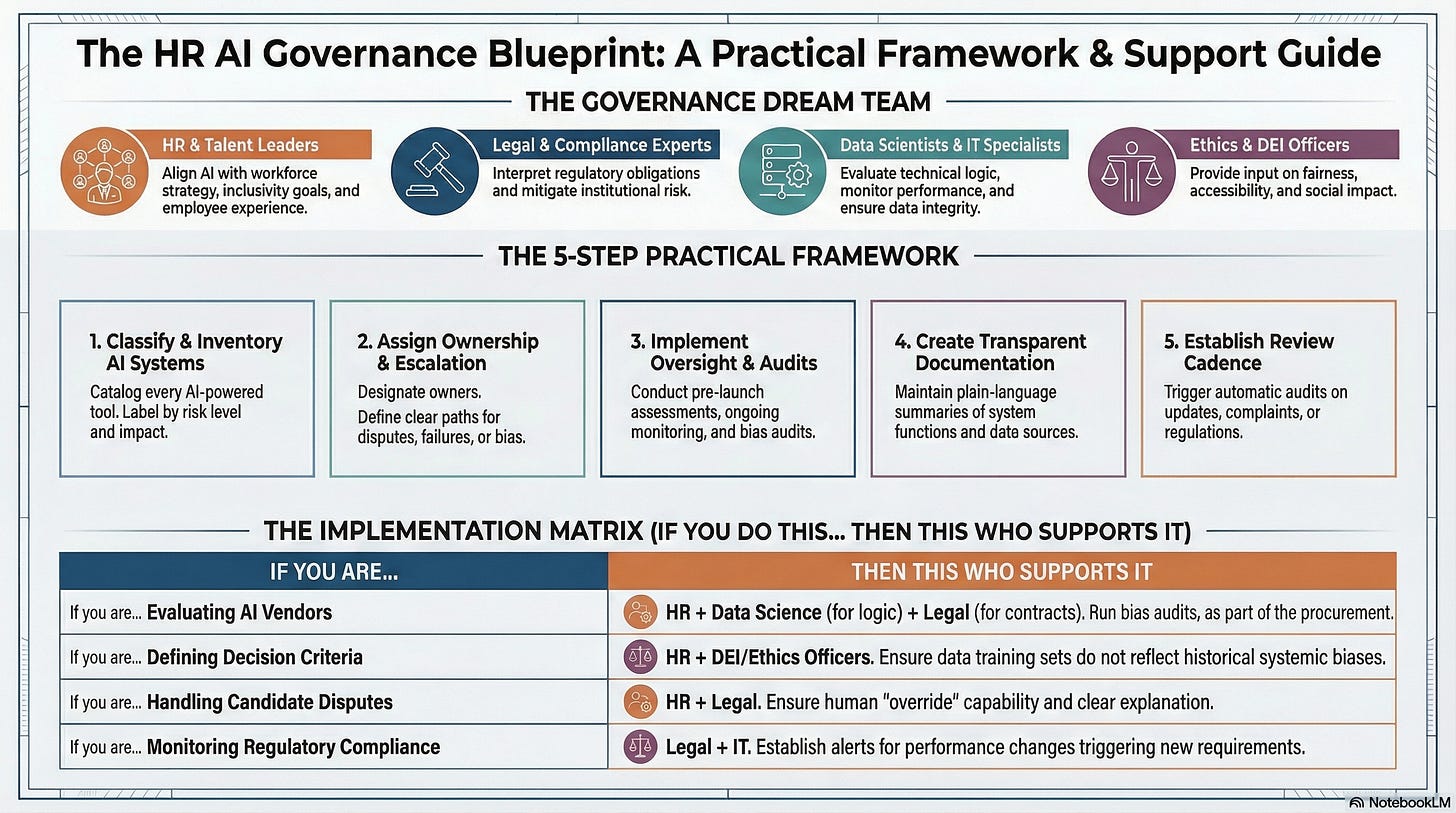

Here is a five-step governance architecture tailored for HR:

1. Classify AI Systems

Inventory all AI-powered tools used across the employee lifecycle. Identify which systems influence hiring, promotion, performance management, or termination. Label them by risk level and by their impact on individuals.
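A minimal sketch of this inventory step, assuming a simple three-tier scheme: systems that affect specific individuals in hiring, promotion, performance, or termination are high risk, other systems that affect individuals are medium risk, and aggregate tools are low risk. The tier names, rules, and example systems are illustrative assumptions, not a regulatory classification; regulations such as the EU AI Act define their own categories, so legal review should confirm any mapping.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List, Tuple


class RiskTier(Enum):
    HIGH = 3
    MEDIUM = 2
    LOW = 1


# Decision areas this step treats as high impact.
HIGH_IMPACT_AREAS = frozenset({"hiring", "promotion", "performance", "termination"})


@dataclass(frozen=True)
class AISystem:
    name: str
    decision_areas: FrozenSet[str]
    affects_individuals: bool  # does its output change an outcome for a specific person?


def classify(system: AISystem) -> RiskTier:
    """Assign a tier using the illustrative rules described above."""
    if system.affects_individuals and system.decision_areas & HIGH_IMPACT_AREAS:
        return RiskTier.HIGH
    if system.affects_individuals:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def inventory_by_risk(systems: List[AISystem]) -> List[Tuple[str, RiskTier]]:
    """Return (name, tier) pairs, highest risk first, for the governance register."""
    ranked = sorted(systems, key=lambda s: classify(s).value, reverse=True)
    return [(s.name, classify(s)) for s in ranked]


# Hypothetical inventory entries.
inventory = [
    AISystem("attrition forecast (team-level)", frozenset({"workforce_planning"}), False),
    AISystem("learning recommender", frozenset({"development"}), True),
    AISystem("resume screener", frozenset({"hiring"}), True),
]
for name, tier in inventory_by_risk(inventory):
    print(f"{tier.name:<6} {name}")
```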

2. Assign Ownership and Escalation Paths

Each AI system should have a designated owner, typically someone in HR or legal who understands the tool and is accountable for its outcomes. Define clear escalation paths for issues such as candidate disputes, system failures, or bias indicators.
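The ownership step lends itself to a simple registry check: every system needs a named owner and a route for each issue type named here (candidate disputes, system failures, bias indicators). The registry layout, role titles, and function names below are hypothetical; in practice the register might live in a governance tool or spreadsheet rather than in code.

```python
from typing import Dict, List

# Issue types named in this step; every system needs a route for each.
REQUIRED_ISSUES = ("candidate_dispute", "system_failure", "bias_indicator")

# Hypothetical registry: system -> named owner and escalation routes.
REGISTRY: Dict[str, dict] = {
    "resume_screener": {
        "owner": "Director, Talent Acquisition",
        "escalation": {
            "candidate_dispute": "HR Business Partner",
            "system_failure": "HRIS support",
            "bias_indicator": "Legal and Compliance",
        },
    },
    "promotion_recommender": {
        "owner": "",  # not yet assigned: an accountability gap
        "escalation": {"system_failure": "HRIS support"},
    },
}


def governance_gaps(registry: Dict[str, dict]) -> List[str]:
    """List every missing owner or escalation route."""
    gaps = []
    for system, entry in registry.items():
        if not entry.get("owner"):
            gaps.append(f"{system}: no named owner")
        routes = entry.get("escalation", {})
        for issue in REQUIRED_ISSUES:
            if not routes.get(issue):
                gaps.append(f"{system}: no escalation path for {issue}")
    return gaps


def escalate(registry: Dict[str, dict], system: str, issue: str) -> str:
    """Return who handles an issue, falling back to the owner; fail loudly if neither exists."""
    entry = registry.get(system, {})
    target = entry.get("escalation", {}).get(issue) or entry.get("owner")
    if not target:
        raise LookupError(f"no one is accountable for {issue} on {system}")
    return target


for gap in governance_gaps(REGISTRY):
    print(gap)
```

Failing loudly when no one is accountable is the design choice here: it surfaces the "accountability gap" at the moment an issue arises instead of letting it default to a vendor or to IT.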

3. Implement Oversight and Audit Processes

Conduct a pre-launch risk assessment before deploying any high-impact AI system. Implement ongoing monitoring, including bias audits, performance reviews, and spot checks. Document all findings and decisions.
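For the spot checks in this step, one simple approach is to draw a random sample of recent AI decisions for human re-review and document what reviewers found. The sampling rate, minimum sample size, record fields, and function names below are assumptions for the governance team to set. Passing a seed makes the sample reproducible, which helps when auditors later ask how cases were chosen.

```python
import random
from datetime import date
from typing import List, Optional, Sequence


def spot_check_sample(decision_ids: Sequence[str], rate: float = 0.05,
                      minimum: int = 10, seed: Optional[int] = None) -> List[str]:
    """Randomly choose decisions for human re-review (at least `minimum`, if available)."""
    ids = list(decision_ids)
    size = min(len(ids), max(minimum, round(len(ids) * rate)))
    return random.Random(seed).sample(ids, size)


def audit_record(system: str, sample: List[str], findings: List[str],
                 reviewer: str, on: Optional[date] = None) -> dict:
    """A documented finding: what was checked, by whom, when, and what was found."""
    return {
        "system": system,
        "date": (on or date.today()).isoformat(),
        "reviewer": reviewer,
        "cases_reviewed": len(sample),
        "findings": findings or ["no issues found"],
    }


# Hypothetical usage: 1,000 screening decisions from the last cycle.
decisions = [f"decision-{i}" for i in range(1000)]
sample = spot_check_sample(decisions, seed=2024)
record = audit_record("resume_screener", sample, findings=[], reviewer="reviewer_1")
print(record["cases_reviewed"], record["findings"])
```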

4. Create Transparent Documentation

Maintain plain-language summaries of each system’s function, decision criteria, and data sources. Ensure this documentation is accessible to internal stakeholders and, when appropriate, to candidates or regulators.

5. Establish Review Cadence and Compliance Triggers

Set up regular governance reviews, either quarterly or twice a year. Include automatic reviews triggered by system updates, candidate complaints, or regulatory changes.
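The cadence and triggers in this step can be expressed as a single check that returns every reason a review is due. The day counts used to approximate quarterly and twice-yearly (semiannual) cycles, the event names, and the function name are assumptions for illustration.

```python
from datetime import date, timedelta
from typing import Iterable, List

# Approximate cycle lengths; adjust to the calendar your organization uses.
CADENCE = {"quarterly": timedelta(days=91), "semiannual": timedelta(days=182)}

# Events this step names as automatic review triggers.
TRIGGER_EVENTS = {"system_update", "candidate_complaint", "regulatory_change"}


def review_reasons(last_review: date, today: date, cadence: str,
                   events: Iterable[str] = ()) -> List[str]:
    """Return why a governance review is due (an empty list means none is due)."""
    reasons = []
    if today - last_review >= CADENCE[cadence]:
        reasons.append(f"scheduled {cadence} review")
    for event in events:
        if event in TRIGGER_EVENTS:
            reasons.append(f"triggered by {event}")
    return reasons


# A vendor model update triggers a review well before the quarterly date.
print(review_reasons(date(2024, 1, 1), date(2024, 2, 1), "quarterly",
                     events=["system_update"]))
```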

This framework helps HR leaders embed governance into their operating rhythm, transforming AI from a static tool into a dynamic, responsible solution.

Building Cross-Functional AI Governance Teams

AI governance cannot be owned by HR alone. It requires cross-disciplinary collaboration to ensure systems are ethical, legal, and operationally sound.

A strong AI governance team should include:

· HR and Talent Leaders to align AI use with workforce strategy and inclusivity goals

· Legal and Compliance Experts to interpret regulatory obligations and mitigate risk

· Data Scientists or IT Specialists to evaluate system logic, performance, and data integrity

· Ethics or DEI Officers to provide input on fairness, accessibility, and social impact

This team should meet regularly, share documentation, and coordinate on vendor selection, risk assessments, and policy updates. Cross-functional governance keeps oversight proactive rather than reactive.

Governance is a long-term capability, not a one-time project. Building the right team ensures it can scale and evolve alongside the technology.

Embedding Governance into Daily HR Ops

For governance to be effective, it must be embedded in the everyday flow of HR operations. This includes integrating governance checkpoints into routine activities.

Examples include:

· Running bias audits as part of vendor evaluations

· Adding explainability reviews into system procurement checklists

· Training recruiters and HR staff to spot questionable AI outputs

· Setting alerts for changes in AI performance or adverse impact
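An alert for the last item might compare each group's impact ratio (its selection rate divided by the highest group's rate) in the latest reporting period against both a fixed floor and the previous period. The sketch assumes those ratios are already computed for each period; the 0.8 floor mirrors the four-fifths rule of thumb, while the 0.10 drop tolerance, group labels, and function name are hypothetical.

```python
from typing import Dict, List

# One entry per reporting period, oldest first: group -> impact ratio.
History = List[Dict[str, float]]


def latest_period_alerts(history: History, floor: float = 0.8,
                         max_drop: float = 0.10) -> List[str]:
    """Flag groups in the latest period that fall below `floor` or drop sharply."""
    if not history:
        return []
    current = history[-1]
    previous = history[-2] if len(history) > 1 else {}
    alerts = []
    for group in sorted(current):
        ratio = current[group]
        if ratio < floor:
            alerts.append(f"{group}: impact ratio {ratio:.2f} is below {floor:.2f}")
        if group in previous and previous[group] - ratio > max_drop:
            drop = previous[group] - ratio
            alerts.append(f"{group}: impact ratio fell {drop:.2f} since the previous period")
    return alerts


# Hypothetical monthly ratios for one screening stage.
monthly = [
    {"group_a": 1.00, "group_b": 0.93},
    {"group_a": 1.00, "group_b": 0.76},
]
for alert in latest_period_alerts(monthly):
    print(alert)
```

Checking the period-over-period drop as well as the floor means a sharp decline is caught even while the ratio is still above 0.8.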

Governance also requires a cultural shift. HR should foster a safe environment where people can question AI decisions without fear of reprisal. Candidates and employees should have clear pathways to report concerns or request human review.

When governance is embedded in daily operations, it becomes automatic, defensible, and a natural part of ethical HR practice.

Responsible AI Starts with HR

Artificial intelligence can drive immense value in HR, but only when guided by clear guardrails. The responsibility to ensure AI is fair, explainable, and accountable does not belong solely to regulators or tech vendors. It begins with HR.

By establishing governance across the talent cycle, HR leaders become stewards of both innovation and integrity. They protect candidates, employees, and the business from unseen risks.

Governance is not a barrier to progress. It is what makes progress sustainable.

As AI becomes embedded in every decision about people, the role of HR shifts from process owner to system governor. It is a shift worth embracing.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together Series. Our live programs are a premium edition of this CoLab.

Don’t Wait for Your Mobley Moment

The gap between AI policies and AI governance is where $365 million lawsuits happen. Join the waiting list now and get the SimpliFocus Readiness Assessment free. Diagnose your governance gaps before they cost you.
