AI Governance Isn’t a Handbook Policy

10 Myth-Busting Questions HR Is Afraid to Ask

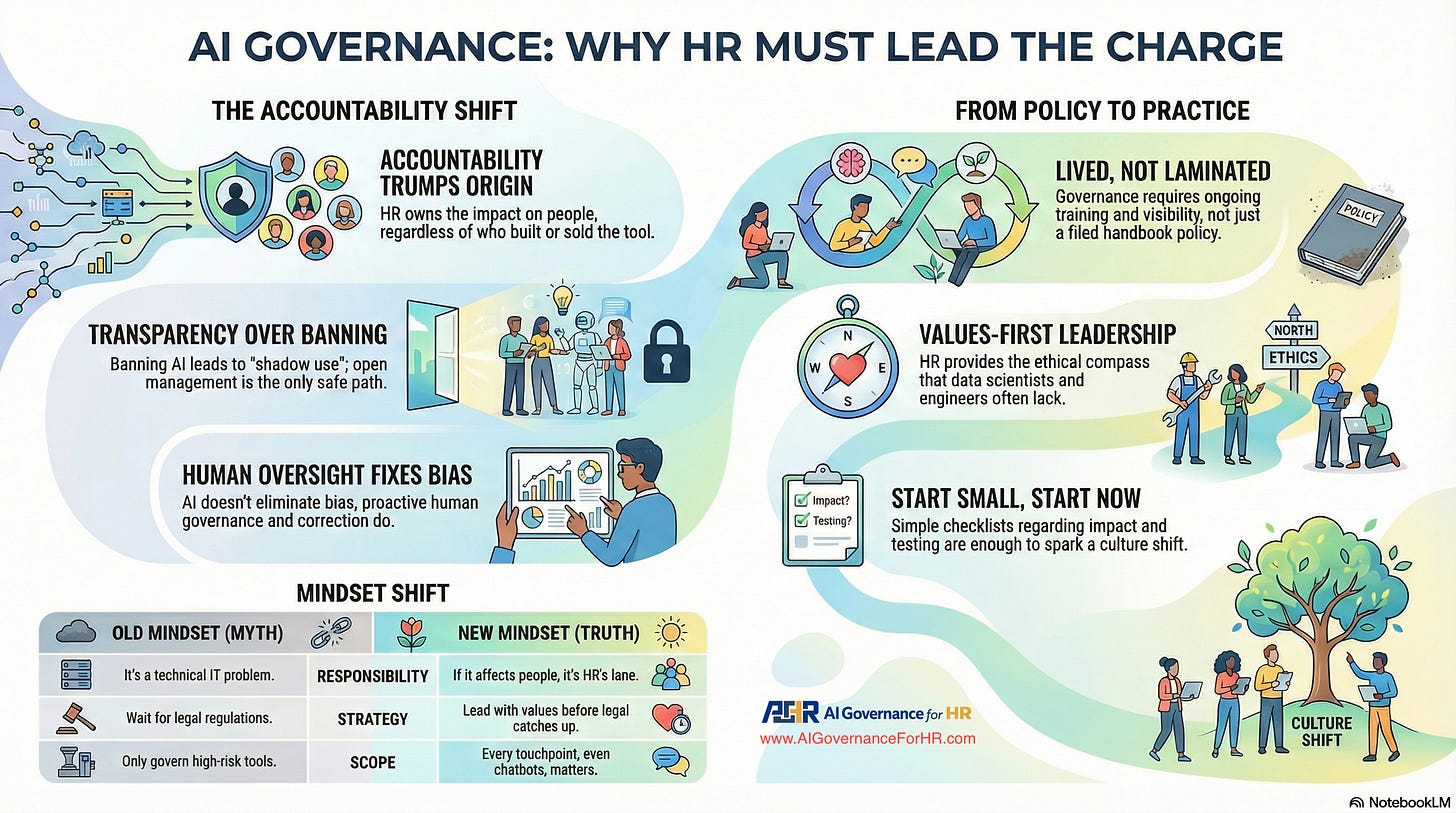

AI in HR is no longer theoretical. It’s real, active, and shaping decisions from recruiting to retention. But with that progress comes confusion, fear, and a whole lot of misconceptions.

Many HR leaders assume AI governance is a technical problem or a compliance exercise. Some think a neatly filed policy will suffice. Others worry that embracing AI could backfire, ethically or legally.

Spoiler: Governance isn’t about fear. It’s about leadership.

This article addresses 10 uncomfortable, unspoken questions HR leaders often have about AI governance. Each answer is grounded in real-world examples, not theory. It’s time to shift from hesitation to confident action.

1. Isn’t AI governance just a tech team’s problem?

Myth: “I’m in HR, not IT. AI governance isn’t my lane.”

Truth: If AI affects people, it’s your responsibility.

Story:

A global retailer deployed an AI resume screener that, unknown to the team, favored male applicants. HR didn’t code it, but they owned the fallout when the headlines broke.

Takeaway:

Governance isn’t about who built the tool. It’s about who is accountable for its impact. That means HR.

2. Can’t we just write an AI policy and move on?

Myth: “Draft a policy, file it, done.”

Truth: A policy without practice is just paper.

Story:

An HR team celebrated its 20-page AI ethics policy. But when a chatbot began giving problematic advice, no one could recall what the policy said because no one had read it in months.

Takeaway:

AI governance must be lived, not laminated. It requires training, iteration, and visibility.

3. Isn’t banning AI tools safer than managing them?

Myth: “Let’s block it and avoid the risk.”

Truth: Ban it, and employees will use it anyway without guardrails.

Story:

A firm banned generative AI. Recruiters quietly used ChatGPT anyway. One prompt produced a job ad that went viral for all the wrong reasons.

Takeaway:

You can’t govern in the dark. Transparency is safer than silence.

4. What if AI makes a biased decision and we’re blamed?

Myth: “AI bias is inevitable. Better avoid the tech.”

Truth: Bias is manageable with human oversight.

Story:

An AI tool favored external hires over internal ones. The issue? Internal performance data was historically biased. HR caught it and corrected course.

Takeaway:

Avoiding AI won’t eliminate bias. Governing it will.

5. Do we need AI governance if we only use third-party tools?

Myth: “It’s a vendor product. Not our problem.”

Truth: You are responsible for outcomes, not just software licenses.

Story:

A recruitment platform filtered out candidates with employment gaps. Legal trouble followed. HR had never asked how the AI made its decisions.

Takeaway:

Vendors provide tools. You provide accountability.

6. Isn’t AI governance too technical for HR to lead?

Myth: “We need data scientists, not HR.”

Truth: AI decisions are about people, not just data.

Story:

An HR-led team guided engineers through AI ethics grounded in company values. No code required. The result was a more inclusive performance review tool.

Takeaway:

Start with values. HR’s voice is essential.

7. Shouldn’t we wait for legal or compliance to tell us what to do?

Myth: “We’ll act when regulators force us.”

Truth: Waiting invites reputational damage long before legal risk arrives.

Story:

A delay in issuing proactive AI guidelines led to a union dispute over a scheduling algorithm. Compliance was too slow. HR took the hit.

Takeaway:

AI governance is about leadership, not just law.

8. Doesn’t AI reduce the need for human involvement in HR?

Myth: “Let AI do the grunt work. We’ll do strategy.”

Truth: AI changes how we show up, not whether we show up.

Story:

A chatbot botched onboarding by mispronouncing names and missing cultural cues. HR redesigned the experience to reflect empathy and belonging.

Takeaway:

AI still needs the human touch.

9. Is it overkill to apply governance to low-risk tools like chatbots?

Myth: “It’s just a FAQ bot. Why bother?”

Truth: Small tools can create big problems.

Story:

A wellness chatbot told a stressed employee to “push through.” The message did more harm than good. The prompt had never been reviewed.

Takeaway:

Every AI touchpoint matters. Governance includes the little things.

10. Is AI governance really worth the effort for HR?

Myth: “We’ll never get it perfect. Why try?”

Truth: You don’t need perfection. You need a starting point.

Story:

One HR team built a basic checklist: What’s the impact? Who is affected? How can we test it? That simple act sparked a culture shift.

Takeaway:

Start small. Start smart. Just start.

Governance Is a Culture Shift, Not a Compliance Checkbox

AI governance isn’t about decoding algorithms. It’s about owning the outcomes when machines influence human lives.

HR leaders are not on the sidelines in this story. They are at the center. You already lead in culture, fairness, trust, and behavior. Now it’s time to apply that leadership to AI.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.