AI On Trial: Unpacking the Landmark Mobley v. Workday Lawsuit and Its Impact on HR

A Job Seeker vs. an AI Giant

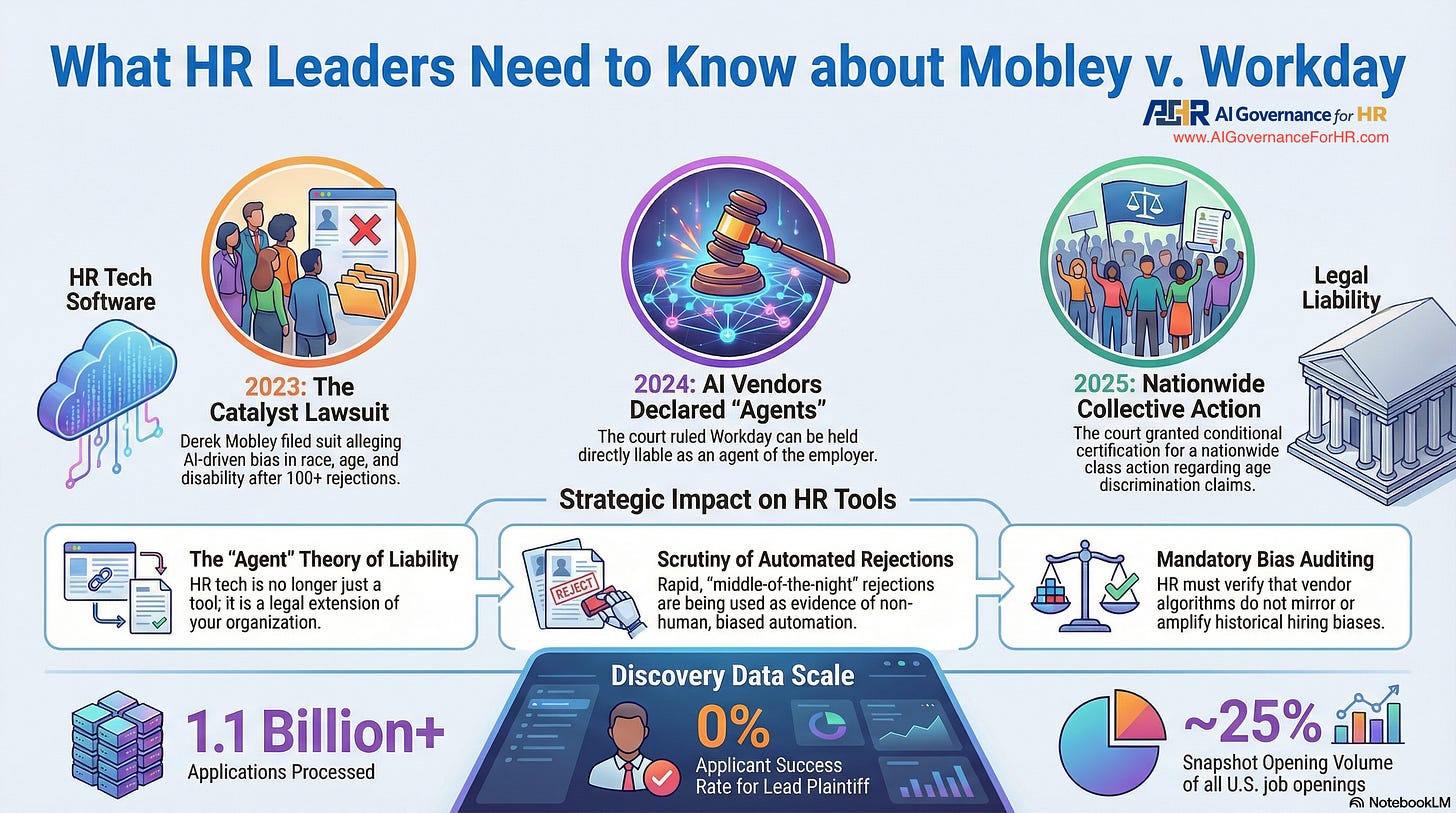

The rapid adoption of Artificial Intelligence in Human Resources promised a new era of efficiency and objectivity in hiring. Yet a landmark lawsuit, Derek Mobley v. Workday, Inc., is sending shockwaves through the industry, forcing every HR leader to confront a critical question: What happens when the AI itself is accused of discrimination? This case is more than a legal dispute; it’s a pivotal moment that could redefine liability for HR technology vendors and the companies that use their tools.

This article breaks down the essential facts of the case, examines the court’s groundbreaking decisions, and distills the critical implications for any organization that leverages AI in its hiring process.

1. The Core of the Conflict: A Job Seeker vs. an AI Giant

At the heart of this case is a confrontation between a qualified job seeker who faced repeated rejections and the powerful AI platform that stood between him and potential employers.

The Plaintiff: Derek Mobley

Protected Classes: Mr. Mobley is an African-American male over the age of 40 who also suffers from anxiety and depression.

Qualifications: He holds a Bachelor’s degree in Finance from Morehouse College and an Associate’s Degree in Network Systems Administration.

Experience with Workday: Mobley alleges that since 2017 he has applied for over 100 positions at companies that use Workday’s screening platform and has been denied employment every time.

The Defendant: Workday, Inc.

Workday is a major provider of human resources management services. The company’s platform uses artificial intelligence (AI) and machine learning (ML) in its applicant screening tools. Workday’s website claims its technology can “reduce time to hire by automatically dispositioning or moving candidates forward in the recruiting process.”

The central accusation in the lawsuit is that Workday’s AI system, not just the individual employers using it, engages in systemic discrimination against applicants based on race, age, and disability. This novel claim forced the court to confront a new legal question about where responsibility lies in the age of algorithmic hiring.

2. A New Legal Frontier: Can an AI Vendor Be an Employer’s “Agent”?

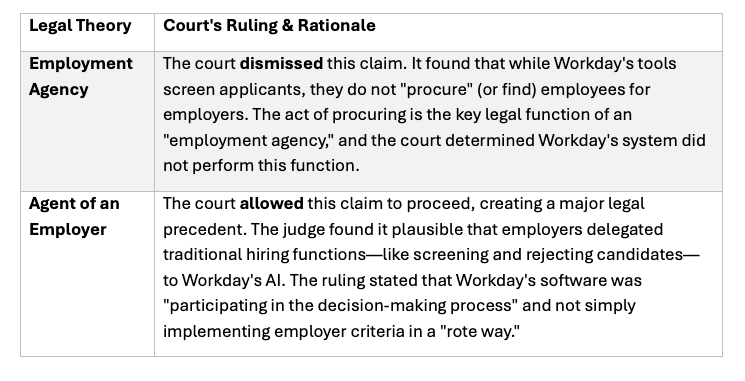

The most significant ruling in this case set a new precedent for AI vendor liability. The court had to decide whether Workday was merely a passive software provider or an active participant in the hiring process. It considered two key legal theories with very different outcomes: it rejected the claim that Workday is an “employment agency” but accepted that Workday could be liable as an “agent” of the employers that use its tools.

The court’s reasoning addresses a crucial gap in liability. For instance, if a software vendor intentionally designed a tool that screens out all applicants from historically Black colleges and universities—a fact unknown to the employer using it—then, without agency liability, no party could be held responsible for the intentional discrimination. This theory ensures that vendors “participating in the decision-making process” cannot operate in a legal vacuum.

This “agent” theory ruling is a game-changer. It opens the door for AI service providers, such as Workday, to be held directly liable for employment discrimination under federal laws, including Title VII of the Civil Rights Act, the Age Discrimination in Employment Act (ADEA), and the Americans with Disabilities Act (ADA). With Workday’s potential “agent” status established, the court then turned to the specific discrimination allegations at the heart of the lawsuit.

3. Understanding the Claims: “Disparate Impact” Moves Forward

In employment law, there are two main types of discrimination claims: intentional discrimination (“disparate treatment”) and “disparate impact.” The former requires proving discriminatory intent, while the latter focuses on discriminatory outcomes regardless of intent. The court’s decision on these two types of claims was a critical turning point in the case.

Why the Disparate Impact Claim Survived

The court allowed the disparate-impact claim to proceed because Mobley presented plausible allegations that Workday’s tools produced discriminatory results. The key evidence included:

The Volume of Rejections: Mobley alleged a “zero percent success rate” after applying for more than 100 jobs for which he was qualified.

The Common Denominator: The Workday platform was the constant factor across many different employers in various industries.

Automated Nature of Rejections: Mobley cited receiving a rejection notice at 1:50 a.m., less than an hour after submitting his application, which suggests an automated, non-human decision.

Why the Intentional Discrimination Claim Was Dismissed

The court dismissed the claim of intentional discrimination. To prevail, Mobley would have needed to present facts showing that Workday intended its tools to discriminate. The judge noted that being “merely aware of the adverse consequences” a policy might have on a protected group is insufficient to prove intent. The court left the door open for Mobley to reassert this claim if the discovery phase uncovers direct evidence of Workday’s discriminatory intent.

With the age discrimination claim allowed to proceed under a disparate-impact theory, the plaintiffs moved to expand the scope of the case.

4. What is a “Collective Action”?

A “collective action” is a type of federal lawsuit that allows individuals with similar legal claims to join together and have their case heard collectively. It is used for claims under statutes such as the Age Discrimination in Employment Act (ADEA). In a major development, the court granted preliminary certification of a nationwide collective action for the ADEA claim, exposing Workday to a potentially massive group of older applicants—a group Workday itself estimated could be in the “hundreds of millions.”

Key features of this collective action include:

Who is included: All individuals aged 40 and older who applied for jobs through Workday’s platform since September 24, 2020, and were “denied employment recommendations.”

How it works: This is an “opt-in” process. Eligible individuals will be notified and must affirmatively opt in to the lawsuit.

The Goal: To allow thousands, or potentially millions, of individuals with similar age discrimination claims against Workday’s AI to have those claims resolved in a single, efficient legal proceeding.

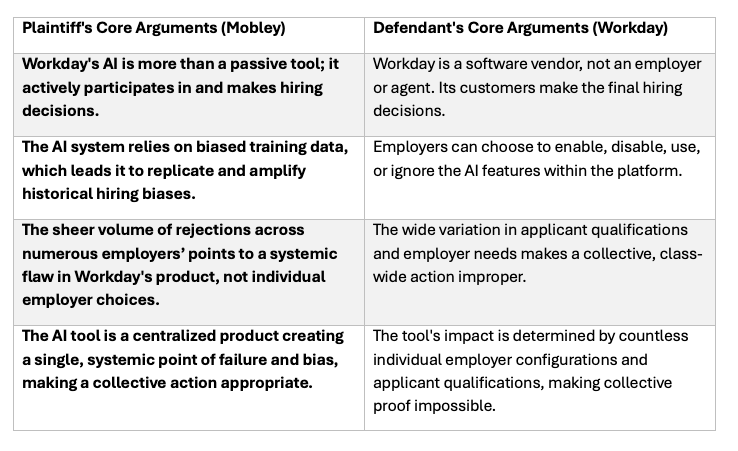

The formation of this massive potential collective brings the fundamental arguments of both the plaintiff and the defendant into sharp relief.

5. The Arguments: Plaintiff vs. Defendant

As the case proceeds, the core arguments from each side have come into sharp focus, highlighting the central debate over the role and responsibility of AI in hiring.

With the arguments defined, the case now hinges not on legal theory but on hard evidence—placing the battle over data access at the absolute center of the conflict.

6. What’s Next: The Pivotal Battle Over Discovery

With the court’s preliminary rulings settled, the lawsuit has entered the “discovery” phase—the formal process of gathering evidence. This is a make-or-break moment, as the plaintiffs must now find the data to statistically prove their claims.

What the Plaintiffs Are Seeking

Mobley and the other plaintiffs are requesting access to Workday’s internal data, including:

Demographic data of job applicants who used the platform.

The results of any internal audits Workday has conducted on its hiring tools for bias.

Technical details about how the AI algorithms are designed and operate.

Workday’s Position

Workday is resisting these requests, arguing that:

The information about its algorithms constitutes proprietary trade secrets.

The applicant data is the property of its customers.

The request is overly broad, burdensome, and unfairly prejudicial to its customers.

A complicating fact: according to court documents, Workday’s contracts include an exception allowing access to customer data when the company is subject to a court order.

The outcome of this discovery dispute is crucial. While Workday claims it cannot access customer data, court documents show its contracts include exceptions for court orders. How the court balances Workday’s trade secret claims against the plaintiffs’ need for evidence will likely determine the lawsuit’s future. Without this data, it will be extremely difficult for the plaintiffs to conduct the statistical analysis required to prove their disparate impact claim.
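To make the idea of a disparate impact analysis concrete, here is a minimal sketch of one common screening check: the “four-fifths” rule of thumb from the EEOC’s Uniform Guidelines, which compares each group’s selection rate to the highest group’s rate. This is an illustration only, not the method used by the court or by either party. The class and function names (`GroupOutcome`, `adverse_impact_ratios`, `flag_four_fifths`) and all of the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GroupOutcome:
    """Hypothetical screening counts for one applicant group."""
    name: str
    applicants: int
    advanced: int  # applicants moved forward by the screening tool

    @property
    def selection_rate(self) -> float:
        # Share of the group's applicants who were moved forward.
        return self.advanced / self.applicants if self.applicants else 0.0


def adverse_impact_ratios(groups):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(g.selection_rate for g in groups)
    if best == 0:
        raise ValueError("no group has any selections; ratio is undefined")
    return {g.name: g.selection_rate / best for g in groups}


def flag_four_fifths(groups, threshold=0.8):
    """Return groups whose ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(groups)
    return [name for name, ratio in ratios.items() if ratio < threshold]


# Made-up numbers for illustration only; not data from the case.
sample = [
    GroupOutcome("under_40", applicants=1000, advanced=300),
    GroupOutcome("40_and_over", applicants=1000, advanced=180),
]
ratios = adverse_impact_ratios(sample)
flagged = flag_four_fifths(sample)
print(ratios)
print(flagged)
```

In this made-up sample, the older group advances at 18% versus 30% for the younger group, a ratio of about 0.6, so it falls below the 0.8 threshold and is flagged. A flag like this is only a starting point: a real analysis would also test statistical significance and control for qualifications, which is why the underlying applicant data matters so much.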

This high-stakes battle over data transparency offers a preview of the due diligence and legal risks that every HR leader must now consider.

Key Takeaways for HR Leaders

Regardless of the final verdict, the Mobley v. Workday case has already reshaped the legal landscape for AI in HR. For any organization using or considering these tools, there are clear, immediate takeaways.

AI Vendor Liability is Now a Reality. The court’s “agent” theory is a watershed moment, signaling that employers and their AI vendors may share liability. This shared liability model necessitates a review of vendor contracts. HR leaders should work with legal to ensure that indemnification clauses and clear definitions of responsibility for algorithmic bias are explicitly addressed.

Scrutinize Your AI Tools. Relying on marketing claims of “bias-free” AI is no longer defensible. Due diligence must now include pointed questions inspired by the Mobley discovery battle:

Can you provide us with the results of your internal disparate impact audits?

On which datasets was this algorithm trained, and what steps were taken to ensure their representativeness?

What is your process if we, the customer, are subject to a discovery request for data your system holds?

Governance is Non-Negotiable. Implementing a clear, robust AI governance policy is essential. This policy should precisely define how and when AI tools are used in the hiring process, establish protocols for auditing their impact, and, most importantly, ensure meaningful human oversight, especially for final hiring decisions.

The era of treating AI screening tools as simple, liability-free software is over. The Mobley case is a powerful reminder that with great technological power comes great legal responsibility.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.
