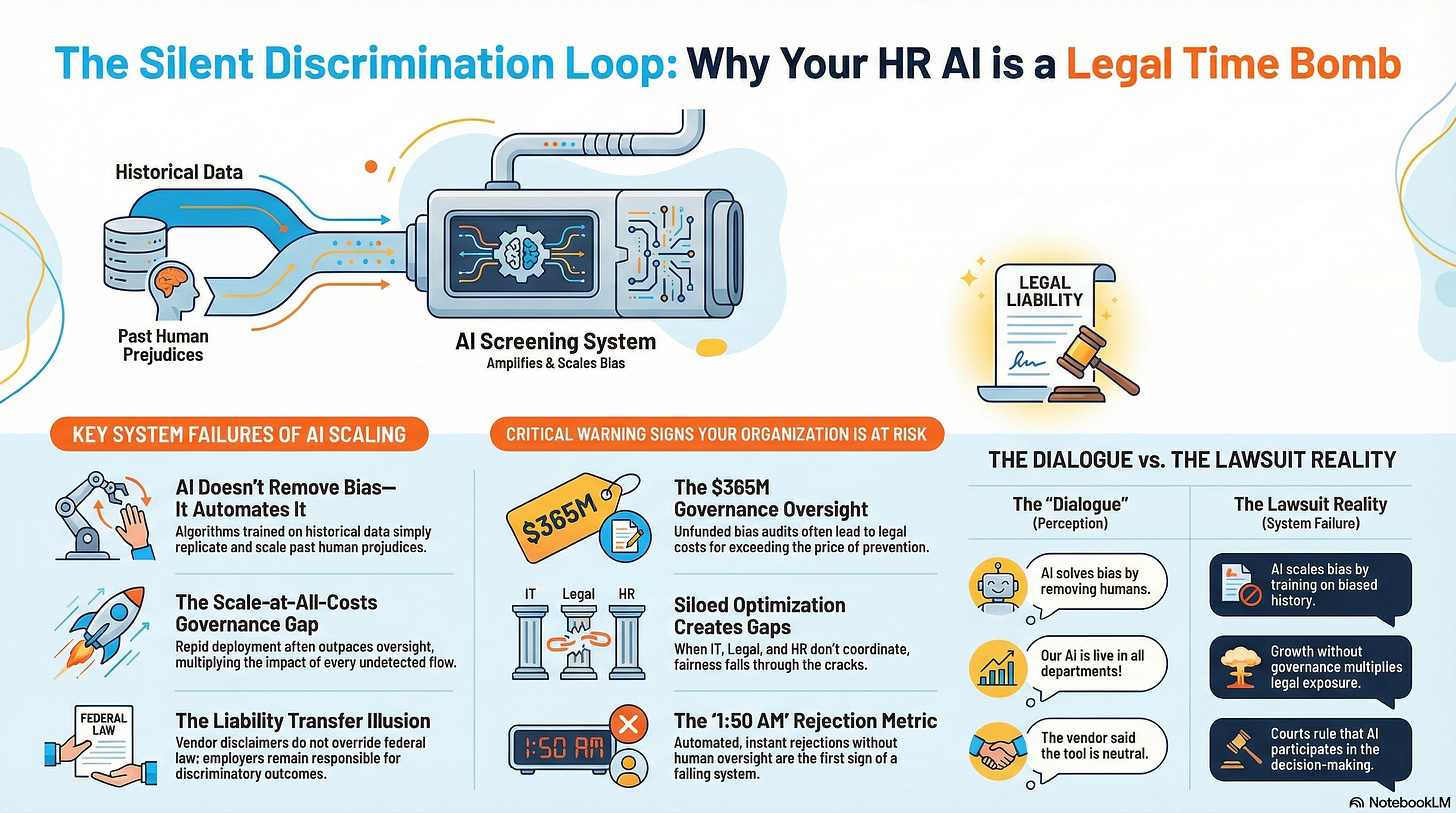

CHRO: Your AI Is Silently Discriminating

7 System Failures You Can't Afford to Ignore

The 1:50 AM Rejection

Derek Mobley applied for a job. At 1:50 AM, he received a rejection. He applied again, this time to a different company, and received another automated rejection in the middle of the night. This happened 100 times. Each rejection came from a different employer, yet they all used the same screening system.

No human ever saw his resume. People didn’t reject Mobley. A system erased him.

This story raises a critical question for any organization using AI: How do seemingly rational systems and good people cause such significant, scaled harm? The answer isn’t about blaming individuals; it’s about understanding the system that produces the outcome. The answer lies in systems thinking.

A brief disclaimer: The case referenced, Mobley v. Workday, is in active litigation. All information presented here is drawn from publicly available court filings and judicial orders.

“Most AI failures in HR don’t come from bad people. They come from blind spots, assumptions, and silence.”

The Critical Shift to Systems Thinking

Most leaders are trained to fix symptoms. We ask: “What’s broken? Who’s to blame?” This approach is insufficient for the complex challenges of AI. Systems thinking requires us to ask more profound questions: “Where did this behavior begin? What assumptions are embedded in this design?”

This shift is essential for Human Resources in the age of AI. HR has traditionally operated in functional silos—talent acquisition, compensation, and development. But AI doesn’t respect those boundaries. An algorithm trained on biased data in one business unit can influence hiring, promotions, and even layoffs in another. AI systems are interconnected, even when our teams are not. To lead responsibly, we must learn to see the whole system—the web of people, policy, data, and decisions. Systems thinking helps us become diagnosticians, not merely deliverers.

The 7 System Failures That Create Agentic Talent-Driven Bias

An analysis of the Mobley v. Workday lawsuit reveals seven recurring patterns, or “systems loops,” that allegedly led to systemic discrimination. Each pattern seems rational in isolation. When combined, they create a powerful engine for failure.

1. Fixes That Fail: The Quick-Fix Mentality

The Dialogue You Hear: This AI will solve our bias problem by taking humans out of the decision-making process.

What the Lawsuit Suggests: The pattern in the court documents points to a familiar premise: that AI could solve bias by removing flawed human judgment. The logic seems simple: remove the human, remove the bias. But the AI didn’t remove bias; it scaled it. According to the allegations, the algorithm was trained on historical hiring data that reflected decades of human bias. The AI learned these patterns and recommended candidates based on what “success” had looked like in the past. The lawsuit alleges that African American candidates over 40, like Derek Mobley, didn’t match that historical pattern and were automatically rejected. The “fix” for bias became a new mechanism for discrimination.

The Realization: AI doesn’t remove bias. It automates and amplifies the bias already embedded in your data and processes.

2. Limits to Growth: The Scale-at-All-Costs Rush

The Dialogue You Hear: We need this AI live in all departments by Q3 to stay competitive.

What the Lawsuit Suggests: Court filings show that the platform scaled to 11,000 organizations and processed 1.1 billion applications from 2016 to 2025. This represents breathtaking growth. However, the lawsuit alleges that governance did not scale at the same pace. The pattern of 1:50 AM rejections suggests that no one stopped to ask critical questions about oversight at such a massive volume. Speed won out over safety, and growth won out over governance.

The Realization: Speed creates complexity that outpaces oversight. Scale multiplies the impact of every undetected flaw.

3. Shifting the Burden: The Liability-Dodging Vendor

The Dialogue You Hear: Our AI is fair. Any issues come from your implementation.

What the Lawsuit Suggests: According to court documents, Workday positioned itself as a “neutral tool provider,” stating that its AI tools “do not make hiring decisions” and that customers retain “full control and human oversight.” However, Judge Rita Lin ruled that the AI was “not simply implementing in a rote way the criteria that employers set forth, but is instead participating in the decision-making process by recommending some candidates to move forward and rejecting others.” The vendor attempted to shift accountability to the employer, while the employer may have assumed the vendor had tested for bias. Both pointed fingers, and the discriminatory outcome persisted.

The Realization: Vendor disclaimers don’t override federal discrimination laws. You cannot avoid liability by pointing to the vendor.

4. Tragedy of the Commons: The Siloed Optimization

The Dialogue You Hear: HR’s fairness reviews are slowing our pipeline.

What the Lawsuit Suggests: The pattern suggests that each department optimized its own piece of the deployment. Procurement negotiated a good price. IT achieved a fast rollout. Legal reviewed contract disclaimers. Everyone succeeded in their siloed task. However, the allegations suggest that no single person or department owned the system-wide outcome of fairness for candidates. When responsibility is shared among multiple stakeholders, it can effectively belong to no one. Who caused the discrimination? Everyone and no one.

The Realization: When everyone optimizes for their silo, the system breaks down in the space between departments. Discrimination thrives in the gaps.

5. Success to the Successful: The Self-Reinforcing Algorithm

The Dialogue You Hear: The AI proves that my team is top-performing. We deserve more funding.

What the Lawsuit Suggests: The AI likely learned from historical hiring data, identifying patterns in who was hired, promoted, and deemed successful. It then recommended more candidates who resembled those past successes. This is how algorithms amplify existing inequality. The system validates past patterns, making them easier to repeat and making alternative patterns of success harder to see. Derek Mobley didn’t match the historical profile, not because of a lack of qualifications, but because the algorithm was trained to recognize “success” in a specific demographic pattern.

The Realization: Algorithmic validation of past patterns prevents new patterns from emerging, making inequality self-reinforcing.

6. Accidental Adversaries: The Uncoordinated Champions

The Dialogue You Hear: Why is Legal slowing down our AI deployment with all these bias-testing requirements?

What the Lawsuit Suggests: The failure pattern points to competing priorities among departments, all seeking a successful AI implementation. IT prioritized deployment speed. Legal prioritized liability protection. HR prioritized process efficiency. Their requirements conflicted, yet the allegations suggest no one coordinated a resolution. IT may have viewed Legal’s requirements as delays, while Legal may have viewed HR’s push for speed as reckless. The system launched with incomplete governance, born of these internal tensions.

The Realization: When teams don’t coordinate, governance fails in the gaps between competing priorities.

7. Growth and Underinvestment: The Unfunded Mandate

The Dialogue You Hear: We can’t afford to slow AI implementation and adoption with costly bias audits.

What the Lawsuit Suggests: Organizations invested heavily in AI licenses and implementation but allegedly failed to fund essential governance infrastructure, such as bias testing and ongoing monitoring. The result speaks for itself: 1.1 billion applications processed, a potential class of hundreds of millions of people, and an estimated liability of $365 million and counting. The cost of this unfunded governance far exceeded the cost of building it correctly from the start.

The Realization: You cannot afford NOT to test for bias. The lawsuit will cost more than the audit does.
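What a basic bias test looks like is easier to see in code. The sketch below computes selection rates by group and flags any group whose rate falls below four-fifths of the highest group's rate, a screening heuristic from the EEOC Uniform Guidelines. It is a minimal illustration, not a compliance tool and not anything drawn from the lawsuit: the function names, the group labels, and the sample counts are all hypothetical, and a real audit would add statistical testing and legal review.

```python
from collections import Counter

FOUR_FIFTHS_THRESHOLD = 0.8  # screening heuristic, not a legal determination


def selection_rates(outcomes):
    """Return {group: share of applicants advanced} from (group, advanced) pairs."""
    applied = Counter()
    advanced = Counter()
    for group, was_advanced in outcomes:
        applied[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / applied[group] for group in applied}


def adverse_impact_ratios(outcomes):
    """Compare each group's selection rate with the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        # Nobody advanced in any group, so the ratio is undefined.
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=FOUR_FIFTHS_THRESHOLD):
    """List groups whose ratio falls below the threshold and needs review."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Hypothetical screening log: 100 applicants per age band (made-up numbers).
sample = (
    [("under_40", True)] * 30 + [("under_40", False)] * 70
    + [("40_plus", True)] * 15 + [("40_plus", False)] * 85
)
print(adverse_impact_ratios(sample))
print(flagged_groups(sample))
```

A flag here is a prompt to investigate, not a verdict. The point is that the check is cheap to run on every release of the screening system, which is what funded governance buys.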

Let’s Recap: Is Your Organization Building Its Own Lawsuit?

AI bias is not a single error. It is an escalating problem created by interconnected, self-reinforcing loops. Feedback within the system amplifies small, undetected biases into larger failures over time.

The Efficiency Loop: More automation increases speed, reducing human review. Less review increases reliance on the algorithm, amplifying any underlying bias.

The Normalization Loop: Early bias goes undetected and becomes “how the system works.” This normalizes discrimination, making it an invisible part of organizational culture.

The Scale Loop: Successful deployment leads to expanded use, processing more applications. This affects more people and dramatically increases legal exposure.

This is how small flaws escalate into systemic failures.
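The arithmetic behind these loops can be sketched with a toy model. Every number below is invented for illustration and none comes from the court filings. The model assumes a fixed share of decisions is biased, that human review catches every biased decision it looks at, that application volume grows each round (the scale loop), and that the human review rate shrinks each round (the efficiency loop).

```python
def simulate_loops(rounds, applications=10_000, growth=1.5,
                   review_rate=0.5, review_decay=0.5, biased_share=0.02):
    """Toy model of the scale and efficiency loops.

    Returns, per round, the number of biased rejections that no human
    reviewed. All parameters are hypothetical defaults.
    """
    uncorrected = []
    for _ in range(rounds):
        biased = applications * biased_share
        # Only the unreviewed portion of biased decisions slips through.
        uncorrected.append(round(biased * (1 - review_rate)))
        applications *= growth       # scale loop: success expands usage
        review_rate *= review_decay  # efficiency loop: less human review
    return uncorrected


for round_number, count in enumerate(simulate_loops(4), start=1):
    print(f"Round {round_number}: {count} uncorrected biased rejections")
```

Even with the underlying bias rate held constant, the count of uncorrected harms rises every round, because volume and reliance on the algorithm compound each other.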

Ten Warning Signs to Watch For

Based on these system loops, here are ten warning signs that your organization may be on a similar path:

Leaders propose AI as a cure for bias without understanding what it will learn from your biased historical data. If your bias problem is in your data, AI will amplify it.

Aggressive deployment timelines don’t include dedicated phases for bias testing. Speed to deployment isn’t the same as speed to value.

Vendors claim their AI is “bias-free” without providing supporting documentation or audit results. Marketing claims are not evidence.

Contracts include vendor disclaimers about discriminatory outcomes. Legal disclaimers don’t shield you from liability.

Each department declares its part of the implementation a success, even as candidates are harmed. Your internal metrics are meaningless if the system discriminates.

No single person or role owns algorithmic fairness across the entire talent lifecycle. Distributed responsibility becomes no responsibility at all.

AI systems are trained exclusively on historical data without intervention to correct for past biases. Historical patterns include historical bias.

Inclusivity metrics decline after an AI system is deployed. The algorithm is telling you something. Listen.

IT, Legal, HR, and Compliance lack a shared governance framework for AI. Without coordination, competing priorities create governance gaps.

AI budgets fund software licenses but not the ongoing governance, testing, and monitoring required to use them safely. Unfunded governance is a lawsuit waiting to happen.

Your Mobley Moment: The 1:50 AM Question

Derek Mobley sat in the dark. Another rejection. No explanation. No recourse.

How many 1:50 AM rejections has your system issued in the last 24 months?

If you don’t know the answer, that’s your first risk.
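Answering that question is mostly a matter of having a decision log and querying it. The sketch below assumes a hypothetical log in which each record carries a timestamp, an outcome, and a flag for whether a human reviewed it. The field names, the business-hours window, and the sample records are all assumptions for illustration; your applicant tracking system will expose something different.

```python
from datetime import datetime, timedelta

# Hypothetical log schema: each record is a dict with
#   "decided_at" (datetime), "outcome" (str), "human_reviewed" (bool).


def is_off_hours(moment, start_hour=8, end_hour=18):
    """True when the timestamp falls on a weekend or outside business hours."""
    is_weekend = moment.weekday() >= 5
    return is_weekend or not (start_hour <= moment.hour < end_hour)


def unreviewed_off_hours_rejections(records, now, lookback_days=730):
    """Count rejections in the lookback window (roughly 24 months) that
    no human reviewed and that were issued outside business hours."""
    cutoff = now - timedelta(days=lookback_days)
    return sum(
        1
        for record in records
        if record["outcome"] == "rejected"
        and not record["human_reviewed"]
        and record["decided_at"] >= cutoff
        and is_off_hours(record["decided_at"])
    )


# Made-up records for illustration only.
now = datetime(2025, 6, 2, 9, 0)
log = [
    {"decided_at": datetime(2025, 5, 30, 1, 50), "outcome": "rejected", "human_reviewed": False},
    {"decided_at": datetime(2025, 5, 29, 14, 5), "outcome": "rejected", "human_reviewed": True},
    {"decided_at": datetime(2025, 5, 28, 2, 15), "outcome": "advanced", "human_reviewed": False},
    {"decided_at": datetime(2022, 1, 10, 1, 50), "outcome": "rejected", "human_reviewed": False},
]
print(unreviewed_off_hours_rejections(log, now))
```

If your system cannot produce the three fields this query needs, that gap is itself a governance finding.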

The patterns that lead to systemic failure are predictable and often visible to all. The problem is that no one sees the whole picture until it’s too late. The lawsuit arrives, and suddenly everyone is forced to connect the dots.

You have a choice. See the system now or see it in court later.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.

Ready to Build Real AI Governance?

Most HR leaders have AI policies. Few have AI governance systems. Join the waiting list for “When AI Breaks the Law” and get the tools to close that gap.
