Data Governance: Why the Past Is Quietly Designing Your Future Workforce

When AI systems are trained on historical hiring data, they learn whatever that data contains, including every bias, every exclusion, and every structural inequality that shaped who was hired before.

An algorithm trained on ten years of promotions in a male-dominated leadership pipeline learns, faithfully and precisely, that leadership looks male. An algorithm trained on resumes from a workforce that has historically excluded certain demographics learns that certain demographic signals predict poor performance. The machine is not making this up. It is telling you the truth about your past. And then you deploy it to select your future.

This article is an excerpt from “When AI Breaks the Law” by Margaret Spence, a forthcoming book for HR leaders on governing AI before it governs you.

Executive Summary: The Data Echo

This article explores the critical intersection of historical workforce data and algorithmic decision-making. It is organized into three distinct parts to provide a comprehensive roadmap for senior HR leaders:

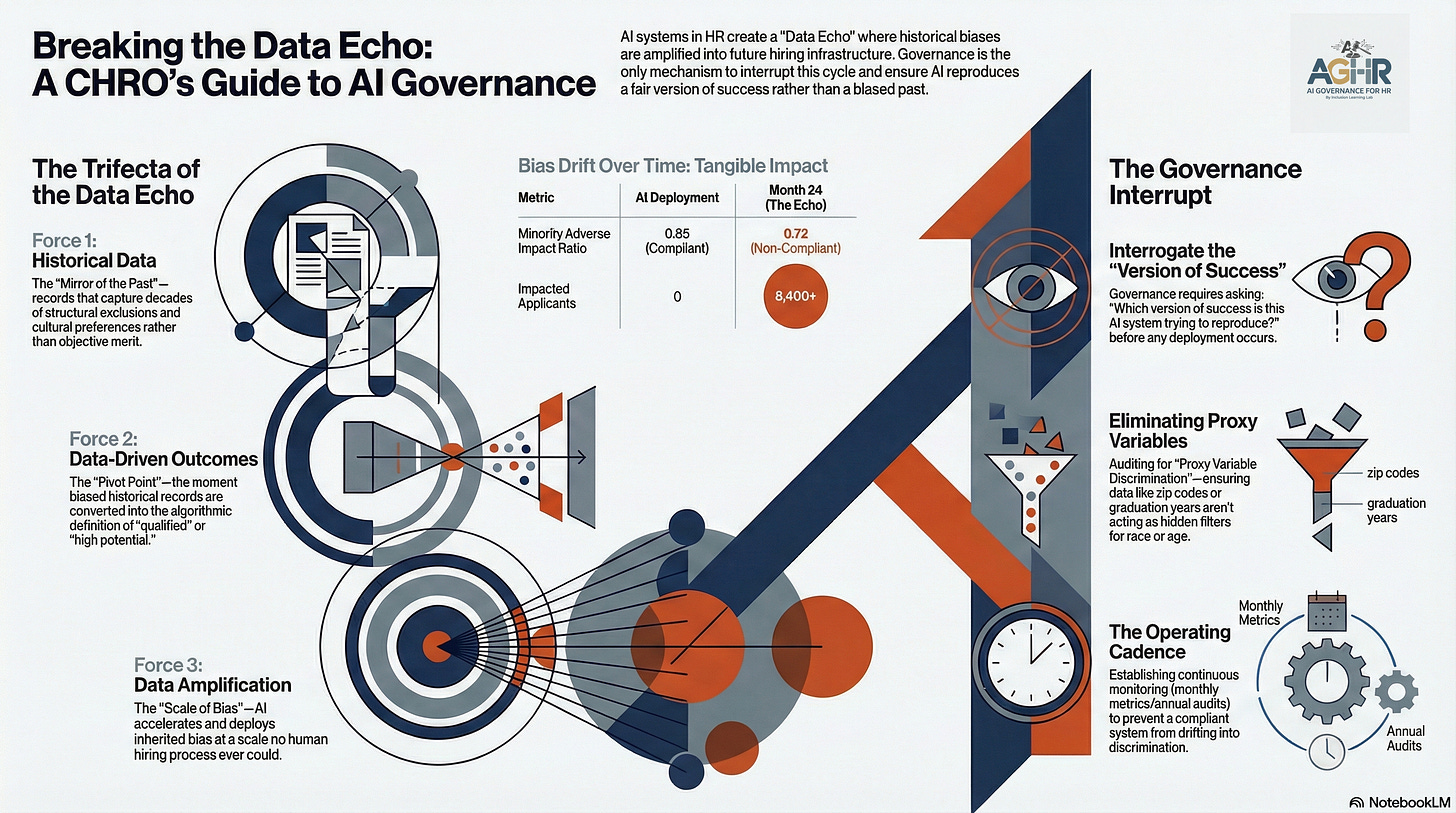

Part 1: The Convergence of Bias – Unpacks how historical data, data-driven outcomes, and data amplification create a self-reinforcing cycle of discrimination.

Part 2: Bias as Infrastructure – Examines the transition from local data issues to systemic infrastructure and the dangers of proxy variables.

Part 3: Moral Data Integrity – Defines the new standards for data integrity and establishes the governance mandate required to interrupt the “Data Echo.”

To further your learning, this article includes two exclusive add-ons:

Video lesson: Data Governance for HR – A walkthrough of the Data Echo process and the impact on establishing data governance protocols.

Audio podcast: Pitching AI Governance to Your Executive Team – A deep-dive discussion on identifying hidden bias in your talent lifecycle and securing C-Suite buy-in.

Part 1: The Data Trifecta: History, Outcomes, and Amplification

There is a specific kind of danger that arises when three forces converge within a single system. Individually, each has a name. Together, they produce something that most HR leaders have never been formally trained to recognize and that regulators are only beginning to understand well enough to legislate.

The first force is historical data: the records we accumulated over decades of hiring, promoting, and evaluating people. These records capture every assumption, every structural exclusion, and every cultural preference that shaped the organizations we built. They are not neutral archives. They are evidence of who we hired, who we didn’t, and what we told ourselves about why.

The second force is the data-driven outcome: the moment we feed that historical record into an AI system and ask it to predict future success. This is the pivot point. This is where the past stops being the past and becomes the algorithmic definition of the future. The system learns what “qualified” looks like, what “high potential” means, and what “culture fit” predicts. It learns all of this from data that was never designed to be a training set for anything — it was simply what happened, shaped by the world that existed at the time.

The third force is data amplification: the mechanism by which the AI doesn’t just reproduce the bias in the historical record but magnifies, accelerates, and deploys it at a scale no human hiring process ever could. A biased hiring manager affects hundreds of people over a career. A biased algorithm affects millions before anyone notices the pattern.

These three forces combine to produce what I call the Data Echo: a system in which historical discrimination becomes algorithmic instruction, algorithmic instruction becomes a hiring outcome, and that outcome becomes new data that reinforces the very discrimination it inherited. The Echo doesn’t fade. With each cycle, it grows clearer. Louder. More embedded in the infrastructure of who your organization becomes.

This is why data governance is not a technical concern. In HR, it is the foundational act of deciding what kind of future you are willing to build.

The Data Echo occurs when AI systems are trained on historical data that already contains discrimination or bias, causing the algorithm to learn and replicate those biases at scale. Your past hiring, promotion, or upskilling decisions become the blueprint for future ones, so if your top performers were selected through a biased process, the AI treats that bias as the definition of “qualified.” The algorithm does not question the data; it optimizes for the pattern embedded within it.

AI Data Integrity: The Problem With “Our Best People”

The phrase that launches almost every AI hiring implementation sounds reasonable. It sounds like quality control. “Let’s train it on our best people.”

It makes intuitive sense. You know who your high performers are. You have the data. You build a model that learns to recognize the patterns associated with those people and identify candidates who share those patterns. What could go wrong?

Everything — if your best people were selected by a system that was never fair to begin with.

This is the fundamental epistemological trap of historical HR data. The record of “success” is not an objective measure of human potential. It is a record of who was recognized as having potential within a system with particular cultural, structural, and often demographic preferences. When that recognition was systematically skewed — and in most organizations across most of history, it was — then the training data for your AI is not a record of merit. It is a record of the bias that was already there.

Consider what that means in practice. If your organization spent ten years promoting primarily men into leadership roles, your performance data for leaders is predominantly male. The algorithm learns what leadership looks like from that dataset. It learns patterns, communication styles, educational backgrounds, career trajectories, and perhaps even the language used in performance reviews, and it associates those patterns with success. Then it encounters a female candidate with equivalent capability and a different pattern of expression. The system does not recognize her as a match. Not because she is less qualified, but because the model has seen too few leaders like her to recognize her pattern as success.

No one programmed this. No one intended it. The data simply reflected the world as it was, and the algorithm faithfully reproduced it.
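One way to make this concrete before training on “our best people” is to check how the success label is distributed across groups in the historical record. The Python sketch below is only an illustration under stated assumptions: the field names `group` and `top_performer` and all of the records are hypothetical, not drawn from any real dataset.

```python
from collections import Counter


def label_rate_by_group(records, group_key="group", label_key="top_performer"):
    """Return the share of records carrying the success label within each group."""
    totals = Counter()
    positives = Counter()
    for row in records:
        totals[row[group_key]] += 1
        if row[label_key]:
            positives[row[group_key]] += 1
    return {group: positives[group] / totals[group] for group in totals}


# Hypothetical, made-up records for illustration only.
records = (
    [{"group": "men", "top_performer": True}] * 30
    + [{"group": "men", "top_performer": False}] * 70
    + [{"group": "women", "top_performer": True}] * 10
    + [{"group": "women", "top_performer": False}] * 90
)

rates = label_rate_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} labeled top performer")
```

A gap like this does not prove the label is biased. It is a prompt to ask how the label was assigned before a model is asked to treat it as the definition of “qualified.”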

Training Your AI: Algorithmic Data, the Mirror That Lies

Here is the deepest problem with historical data in AI training: it presents itself as objective. Numbers feel neutral. Datasets feel factual. Algorithmic outputs seem beyond the subjectivity of human judgment — which is, after all, why we turned to AI in the first place.

But a mirror doesn’t create what it reflects. It surfaces it. And when what it surfaces is decades of structural inequality, the mirror becomes more dangerous than a biased human decision-maker. It becomes a system that produces biased outcomes while appearing to do the opposite.

Research has documented what this looks like in practice with disturbing specificity. AI hiring tools have been shown to favor white-associated names 85% of the time and female-associated names only 11% of the time, not because anyone chose those preferences, but because the training data reflected a world where those preferences already existed and were treated as performance indicators. Algorithms trained on productivity metrics have been shown to systematically rate workers over 50 as lower performers, not because their output is lower, but because the historical data they were trained on reflects workplaces that consistently undervalued experienced employees and coded younger work patterns as higher value.

In the Mobley v. Workday case, the AI screening system learned what a “successful” candidate looked like from historical hiring outcomes. But success, in this context, was defined by who had been hired before. If those historical hires were disproportionately young, white, and male — as they were across much of the American corporate landscape for decades — then the algorithm learned, with perfect fidelity, to prefer candidates who resembled that history.

The system was not wrong about the data. The data was wrong about people. And nobody asked about the difference.

Part 2: Data Governance in HR: How Amplification Turns Bias Into Infrastructure

The transition from data bias to data amplification is the moment a local problem becomes systemic. It happens in a way that is almost impossible to detect without a governance structure specifically designed to identify it.

Here is the mechanism: an AI system is deployed with biased training data. It produces biased outcomes. Those outcomes (who gets hired, who gets promoted, who gets favorable performance reviews) become new data. That new data is fed back into the system, either directly as updated training data or indirectly through the cumulative record it builds of what “success” looks like in your organization. The system reinforces itself. With each cycle, the Echo grows stronger.
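The loop described above can be sketched as a toy simulation. This is a deliberately simplified illustration, not a model of any real hiring system. The function `simulate_echo` and its parameters `sharpness` and `retrain_weight` are assumptions made for this sketch: two equally qualified groups apply in equal numbers, the model over-weights whichever group dominates its training data, and each cycle's hires are blended back into that data.

```python
def simulate_echo(initial_share, cycles, sharpness=2.0, retrain_weight=0.5):
    """Track the majority group's share of the training data across retraining cycles.

    Toy assumptions (not from any real system):
    - applicants are split 50/50 between two equally qualified groups;
    - the model favors each group in proportion to (training share ** sharpness),
      so sharpness > 1 means it over-weights whatever it has seen most;
    - after each cycle, the new hires are blended into the training data.
    """
    share = initial_share
    history = [share]
    for _ in range(cycles):
        favored = share ** sharpness
        other = (1 - share) ** sharpness
        hire_share = favored / (favored + other)  # majority share of new hires
        share = (1 - retrain_weight) * share + retrain_weight * hire_share
        history.append(share)
    return history


history = simulate_echo(initial_share=0.60, cycles=6)
for cycle, share in enumerate(history):
    print(f"cycle {cycle}: majority share of training data = {share:.2f}")
```

Under these assumptions, a 60/40 starting imbalance widens with every cycle even though the applicant pool stays evenly split. Setting `sharpness=1.0` removes the over-weighting, and the imbalance then merely persists instead of growing, which is the difference between reproducing bias and amplifying it.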

A financial services institution documented this process precisely. Their AI resume screening system passed initial demographic tests. It was compliant at launch. Then, over twenty-four months, its outcomes drifted. The Adverse Impact Ratio for minority candidates (their selection rate divided by the selection rate of the most-selected group) declined from 0.85 at deployment to 0.72, falling below the 0.80 four-fifths benchmark that the EEOC treats as a signal of adverse impact. No one had changed the system; the system had kept learning. Labor market demographic shifts created new applicant patterns. The algorithm absorbed those patterns without oversight. Month by month, in increments too small to trigger an alarm, the Echo deepened.

By the time anyone looked, 8,400 minority applicants had been affected. The system that had passed every initial check had learned to discriminate, while leadership assumed that initial compliance meant permanent fairness.
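For readers who want to see the arithmetic behind an Adverse Impact Ratio, here is a minimal Python sketch. The ratio is one group's selection rate divided by the selection rate of the reference group, and 0.80 is the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures. The applicant counts below are hypothetical, chosen only so the ratios come out at the 0.85 and 0.72 described above. They are not the institution's actual figures.

```python
FOUR_FIFTHS_THRESHOLD = 0.80  # rule-of-thumb benchmark, not a complete legal test


def selection_rate(selected, applicants):
    """Share of applicants who were selected."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants


def adverse_impact_ratio(group_selected, group_applicants,
                         reference_selected, reference_applicants):
    """Selection rate of one group divided by that of the reference group."""
    reference_rate = selection_rate(reference_selected, reference_applicants)
    if reference_rate == 0:
        raise ValueError("reference group has no selections")
    return selection_rate(group_selected, group_applicants) / reference_rate


def passes_four_fifths(ratio, threshold=FOUR_FIFTHS_THRESHOLD):
    """True when the ratio is at or above the four-fifths benchmark."""
    return ratio >= threshold


# Hypothetical counts: the reference group is selected at 20% in both periods.
air_at_launch = adverse_impact_ratio(170, 1000, 200, 1000)
air_later = adverse_impact_ratio(144, 1000, 200, 1000)

print(f"At launch: {air_at_launch:.2f}, passes: {passes_four_fifths(air_at_launch)}")
print(f"Later:     {air_later:.2f}, passes: {passes_four_fifths(air_later)}")
```

Even a ratio above 0.80 deserves attention when it is trending downward, which is why a single launch-day check is not enough.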

This is data amplification in its most complete form. It is not a deployment event. It is a continuous process. Unless an organization has a governance structure with the rhythm to detect it — monthly metrics, quarterly reviews, annual audits — it will proceed invisibly until a lawsuit or a regulatory audit makes the pattern visible.
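The monthly-metrics layer of that rhythm can be sketched in a few lines of Python. This is an illustrative assumption, not a prescribed protocol: the function `review_air_trend`, the 0.03 warning margin, and the monthly values are all hypothetical. The idea is simply to raise a warning while the ratio is still above 0.80 but approaching it, so drift is caught before it becomes a breach.

```python
def review_air_trend(monthly_air, threshold=0.80, warning_margin=0.03):
    """Classify each month's Adverse Impact Ratio as 'ok', 'warning', or 'breach'."""
    findings = []
    for month, air in enumerate(monthly_air, start=1):
        if air < threshold:
            status = "breach"      # below the four-fifths benchmark
        elif air < threshold + warning_margin:
            status = "warning"     # still above, but close enough to review now
        else:
            status = "ok"
        findings.append((month, air, status))
    return findings


# Hypothetical monthly values showing slow downward drift.
monthly_air = [0.85, 0.84, 0.82, 0.81, 0.79, 0.76]

findings = review_air_trend(monthly_air)
for month, air, status in findings:
    print(f"month {month}: AIR {air:.2f} -> {status}")

first_warning = next(month for month, _, status in findings if status != "ok")
```

In this made-up series, the first warning arrives two months before the first breach. That lead time is what a monitoring cadence buys.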