Inside the AI Black Box: How to Get Transparency from HR AI Tools

(Even When Vendors Say No)

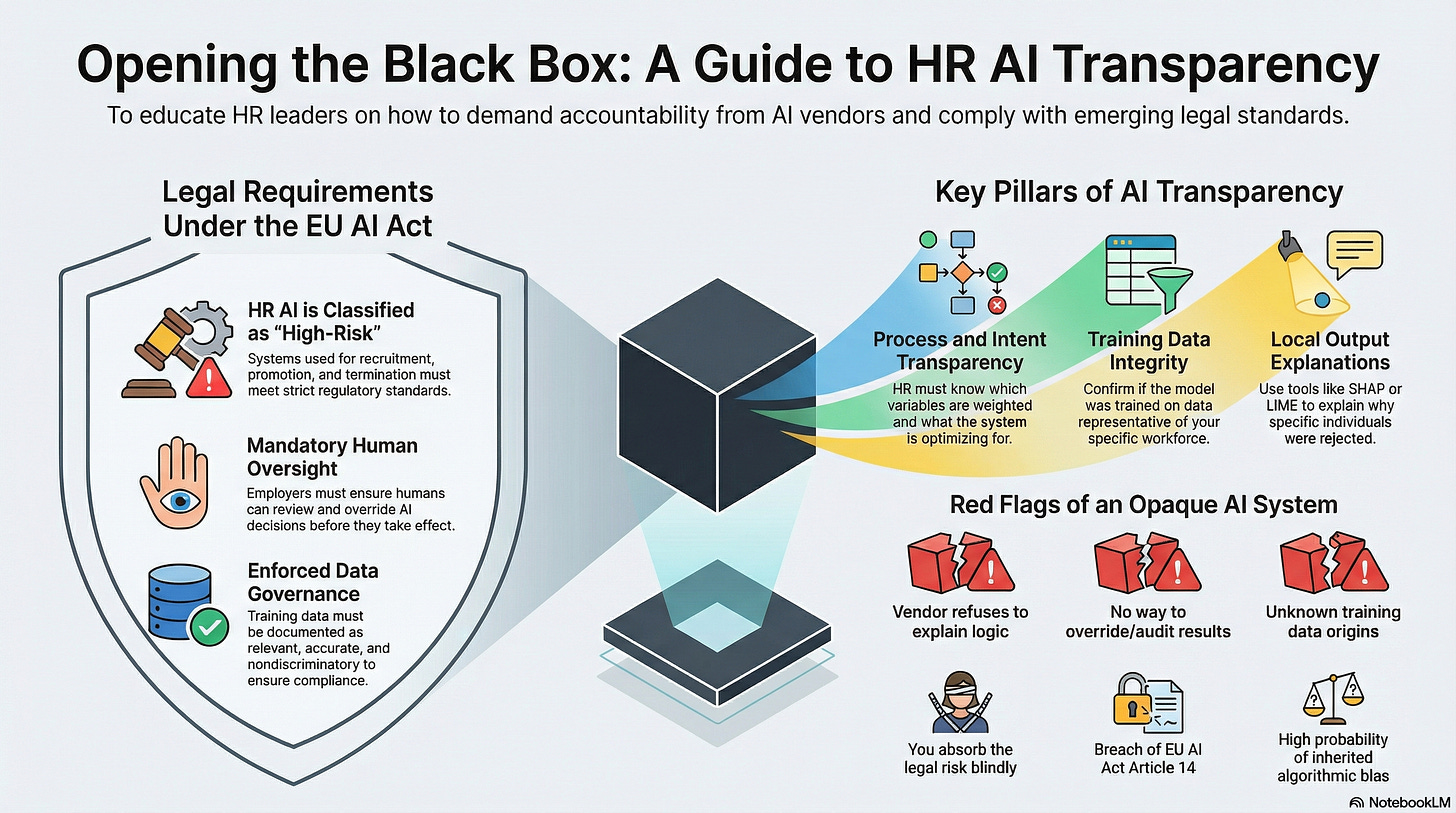

Many AI systems used in HR operate behind a curtain. They’re marketed as faster, smarter, and more efficient, but when something goes wrong, no one can explain why. In an era of new regulations like the EU AI Act, that’s no longer acceptable.

This article shows HR leaders how to demand transparency, challenge “black box” excuses, and stay accountable — even when the tech gets complex.

1. What’s in the Black Box — and Why HR Needs to Open It

The AI flagged a candidate as a “low fit.”

A recruiter asked why. The system offered no explanation.

The hiring manager shrugged and moved on. So did everyone else.

Weeks later, Legal received a call. The rejected candidate had a profile nearly identical to those of others who were hired: same school, same job title, same years of experience. The only difference was age.

No one could explain why the system made its choice, because no one had ever asked how it worked in the first place.

This is the Black Box Problem: AI systems that make decisions or recommendations without providing clarity, logic, or accountability.

In finance, auditors won’t approve numbers they can’t trace. In medicine, treatments require informed consent.

But in HR? People are being hired, rejected, promoted, or sidelined by AI without any clear explanation.

If you can’t explain a decision, you probably shouldn’t be making it.

Transparency in AI is the difference between a doctor saying “you need surgery” and a doctor showing you the X-ray and explaining exactly why.

2. Transparency Isn’t a Nice-to-Have — It’s the Law

In most HR teams, “transparency” is a cultural value. When it comes to AI systems, it’s a legal requirement.

The EU AI Act, the world’s first comprehensive AI regulation, explicitly classifies AI used for recruitment, promotion, termination, and workforce evaluation as high-risk (Article 6 and Annex III). High-risk systems are subject to specific requirements:

Article 13: Transparency

HR must understand and be able to explain how the system works, what it optimizes for, and what data it uses.

Article 14: Human Oversight

Employers must ensure that humans can review and override AI decisions before those decisions take effect.

Article 10: Data Governance

You must ensure that training data is relevant, accurate, and nondiscriminatory — and be able to document this.

These requirements apply even if:

You bought the system from a third-party vendor

The AI was embedded in a broader platform

Your company is not based in the EU but operates in EU markets or hires EU-based workers

If your team is using AI in hiring or talent management — and can’t explain how it works — you’re not just ethically exposed. You’re legally exposed.

3. Four Levels of Transparency HR Should Demand

You don’t need to be technical to demand transparency. You just need to know which questions to ask — and what answers are acceptable.

Here are the four levels of transparency that every HR leader should push for:

1. Output Transparency

What did the AI recommend — and for whom?

What scores, rankings, or decisions did the system produce?

Who saw those results?

Were the recommendations used, changed, or overridden?

If you can’t access or audit these outputs, you have no way to defend them.
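For teams that want to see what an auditable output trail could look like, here is a minimal Python sketch. The `DecisionRecord` schema, its field names, and the sample records are all hypothetical illustrations, not any vendor’s actual export format. The point is only that each of the three questions above maps to a field you can query.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DecisionRecord:
    """One AI output plus what humans did with it (hypothetical schema)."""
    candidate_id: str
    ai_output: str                       # what the system recommended, e.g. "low fit"
    ai_score: float                      # the score behind the recommendation
    viewed_by: list = field(default_factory=list)   # who saw the result
    final_decision: Optional[str] = None            # what was actually decided
    decided_by: Optional[str] = None                # who made the final call

    @property
    def status(self) -> str:
        """Was the recommendation used, overridden, or not yet reviewed?"""
        if self.final_decision is None:
            return "pending"
        return "used" if self.final_decision == self.ai_output else "overridden"


def override_rate(records) -> float:
    """Share of reviewed records where a human changed the AI output."""
    reviewed = [r for r in records if r.status != "pending"]
    if not reviewed:
        return 0.0
    return sum(r.status == "overridden" for r in reviewed) / len(reviewed)


# Made-up sample data for illustration only.
records = [
    DecisionRecord("c-001", "low fit", 0.31, ["recruiter_a"], "low fit", "recruiter_a"),
    DecisionRecord("c-002", "low fit", 0.35, ["recruiter_a", "manager_b"], "advance", "manager_b"),
    DecisionRecord("c-003", "high fit", 0.88),
]

print([r.status for r in records])   # ['used', 'overridden', 'pending']
print(override_rate(records))        # 0.5
```

An override rate near zero is worth a second look: it may mean reviewers are accepting the system’s output without really examining it.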

2. Process Transparency

How did the system reach that result?

What variables were used (e.g. job history, skill keywords, tenure)?

Were any sensitive or proxy variables excluded (e.g. ZIP code, GPA)?

How are those inputs weighted?

You don’t need source code — you need to understand logic and influence.
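As a rough illustration of “logic and influence,” the sketch below assumes a vendor has disclosed a simple list of inputs and weights. The input names, the weights, and the proxy watchlist are invented for this example, and real systems are rarely this simple. Weights are also only comparable when inputs are on similar scales. Even so, a disclosure in roughly this shape is enough to ask pointed questions.

```python
# Hypothetical vendor disclosure: input name -> weight in the scoring model.
disclosed_weights = {
    "years_experience": 0.40,
    "skill_keyword_match": 0.35,
    "tenure_last_role": 0.15,
    "zip_code": 0.10,
}

# Inputs your organization treats as sensitive or as likely proxies (an assumption).
proxy_watchlist = {"zip_code", "gpa", "graduation_year"}


def influence_shares(weights):
    """Normalize absolute weights so the shares of influence sum to 1."""
    total = sum(abs(w) for w in weights.values())
    return {name: abs(w) / total for name, w in weights.items()}


def flagged_inputs(weights, watchlist):
    """Return disclosed inputs that also appear on the proxy watchlist."""
    return sorted(set(weights) & watchlist)


shares = influence_shares(disclosed_weights)
for name, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name:22s} {share:.0%}")

print("Needs justification:", flagged_inputs(disclosed_weights, proxy_watchlist))
```

Here the ZIP code input would be flagged, which is the cue to ask the vendor why it is in the model at all.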

3. Intent Transparency

What is the system trying to optimize?

Is the model built to prioritize speed, cost, retention, or engagement?

Who set those goals — and are they aligned with your people strategy?

If you don’t know what the system optimizes for, you don’t know what trade-offs it’s making.

4. Training Data Transparency

What historical data trained the system — and does it reflect your workforce?

Was the model trained on global datasets, or on data from companies nothing like yours?

How recent and representative is the data?

What job levels, geographies, or demographics are reflected?

This is where bias creeps in — and where your accountability begins.

If you inherit a model trained on biased data, you’re deploying those patterns — whether you intended to or not.
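One simple way to test whether training data “reflects your workforce” is to compare how each group is represented in both. The Python sketch below does that for a single attribute. The age bands, the counts, and the 10-point threshold are made-up assumptions for illustration, not a legal or statistical standard.

```python
from collections import Counter


def category_shares(values):
    """Fraction of records that fall in each category."""
    counts = Counter(values)
    total = sum(counts.values())
    return {k: c / total for k, c in counts.items()}


def representation_gaps(training, workforce, threshold=0.10):
    """Categories whose share differs by more than `threshold` between the two sets.

    Positive numbers mean the group is over-represented in the training data.
    """
    t, w = category_shares(training), category_shares(workforce)
    gaps = {}
    for category in sorted(set(t) | set(w)):
        diff = t.get(category, 0.0) - w.get(category, 0.0)
        if abs(diff) > threshold:
            gaps[category] = round(diff, 2)
    return gaps


# Hypothetical data: age band of each record in the training set and in your workforce.
training_ages = ["under_40"] * 85 + ["40_plus"] * 15
workforce_ages = ["under_40"] * 55 + ["40_plus"] * 45

print(representation_gaps(training_ages, workforce_ages))
# {'40_plus': -0.3, 'under_40': 0.3}
```

In this invented case, workers aged 40 and over make up 45% of the workforce but only 15% of the training data, which is exactly the kind of gap to raise with a vendor.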

4. When the Vendor Says “We Can’t Explain It.”

Many vendors say their AI system is too complex or proprietary to explain. That’s not a dead end — it’s a negotiation point.

Here’s what to do:

Push for Model Documentation

You’re not requesting intellectual property. You’re requesting a high-level summary — often called a “model card” — that outlines:

What inputs are used

What the system is designed to do

What limitations or risks are known

Whether human review is expected

Responsible vendors should be prepared to provide this.
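To make the request concrete, the four items above can be treated as required fields and checked when a vendor responds. The field names and the sample card in this Python sketch are hypothetical; there is no single mandated model card format assumed here.

```python
# The four items from the list above, expressed as required fields (names are illustrative).
REQUIRED_FIELDS = (
    "inputs",
    "intended_use",
    "known_limitations",
    "human_review_expected",
)


def missing_fields(model_card):
    """List required fields that are absent or left empty in a vendor's model card."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = model_card.get(name)
        if value is None or value == "" or value == []:
            missing.append(name)
    return missing


# Hypothetical vendor response with two gaps.
vendor_card = {
    "inputs": ["job history", "skill keywords", "tenure"],
    "intended_use": "Rank applicants for recruiter review",
    "known_limitations": [],
    # "human_review_expected" was not supplied
}

print(missing_fields(vendor_card))
# ['known_limitations', 'human_review_expected']
```

An empty “known limitations” section is itself a finding: every model has limitations, so a blank answer usually means no one has documented them.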

Ask for Local Output Explanations

Even if the model is complex (like a deep learning system), there are tools that can explain individual decisions.

Two examples:

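SHAP (SHapley Additive exPlanations): Shows how much each input (like experience or location) pushed a specific decision up or down

LIME (Local Interpretable Model-agnostic Explanations): Fits a simple, understandable model that approximates the system’s behavior around one specific case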

These are part of a practice called local interpretability, which helps you explain why a single decision was made, even if the overall system is complex.

If you can’t explain why a candidate was rejected or why a promotion was skipped, you may be out of compliance.
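To show what a local explanation looks like, the sketch below computes exact Shapley values, the idea SHAP is built on, for a tiny made-up scoring function. It does not use the SHAP library, and `toy_score`, its inputs, and the hidden penalty are all hypothetical. Real tools approximate this calculation because checking every combination of inputs becomes impractical for large models.

```python
from itertools import combinations
from math import factorial


def toy_score(features):
    """Stand-in for a vendor model (hypothetical): higher means better fit."""
    score = 10 * features["years_experience"] + 5 * features["skill_matches"]
    if features["years_since_graduation"] > 25:
        score -= 40   # a hidden penalty that acts as a proxy for age
    return score


def shapley_values(model, candidate, baseline):
    """Split model(candidate) - model(baseline) across inputs using exact Shapley values."""
    names = list(candidate)
    n = len(names)

    def value(subset):
        # Inputs in `subset` take the candidate's values; the rest stay at baseline.
        mixed = {k: candidate[k] if k in subset else baseline[k] for k in names}
        return model(mixed)

    result = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                without = set(subset)
                total += weight * (value(without | {name}) - value(without))
        result[name] = total
    return result


# Hypothetical "typical hire" baseline and the candidate being explained.
baseline = {"years_experience": 5, "skill_matches": 4, "years_since_graduation": 10}
candidate = {"years_experience": 8, "skill_matches": 4, "years_since_graduation": 30}

contributions = shapley_values(toy_score, candidate, baseline)
for name, amount in contributions.items():
    print(f"{name:24s} {amount:+.1f}")
# years_experience         +30.0
# skill_matches            +0.0
# years_since_graduation   -40.0
```

In this toy case the explanation shows that extra experience helped the candidate, while time since graduation cost more than the experience gained. That is the kind of answer a recruiter should be able to get for any individual decision.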

Shift Accountability in the Contract

If a vendor won’t provide transparency, you need to protect yourself through contract.

Add an indemnification clause for automated decisions

Secure the right to audit data and decision logs

Include a termination clause if transparency thresholds are not met

Vendors that refuse to be transparent aren’t protecting trade secrets — they’re offloading risk onto you.

5. Red Flags: Signals That Your HR AI Tool Is Too Opaque

Watch for these signs that the system you’re using is dangerously unaccountable:

You don’t know what decisions the system is making

The vendor refuses to explain how the results are produced

There is no way to override or audit the outputs

No one can describe what data the model was trained on

HR can’t explain the outcome to the person it affects

If any of these are true, you’re not managing a tool — you’re absorbing its risk blindly.

6. Questions You Should Be Able to Answer (Even If You Didn’t Build It)

You may not be the engineer, but you’re still accountable.

Here’s what every HR leader should be able to answer regarding the AI systems they rely on:

What decisions or recommendations does the AI influence?

What inputs are used in those decisions?

What is the model trying to optimize?

What kind of data was it trained on — and how similar is that data to your workforce?

Can you explain an individual outcome to a candidate or an employee?

If the answer to any of these is “I’m not sure,” you have a governance gap.

AI Governance Rule: If You Can’t Explain It, You Can’t Defend It

Transparency isn’t a bonus feature. It’s the foundation of ethical, legal, and strategic HR in the AI era.

HR leaders don’t need to become data scientists, but they do need to ask tough questions and insist on clear answers. The risk isn’t just bias or error. It’s being responsible for outcomes you don’t understand and can’t control.

You don’t have to fear AI (yet), but you do have to open the black box — and decide whether what’s inside is right for your people, your values, and your accountability.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.
