Is Your HR AI Tech Stack a Legal Risk?

Red Flags to Watch For in AI Vendor Contracts

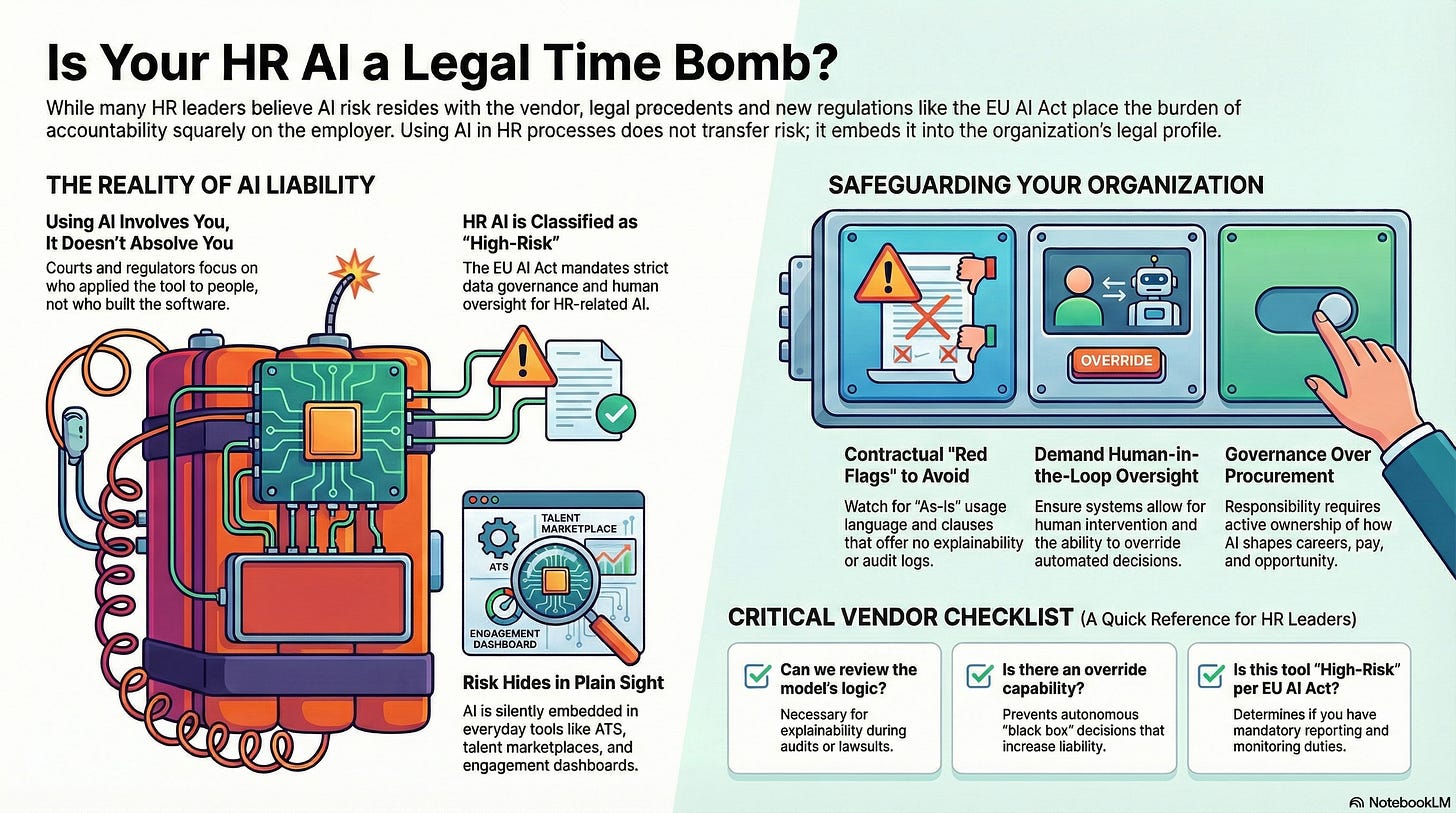

Many HR leaders assume AI risk lives with the vendor. That assumption is not just wrong; it’s legally dangerous.

The Compliance Illusion: Why “We Bought It” Isn’t a Legal Shield

The HR team thought they had done everything right. A well-known vendor. A signed contract. A product marketed as “enterprise-ready” and “legally compliant.” The AI tool automatically screened candidates, ranked them efficiently, and quietly rejected thousands without ever bothering a recruiter.

Then the lawsuit arrived.

In Mobley v. Workday, a job applicant alleged that an AI-powered screening system systematically rejected him because of protected characteristics. The case didn’t hinge on whether the AI was intentionally discriminatory. Instead, it focused on something more uncomfortable: who is responsible when an automated system makes an employment decision.

The vendor didn’t make the hire.

The vendor didn’t reject the candidate.

But the system did.

This is where many HR leaders feel falsely secure. There’s a belief that buying a third-party platform transfers risk along with functionality. That belief is mistaken. Courts and regulators are increasingly focused on use, not ownership.

Even Employment Practices Liability Insurance (EPLI) offers limited comfort. Many EPLI policies were written before AI-driven decision-making became operational. Some now exclude claims related to automation, while others require proof of human oversight that HR teams cannot produce after the fact.

The uncomfortable truth: when AI touches hiring, promotion, performance, or termination, HR is no longer shielded by procurement paperwork or insurance policies.

The compliance illusion is the belief that someone else is bearing the risk. In reality, it’s already sitting in your HR tech stack.

How AI Liability Is Shifting Toward HR

Q: Isn’t AI risk something for Legal or IT to manage? Why is HR now exposed to it?

Because AI in HR isn’t theoretical anymore — it’s operational.

AI tools are now embedded in workflows that affect hiring, internal mobility, promotions, compensation, and even layoffs. When things go wrong, it’s not the engineers who are named in lawsuits — it’s the employers.

Here’s the shift: The more AI influences employment decisions, the more HR becomes the de facto decision-maker — even when no human actually made the call.

This is what’s unfolding in Mobley v. Workday, where the platform’s algorithm allegedly filtered out candidates based on race, age, and disability. Although the vendor is the named defendant in that case, employers remain responsible for how they use such tools. That’s the legal trend. Regulators care less about who built it and more about who applied it to people.

The EU AI Act reinforces this shift by classifying HR-related AI as “high-risk” and placing compliance obligations directly on deployers (the organizations using the system), not just on vendors.

And don’t assume your EPLI policy will save you. Many policies don’t cover claims tied to algorithmic decisions — especially when no human review occurred.

In short: using AI doesn’t absolve you — it involves you.

Where AI Is Hiding in Your HR Stack

Q: We haven’t rolled out any major AI tools in HR. Are we still at risk?

Very likely — yes.

The most legally risky AI in your HR function is probably the one you don’t even know is there.

AI is now quietly embedded in everyday platforms: your ATS, HCM, internal mobility tool, learning system, and performance app. Here’s where it hides:

Applicant Tracking Systems: Resume ranking tools that silently suppress “low fit” candidates.

Workforce Planning Tools: AI-driven headcount optimizers that auto-flag teams for reduction.

Talent Marketplaces: Matching algorithms that influence who gets promoted — or overlooked.

Learning Platforms: Content selectors that reinforce historical paths, blocking non-traditional growth.

Engagement Dashboards: Retention scoring systems that may misclassify high performers as flight risks.

The danger isn’t always in what the AI does; it’s in the absence of visibility, auditability, and accountability.

If your team doesn’t know what the system optimizes for or how to override it, then it’s not governed — it’s just outsourced risk.
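One way to make that test concrete is a simple inventory of every HR tool, recording whether it uses AI, what it optimizes for, and whether your team knows how to override it. The sketch below is illustrative only: the `HRSystem` fields, the `ungoverned` helper, and the sample entries are assumptions made for this example, not a standard schema or any vendor's data model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HRSystem:
    """One entry in a hypothetical inventory of HR tools."""
    name: str
    category: str                        # e.g. "ATS", "Learning Platform"
    uses_ai: bool
    optimizes_for: Optional[str] = None  # None means nobody on the team knows
    override_documented: bool = False    # is there a known way to override it?


def ungoverned(systems: List[HRSystem]) -> List[str]:
    """Names of AI-enabled systems with an unknown objective or no override path."""
    return [
        s.name
        for s in systems
        if s.uses_ai and (s.optimizes_for is None or not s.override_documented)
    ]


# Made-up sample entries for illustration.
inventory = [
    HRSystem("Resume ranker", "ATS", True, "similarity to past hires", True),
    HRSystem("Headcount optimizer", "Workforce Planning", True),
    HRSystem("Payroll export", "HCM", False),
]
print(ungoverned(inventory))
```

Anything this check returns is a tool your team is using without knowing its objective or its off switch, which is where a vendor conversation should start.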

AI Vendor Contract Red Flags: What Most HR Vendors Don’t Tell You

Q: We’ve signed standard contracts with our vendors. Isn’t that enough?

Standard contracts are exactly the problem.

Most HR tech agreements were not written with AI governance in mind, and many silently shift risk to the buyer. Watch for these six red flags:

1. “As-Is” Use Language

You take full responsibility for outcomes, even if flawed or discriminatory.

2. No Explainability Guarantee

If you can’t explain a decision to an employee or an auditor, that’s a legal vulnerability.

3. “Appropriate Use” Clauses

If the contract says you’re responsible for “appropriate use,” guess who regulators will hold accountable?

4. No Human-in-the-Loop Requirements

Tools that operate autonomously without human checkpoints conflict with the EU AI Act’s human oversight requirements for high-risk systems.

5. Silent AI Clauses

AI may be baked into general terms without being labeled as such. You may be using AI without realizing it.

6. No Audit Logs or Traceability

If you can’t reconstruct how a decision was made, you can’t respond to audits or legal challenges.

Bottom line: If your contract doesn’t provide visibility and override capabilities, it’s not just a tech risk — it’s a leadership risk.
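To picture what "traceability" could mean in practice, here is a minimal sketch of a per-decision audit record and a check for gaps in it. The field list, the function names, and the sample values are assumptions chosen for illustration; they are not drawn from any regulation or vendor product, and a real log would need legal review.

```python
import json
from datetime import datetime, timezone

# Hypothetical minimum set of fields needed to reconstruct a decision later.
REQUIRED_FIELDS = (
    "timestamp", "subject_id", "tool", "model_version",
    "inputs", "output", "reviewed_by",
)


def make_audit_record(subject_id, tool, model_version, inputs, output, reviewed_by=None):
    """Build one audit entry for a single AI-influenced decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "subject_id": subject_id,
        "tool": tool,
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
        "reviewed_by": reviewed_by,
    }


def missing_fields(record):
    """List required fields that are absent or empty in a record."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "", {})]


# Made-up example: an automated rejection that no human reviewed.
record = make_audit_record(
    subject_id="cand-001",
    tool="resume-ranker",
    model_version="2024.06",
    inputs={"features_used": ["tenure", "skills_match"]},
    output="rejected",
)
print(json.dumps(record, indent=2))
print(missing_fields(record))
```

If a vendor cannot export something at least this detailed, the gap shows up immediately: here the missing `reviewed_by` value is exactly the proof of human oversight that is hard to produce after the fact.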

The EU AI Act: What High-Risk Means for HR Buyers

The EU AI Act is not theoretical — it’s enforceable and imposes direct obligations on organizations using AI in HR.

HR Use Cases = “High-Risk AI”

Per Article 6 and Annex III, AI used for hiring, promotion, termination, or evaluation is classified as high-risk. If you’re using AI to make or influence employment decisions, you’re already within the law’s scope.

Your Core Obligations

Article 10 — Data Governance: You’re responsible for knowing the origin of training data and whether it’s biased.

Article 13 — Transparency: You must be able to explain decisions to affected individuals.

Article 14 — Human Oversight: There must be meaningful human intervention.

Article 72 — Post-Market Monitoring: You need audit trails and reporting structures to track system behavior over time.

Even if your vendor is based outside the EU, you are liable for compliance if you use their AI tools on candidates or employees in the EU.

This is not optional — it’s operational risk management.

Top 10 Questions Every HR Leader Should Ask Their AI Tech Vendor

When evaluating or renewing HR tech contracts, use this checklist to safeguard your organization:

Does this product use AI or machine learning in any aspect of its functionality?

What decisions or recommendations does the AI influence within our workflows?

What is the source of the training data?

Can we review the model’s logic or its explanation of outputs?

How often is the model retrained or updated?

Is there always a human in the loop before final decisions are made?

Can AI-driven decisions be overridden, and is the override tracked?

What happens to employee data when our contract ends?

Do we receive complete audit logs of AI decisions and activity?

Is this tool considered “high-risk” under the EU AI Act? How are you ensuring compliance?
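The questions about human review and tracked overrides describe a behavior you can ask a vendor to demonstrate. A rough sketch of that behavior follows, assuming a hypothetical `finalize` step that refuses to act without a named reviewer and records whether the human disagreed with the AI. None of these names come from a real product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FinalDecision:
    """Outcome of one reviewed decision (hypothetical structure)."""
    outcome: str
    ai_recommendation: str
    reviewer: str
    overridden: bool


def finalize(ai_recommendation: str,
             reviewer: Optional[str],
             human_outcome: Optional[str]) -> FinalDecision:
    """Turn an AI recommendation into a final decision only after human review."""
    if not reviewer or human_outcome is None:
        raise PermissionError(
            "AI recommendation cannot be finalized without a named human reviewer"
        )
    return FinalDecision(
        outcome=human_outcome,
        ai_recommendation=ai_recommendation,
        reviewer=reviewer,
        # The override is tracked by comparing the human call to the AI's.
        overridden=(human_outcome != ai_recommendation),
    )


decision = finalize("reject", reviewer="recruiter_17", human_outcome="advance")
print(decision.overridden)
```

The point of the design is that the override is stored next to the original recommendation, so a later audit can see both what the system suggested and what a person actually decided.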

Final Take: Accountability Isn’t Optional

HR leaders can no longer afford to treat AI as someone else’s domain. If the technology touches people, it’s your responsibility.

Relying on vendors to carry your compliance burden is not just risky; it’s untenable from both a legal and a regulatory standpoint. What’s needed now isn’t just better contracts. It’s active ownership of how AI systems shape careers, pay, performance, and opportunity.

You don’t need to fear your HR tech stack — but you do need to govern it.

The future of ethical, equitable, and compliant HR won’t be built by vendors. It will be shaped by leaders willing to ask hard questions — and demand clear answers.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.

PS — If you know a CHRO, HR VP, or talent leader navigating AI in the talent cycle, please restack and share this post. It grows AI Governance for HR and accelerates the release of When AI Breaks the Law and its companion toolset.