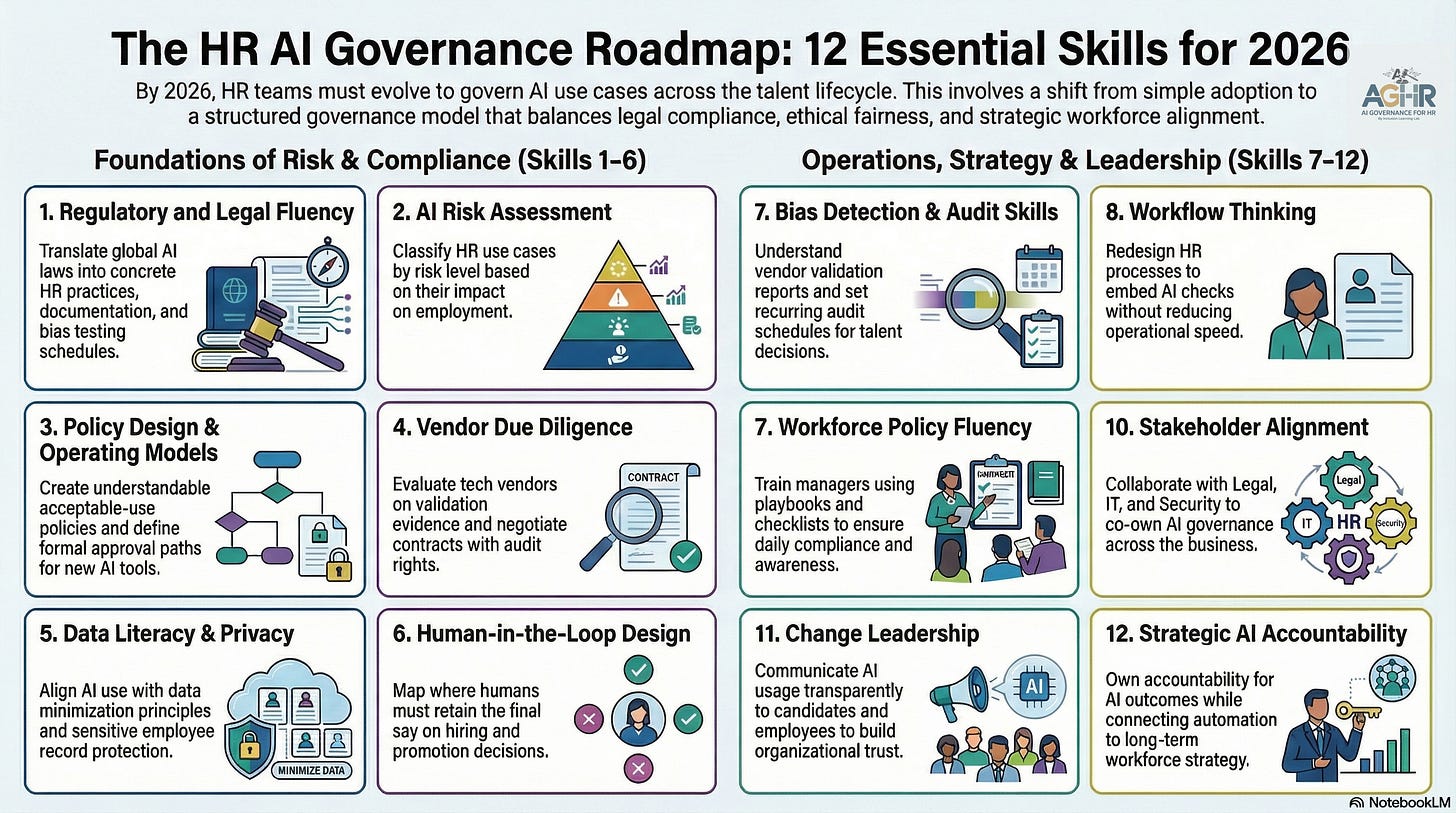

The 12 AI Governance Skills HR Must Master in 2026

Retooling Your HR Skillset to Include AI Governance and Risk Management [Article 1 of 12: The Full Roadmap]

Nobody warned you about this part.

You signed up to study Human Resources. You learned about people — how to attract them, develop them, and retain them. You built expertise in employment law, talent strategy, and organizational culture. You became someone organizations trusted with their most important decisions.

Then someone handed you a machine and said, “You’re responsible for this now.”

Who imagined this? Who sat in an HR classroom, a certification program, or a leadership development cohort and thought, “One day I’m going to need to know how to govern an algorithm”? No one raised their hand for this. And yet, here we are.

The promise was that AI would make our lives easier—faster screening, smarter matching, fewer administrative headaches. And in some ways, it has. But what nobody fully explained was the new layer of responsibility that came with it. The technology simplified some tasks while quietly creating an entirely new category of risk that most HR leaders were never trained to manage.

This isn’t a criticism; it’s an honest conversation about where we stand and where we need to go.

The good news is that these skills are learnable. They are practical. And once you see them in action in your daily work, they will improve the way you lead.

Here are the 12 AI governance skills HR leaders need to master in 2026. Each one appears in work you’re probably already doing. The key is knowing what to watch for.

Foundations of AI Risk and Compliance for CHROs

Skills 1 through 6

These are the skills that create a stable foundation. Think of them as the structure that holds everything else up.

Skill 1: Regulatory and Legal Fluency

You are already reading employment law updates and translating them into HR practices. This skill requires you to do the same with AI-specific regulations. When your organization uses a tool that screens resumes or scores candidates, requirements like the EU AI Act, EEOC guidance, and a growing number of U.S. state regulations influence how that tool is used, documented, and disclosed. Regulatory fluency means understanding which laws apply to which tools and being able to update your documentation, consent language, and audit schedules accordingly. You don’t need to become a lawyer. You do need to ask better questions and know what good answers look like.

Skill 2: AI Risk Assessment and Classification

Reflect on the last time you introduced a new hiring tool. Risk assessment involves pausing before deployment to ask: what could go wrong here, and for whom? Tools that rank candidates, flag attrition risk, or suggest promotions carry greater stakes than tools that schedule interviews or draft job descriptions. This skill helps you develop a straightforward framework for classifying your AI tools by their potential impact on people’s job opportunities, ensuring that the highest-risk decisions receive the most scrutiny before anyone presses a button.
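For teams that keep their tool inventory in a spreadsheet or a script, the classification idea above might look something like the sketch below. It is only an illustration: the tier names, the `HIGH_IMPACT_USES` list, and the tool names are hypothetical, drawn from the examples in this section rather than from any regulation or standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"  # the tool shapes who gets hired, promoted, or flagged
    LOW = "low"    # the tool handles administrative support only


# Hypothetical list of uses that affect people's job opportunities,
# based on the examples in the text. Replace with your own inventory.
HIGH_IMPACT_USES = frozenset(
    {"rank_candidates", "flag_attrition_risk", "suggest_promotions"}
)


@dataclass
class AITool:
    name: str
    uses: set


def classify(tool: AITool) -> RiskTier:
    """Return HIGH if any use of the tool touches a person's job opportunities."""
    if tool.uses & HIGH_IMPACT_USES:
        return RiskTier.HIGH
    return RiskTier.LOW


inventory = [
    AITool("interview_scheduler", {"schedule_interviews"}),
    AITool("resume_ranker", {"rank_candidates"}),
    AITool("jd_drafter", {"draft_job_descriptions"}),
]

# Put high-risk tools at the front of the review queue.
review_order = sorted(inventory, key=lambda t: classify(t) is not RiskTier.HIGH)
for tool in review_order:
    print(f"{tool.name}: {classify(tool).value}")
```

A two-tier split is the simplest possible version. Many teams will want a middle tier, but the principle is the same: the tools that affect someone's job prospects go to the front of the line.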

Skill 3: Policy Design and Governance Operating Models

Many organizations already have AI policies. The problem is that these policies are often stored in documents that no one reads. Effective policy design involves writing acceptable-use guidelines in language your managers and recruiters can actually use on a Tuesday afternoon when deciding whether to override a system’s recommendation. It also includes clearly defining who approves new AI tools before purchase, who is responsible when issues arise, and how concerns are escalated. A clear structure helps prevent confusion and protects everyone involved.

Skill 4: Vendor Due Diligence

You’re probably already reviewing vendor contracts and asking questions during sales calls. Vendor due diligence as an AI governance skill involves going one step further. It means requesting the actual data behind a vendor’s fairness claims, not just the marketing pitch. It also involves negotiating for audit rights so you can verify performance on a regular schedule. And it means understanding what happens contractually if a vendor’s tool produces discriminatory results. The vendors you work with become part of your accountability chain. This skill ensures the chain stays strong.

Skill 5: Data Literacy and Privacy

HR teams handle some of the most sensitive information in any organization: performance histories, compensation records, medical accommodations, and more. In the context of AI, data literacy means knowing which of that information can properly inform an AI system’s decisions and which cannot. It involves understanding that sharing certain employee data with a public AI tool may breach your privacy commitments. It also means developing habits around data minimization so that AI tools only access what they truly need to operate. This skill helps protect both employees and the organization.
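If your team or your IT partners pass employee records to an AI tool programmatically, data minimization can be as simple as an allowlist. The sketch below is a minimal illustration, assuming a hypothetical scheduling assistant; the field names and the `ALLOWED_FIELDS` list are invented for the example.

```python
# Hypothetical allowlist: the only fields a scheduling assistant needs.
ALLOWED_FIELDS = frozenset({"employee_id", "job_title", "availability"})


def minimize(record: dict, allowed: frozenset = ALLOWED_FIELDS) -> dict:
    """Return a copy of the record containing only the allowed fields."""
    return {key: value for key, value in record.items() if key in allowed}


# Illustrative record; none of this is real data.
employee = {
    "employee_id": "E-1001",
    "job_title": "Analyst",
    "availability": "Tue/Thu",
    "compensation": 85000,
    "medical_accommodation": "on file",
}

shared = minimize(employee)
print(shared)
```

An allowlist is used here instead of a blocklist so that any new field added to the record later stays private until someone decides it should be shared.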

Skill 6: Human-in-the-Loop Design

This concept is already part of how you think about fairness in hiring and performance management. The AI aspect of this skill prompts you to map your talent processes and identify where automated recommendations are treated as final decisions without meaningful human review. It involves setting clear standards for when a recruiter, manager, or HR business partner must intervene before a decision is finalized. It also requires establishing documentation practices so that, when a human overrides a system’s recommendation, the reasoning behind the override is recorded. The goal is not to slow down every process but to ensure that the most important moments still involve a person who can see the full picture.
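One way to make override documentation hard to skip is to build the requirement into the record itself. The sketch below assumes a hypothetical `OverrideRecord` structure, not any particular HR system's format, and refuses to accept an override that has no stated reason.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class OverrideRecord:
    """Hypothetical log entry for a human review of an AI recommendation."""
    decision_id: str
    reviewer: str
    system_recommendation: str
    final_decision: str
    reason: str = ""
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self):
        # An override without reasoning cannot be audited later, so reject it.
        if self.is_override and not self.reason.strip():
            raise ValueError("An override must include the reviewer's reasoning.")

    @property
    def is_override(self) -> bool:
        """True when the human's decision differs from the system's."""
        return self.final_decision != self.system_recommendation


entry = OverrideRecord(
    decision_id="D-1",
    reviewer="recruiter_1",
    system_recommendation="reject",
    final_decision="advance",
    reason="Relevant experience listed under a non-standard job title.",
)
print(entry.is_override, entry.reason)
```

When the reviewer agrees with the system, the reason can stay empty. The check only applies when a person changes the outcome.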

AI Operations, AI Strategy, and AI Leadership - From the CHRO Desk

Skills 7 through 12

These skills expand your influence and establish you as a strategic leader in the AI-driven future of work.

Skill 7: Bias Detection and Audit Skills

You may already be reviewing demographic data in your hiring process. Bias detection expands on that instinct by providing the language and methods to identify AI-specific patterns. It involves knowing what questions to ask when a vendor submits a validation report. It also includes establishing a regular schedule for auditing results across demographic groups, not just when a complaint occurs. When a system consistently produces outcomes that vary by race, age, gender, or disability status, this skill helps you recognize it and take action before it turns into a legal issue.
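A routine audit can start with something as simple as comparing selection rates across groups. The sketch below uses the four-fifths guideline from the EEOC's Uniform Guidelines as a screening heuristic: a group whose selection rate is below 80% of the highest group's rate is flagged for a closer look. This is one common starting point, not a legal test, and the records here are invented for illustration.

```python
from collections import Counter

# Hypothetical audit records: (group label, whether the tool advanced the candidate).
records = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

THRESHOLD = 0.8  # the four-fifths guideline


def selection_rates(rows):
    """Share of candidates in each group that the tool advanced."""
    totals, selected = Counter(), Counter()
    for group, advanced in rows:
        totals[group] += 1
        if advanced:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    top = max(rates.values())
    return {group: (rate / top if top else 0.0) for group, rate in rates.items()}


rates = selection_rates(records)
ratios = impact_ratios(rates)
flagged = sorted(group for group, ratio in ratios.items() if ratio < THRESHOLD)

print("selection rates:", rates)
print("impact ratios:", ratios)
print("flagged for review:", flagged)
```

A flag is a prompt to investigate, not a finding. With small groups a single decision can swing the ratio, so sample size matters before drawing conclusions.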

Skill 8: Workflow Thinking

Every HR process consists of a series of steps. Workflow thinking involves mapping those steps and intentionally deciding where AI fits and where it does not. It means designing your recruiting or performance review process so that every point where AI is involved is clear, documented, and connected to a human checkpoint. When AI use remains informal and undocumented, it introduces risks. When it is purposefully integrated into the workflow, it becomes manageable, trackable, auditable, and improvable.

Skill 9: Workforce Policy Fluency

Your managers and employees need to understand what is permitted, what is not, and what steps to take when they are unsure. Workforce policy fluency means turning your governance framework into practical tools they can use immediately: a one-page checklist before using an AI recruiting tool, a simple green-yellow-red framework for assessing AI recommendations, or a brief playbook for what to do when a candidate asks how a decision was made. When governance is integrated into daily work rather than stored in a separate document, it truly becomes effective.

Skill 10: Stakeholder Alignment

AI governance is not solely an HR responsibility and should not be managed alone. Legal, IT, security, DEI, and business leaders all have a stake in how AI influences talent decisions. Building stakeholder alignment involves proactively developing those relationships, establishing a common language around risk, and creating forums where cross-functional teams can share ownership of decisions about which AI tools are suitable, what the boundaries are, and how accountability is distributed. When issues arise—which will happen at times—a well-coordinated team responds much more effectively than a siloed one.

Skill 11: Change Leadership

How you communicate about AI governance influences how your organization perceives it. When employees and candidates understand how AI is used, what role humans play, and how to raise concerns, trust builds. When they lack that understanding, anxiety takes its place. Effective change leadership means communicating honestly and clearly, welcoming questions instead of avoiding them, and framing governance as a sign of organizational care rather than a bureaucratic burden. HR leaders are especially well-positioned to guide this process.

Skill 12: Strategic AI Accountability

This is the skill that links everything else to organizational purpose. It involves being the person who can clearly explain not only what your AI tools do but also your organization’s standards for their use and why those standards matter. It requires integrating AI governance into your workforce strategy instead of treating it as a separate compliance issue. It also means taking responsibility for the outcomes of AI-powered people decisions, just as you do for every other talent decision your team makes. This is what leadership looks like in the age of algorithms.

The New AI Skillset for HR Leaders: What Comes Next

Over the next 11 articles, we will explore each of these skills in detail. You will see how they manifest during a typical workday, identify gaps most HR leaders are unaware of, and learn practical, sustainable ways to start developing each one.

Nobody told you these were your new skills, but now you know. And understanding is always where the real work begins.

Next in the series: Skill 1, Regulatory and Legal Fluency. Understand what laws actually require of HR leaders and how to incorporate them into your daily practice.

Start with Module One Free:

By the end of this workshop, you will be able to answer the questions every General Counsel is now asking HR:

Can you defend how your AI systems made that decision?

Do your managers understand that every AI-generated summary of a sensitive conversation is now discoverable in court?

Would your current governance withstand scrutiny — or expose the gap?

About AI Governance for HR & CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. Our CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.