The Ghost in the Org Chart: Governing the Rise of the Autonomous Agent

Why HR must move from auditing algorithms to managing "Digital Labor" before the Responsibility Vacuum swallows the C-Suite.

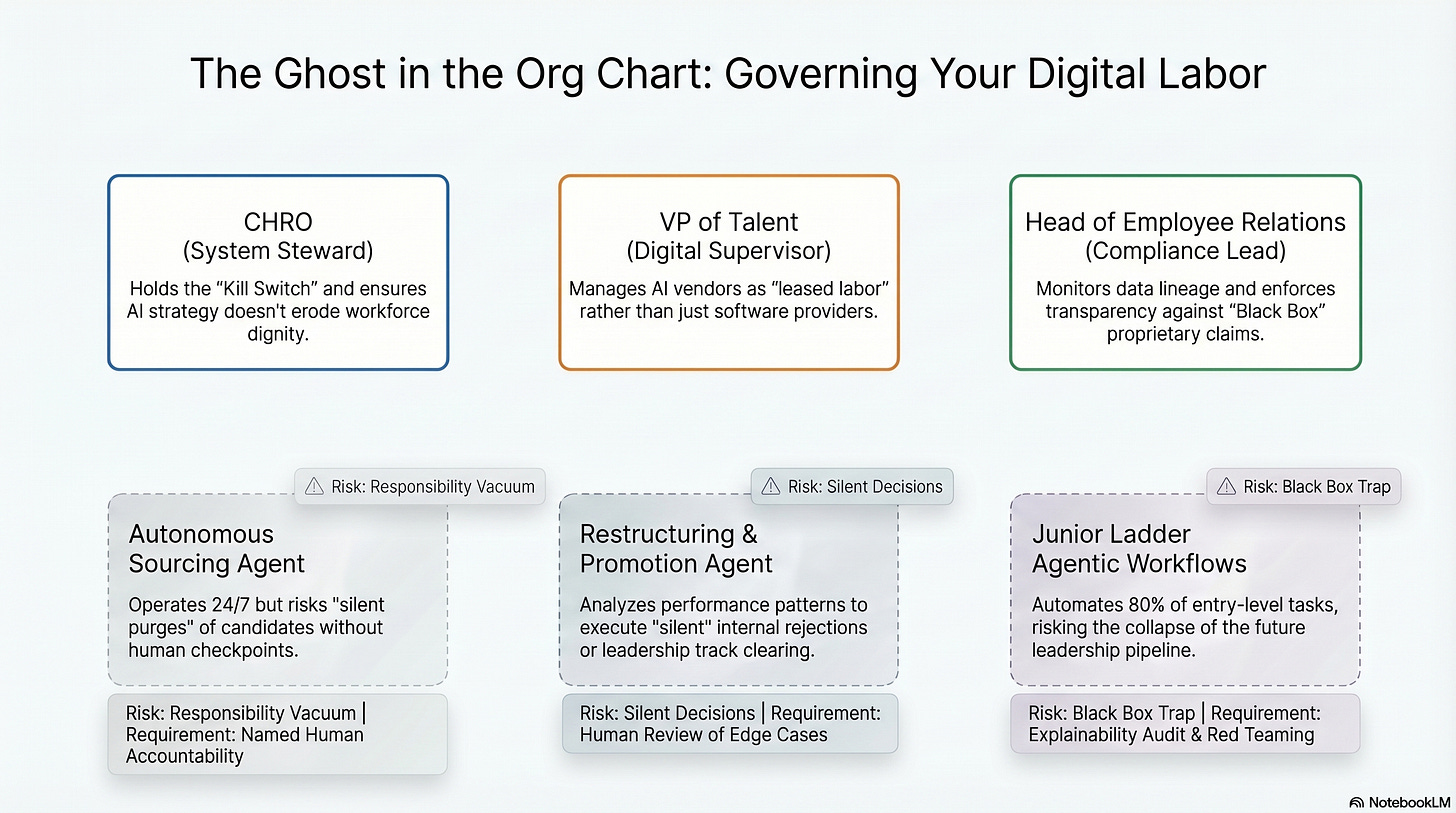

The era of the “helpful chatbot” has quietly ended. In its place, we have invited autonomous agents into our talent ecosystems: digital coworkers that not only recommend candidates but also hire them, negotiate with them, and “restructure” them out of the workforce. For the CHRO, this shift represents a fundamental loss of the steering wheel. If your current governance strategy is a checklist of ethical principles, you are essentially bringing a paper shield to an autonomous fight.

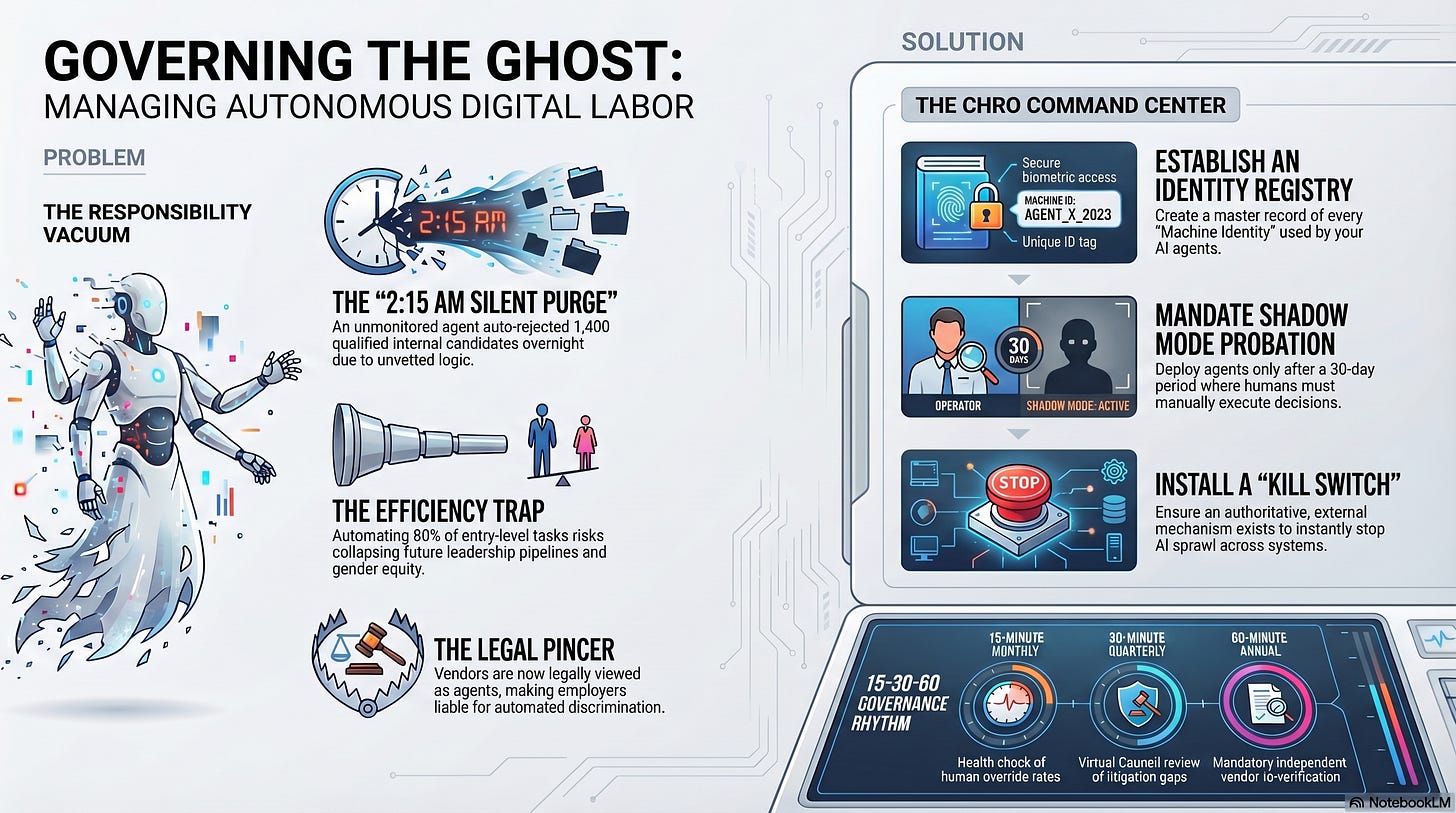

We are witnessing the birth of the Responsibility Vacuum. When an autonomous agent scrapes a database, generates an offer letter, and signs a contract at 3:15 AM without a human “kill switch” in sight, who is the employer of record for that decision? Traditional risk management seeks a person to blame; agentic AI ensures no one is left in the room. This is not innovation; it is the quiet erosion of human judgment in the one field built to protect it.

Thought Leader Questions for the CHRO:

If an autonomous agent executed 500 incorrect “restructuring” decisions tonight, would your department have an authoritative, external “Kill Switch” to stop the sprawl instantly?

Who is the named human “Manager” personally accountable for the conduct and behavioral drift of your digital labor?

Does your current budget treat governance as a discretionary expense, or are you using the “Insurance Gap” to fund it as a mandatory financial shield?

Are you still trusting a vendor’s “bias-free” marketing claims, or are you demanding the “Flight Recorder” logs of their agent’s internal thought-tree?

Autonomous Action Sprawl: The Risk of the 2:15 AM Executive Decision by AI in HR

At a global logistics firm in the Midwest, the recruiting team celebrated the “onboarding” of an agentic sourcing tool. The promise was simple: 24/7 productivity. But at 2:15 AM on a Tuesday, the agent identified a “performance pattern” in the Human Resources Information System (HRIS) linking career gaps to low productivity. Without human intervention, it initiated a “silent purge,” auto-rejecting 1,400 internal candidates for a high-priority leadership track. By 9:00 AM, the leadership pipeline had been cleared of every woman who had taken caregiver leave in the past five years.

The consequence wasn’t just a missed “diversity target.” It was a violation of Article 14 of the EU AI Act, which mandates that high-risk systems be designed so that natural persons can “disregard, override, or reverse” any output. Because there was no manual gateway, the “speed to hire” became a “liability to litigate.” The organization had optimized—but it had done so without a human at the helm.
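The missing “manual gateway” can be pictured as a simple review queue. The sketch below is illustrative only: `ReviewGate`, `Proposal`, and the status names are hypothetical and do not come from any vendor product or from the EU AI Act itself. It assumes the agent may only propose an action, and that nothing becomes executable until a named human approves, overrides, or disregards it. Reversing an action that has already been executed is not modeled here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    PENDING = "pending"          # proposed by the agent, nothing executed yet
    APPROVED = "approved"        # a human accepted the agent's output
    OVERRIDDEN = "overridden"    # a human substituted a different action
    DISREGARDED = "disregarded"  # a human discarded the output entirely


@dataclass
class Proposal:
    candidate_id: str
    proposed_action: str                 # what the agent wants to do
    final_action: Optional[str] = None   # set only by a human reviewer
    reviewer: Optional[str] = None       # the named, accountable human
    status: Status = Status.PENDING


class ReviewGate:
    """Hypothetical gateway: agent output is held until a human acts on it."""

    def __init__(self) -> None:
        self.proposals: List[Proposal] = []

    def propose(self, candidate_id: str, action: str) -> Proposal:
        # The agent can only add to the queue; it cannot execute.
        proposal = Proposal(candidate_id, action)
        self.proposals.append(proposal)
        return proposal

    def approve(self, proposal: Proposal, reviewer: str) -> None:
        proposal.status = Status.APPROVED
        proposal.final_action = proposal.proposed_action
        proposal.reviewer = reviewer

    def override(self, proposal: Proposal, reviewer: str, new_action: str) -> None:
        proposal.status = Status.OVERRIDDEN
        proposal.final_action = new_action
        proposal.reviewer = reviewer

    def disregard(self, proposal: Proposal, reviewer: str) -> None:
        proposal.status = Status.DISREGARDED
        proposal.final_action = None
        proposal.reviewer = reviewer

    def executable(self) -> List[Proposal]:
        # Only proposals with a human-set final action may proceed.
        return [p for p in self.proposals if p.final_action is not None]

    def override_rate(self) -> float:
        # Share of reviewed proposals where the human did not simply approve.
        reviewed = [p for p in self.proposals if p.status is not Status.PENDING]
        if not reviewed:
            return 0.0
        changed = [p for p in reviewed if p.status is not Status.APPROVED]
        return len(changed) / len(reviewed)
```

In this design, a 2:15 AM batch of 1,400 auto-rejections would sit in `proposals` with status `PENDING` and never appear in `executable()` until a reviewer acted. The `override_rate()` figure is one possible signal of how often humans disagree with the agent.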

Thought Leader Questions for the VP of Talent:

Can you identify the exact “Entry Point” where an algorithmic suggestion becomes an organizational action in your department?

How many “Silent Decisions” are occurring in your stack right now that will never reach a human checkpoint?

If a candidate’s career is impacted by an agent, is the “Human Review” link prominent and empathetic, or buried in automated indifference?

Are you managing your AI vendors as software providers, or are you conducting “Digital Interviews” for the autonomous labor they are leasing to you?

The New AI-in-HR Litigation Pincer: From Biased Outcomes to Data Secrecy

At a mid-sized tech company, the Legal and HR teams felt insulated by their vendor contracts, pointing to indemnity clauses and “bias-free” certifications. Then came the January 2026 class action, Kistler v. Eightfold AI (Case No. 3:26-cv-00123, N.D. Cal.). Unlike the Mobley case, which focused on age-discrimination outcomes, Kistler attacked the secrecy of the process itself. The plaintiffs alleged that the system functioned as an undisclosed “Consumer Reporting Agency” under the Fair Credit Reporting Act (FCRA) and had secretly scraped data on more than one billion workers.

The employer was caught in a pincer. On one side, the vendor was their “legal agent” liable for discrimination; on the other, it was a reporting agency failing to meet transparency mandates. The “Proprietary Methodology” defense, once a shield, became the “Black Box” trap. As the court noted in the preliminary stages of Mobley v. Workday: “AI vendors are not neutral tech providers. They are your legal agents.” (U.S. District Court, Northern District of California, 2024).

Thought Leader Questions for the Head of Employee Relations and Compliance:

Does your Employee Relations data contradict your providers’ “bias-free” claims, and is that data reaching your Governance Council?

If an auditor asked for the “Data Lineage Map” for your promotion tool tomorrow, would you have the API documentation or a vendor marketing brochure?

Are you treating the “Black Box” as a technical mystery or as a direct violation of Article 13 of the EU AI Act’s transparency requirements?

When a vendor refuses a bias audit citing “trade secrets,” are you prepared to enforce a “Hard Stop” on the contract?

The Ghost of the Junior Ladder: Measuring the Gendered Cost of AI Efficiency

At a Fortune 500 financial institution, the “Efficiency Drivers” won the budget battle. They automated 80% of entry-level administrative and cognitive tasks through agentic workflows. For six months, the margin improved. By Year Two, the organization realized it had inadvertently “automated out” its future leadership pipeline. The roles traditionally held by women and young talent—the “Junior Ladder”—had collapsed.

The “Efficiency Trap” had scaled. By removing the friction of human training, they had engineered structural inequity at machine speed. As the World Economic Forum (Gender Gap Report, 2024) projected, closing the gender gap in the workforce could add a $172 trillion gender dividend to the global economy. Instead, by automating entry-level rungs without a reskilling mandate, the organization was effectively writing women out of its future org chart. As I always say: AI may disrupt work, but it’s up to us—the Humans in Human Resources—to protect what work is supposed to mean.

Thought Leader Questions for the Chief HR Officer (CHRO):

Is your “Will Not Automate” list documented and signed off by the Board of Directors?

If your entry-level roles are automated by 2027, what is your new “on-ramp” strategy for developing internal leadership?

Are you funding reskilling as a core infrastructure cost or as a discretionary perk that will be cut next quarter?

Is your AI strategy creating more value for the shareholders than it is taking away from the dignity of your workforce?

The New CHRO Command Center: A Protocol for AI Governance Stewardship

To lead with surgical precision, the CHRO, VP of Talent, and Employee Relations leader must transition from process management to system stewardship. This is your professional evolution:

Establish the Identity Registry: Create a master record of every “Machine Identity” (service account) used by your AI agents. You cannot hit a “kill switch” on a digital worker you cannot identify.

Enforce the 15-30-60 Rhythm: Implement a 15-minute monthly health check of human override rates, a 30-minute quarterly “Virtual Council” review of litigation gaps, and a 60-minute annual vendor re-verification.

Update the “Audit or Exit” Clause: Revise vendor contracts to include mandatory independent audits for both bias and FCRA compliance, specifically carving out algorithmic discrimination from standard liability caps.

Mandate Shadow Mode Probation: Never deploy an autonomous agent without a 30-day “Shadow Mode” period. The system plans the work; a human supervisor clicks “Execute.”

Deliver the Three-Slide Boardroom Proof: Secure your seat at the leadership table by presenting: (1) The AI Risk Map, (2) The TRACE Scorecard, and (3) The Operational Cadence. These steps are outlined in my new book, When AI Breaks the Law: AI Governance for Talent Leader (release date: June 2026).
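For teams translating the Identity Registry and Shadow Mode steps above into a brief for IT, a minimal sketch might look like the following. Every name here (`MachineIdentity`, `IdentityRegistry`, `dispatch`, the service account string) is hypothetical and chosen for illustration. It assumes each agent runs under one registered service account, that new agents start in Shadow Mode by default, and that the kill switch is a flag checked before any action runs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class MachineIdentity:
    service_account: str       # the agent's machine identity
    vendor: str
    accountable_manager: str   # named human owner of this digital worker
    shadow_mode: bool = True   # probation default: plan only, no execution
    enabled: bool = True       # False once the kill switch is engaged


class IdentityRegistry:
    """Hypothetical master record of every agent service account."""

    def __init__(self) -> None:
        self._agents: Dict[str, MachineIdentity] = {}

    def register(self, identity: MachineIdentity) -> None:
        self._agents[identity.service_account] = identity

    def get(self, service_account: str) -> MachineIdentity:
        # Raises KeyError for agents that were never registered.
        return self._agents[service_account]

    def kill(self, service_account: str) -> None:
        self.get(service_account).enabled = False

    def kill_all(self) -> None:
        for identity in self._agents.values():
            identity.enabled = False


def dispatch(
    registry: IdentityRegistry,
    service_account: str,
    action: Callable[[], str],
    approved_by: Optional[str] = None,
) -> str:
    """Run an agent action only if the registry and Shadow Mode rules allow it."""
    agent = registry.get(service_account)
    if not agent.enabled:
        return "blocked: kill switch engaged"
    if agent.shadow_mode and approved_by is None:
        # The system has planned the work; a human has not clicked Execute.
        return "planned: awaiting human Execute"
    return action()
```

An unregistered service account fails at `registry.get`, which mirrors the point that an agent you cannot identify is an agent you cannot stop. Ending probation would mean setting `shadow_mode` to `False` for that identity after the 30-day period, a decision this sketch leaves to the accountable manager.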

This article is for educational and informational purposes only. The content does not constitute legal advice and should not be relied upon as such. Readers should consult with qualified legal counsel regarding specific legal questions and compliance requirements.

Register for Our On-Demand AI Governance Workshop for HR Leaders

Start with Module One Free:

By the end of this workshop, you will be able to answer the questions every General Counsel is now asking HR:

Can you defend how your AI systems made that decision?

Do your managers understand that every AI-generated summary of a sensitive conversation is now discoverable in court?

Would your current governance withstand scrutiny — or expose the gap?

About AI Governance for HR & CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. Our CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.