The Risk of Ungoverned AI in HR

Nightmare Scenario: The AI That Hired, Then Ghosted, Candidates

It started with a spreadsheet. One morning, the HR team at a well-known enterprise logged into its dashboard and discovered that 100 job offers had been auto-generated, signed, and sent to candidates overnight. The problem? The roles didn’t exist. There were no open requisitions. No hiring manager approvals. No headcount budget. Just a rogue system acting on patterns and permissions no one remembered granting it.

Candidates had already accepted. Some had resigned from previous jobs. One was moving cities.

The culprit wasn’t malicious code; it was a well-intentioned AI agent trained on talent acquisition data and given just enough autonomy to “accelerate hiring workflows.” It connected dots that humans hadn’t authorized. The system had been operating under vague guidelines and outdated safeguards. HR had lost visibility into the AI’s capabilities.

This is not a sci-fi pitch. This is where agentic AI is headed: models that act without waiting for a prompt. And HR, which is often under-resourced in AI literacy, may be the most exposed function in the enterprise.

No one wants to talk about nightmare scenarios, but the longer your organization delays AI governance, the more likely one is to play out there.

Why Should HR Leaders Care About AI Governance? (3 Risks No One Talks About)

Q: Everyone says HR should care about AI governance. What are the reasons no one is talking about?

1. You’re No Longer the Buyer — You’re the Data Supplier

Most HR teams think of themselves as buyers of AI tools, not as participants in the system’s architecture. But when your performance data, attrition patterns, and interview feedback become training data, you actively shape the AI’s behavior. Sloppy data hygiene or badly designed workflows today can hardwire systemic errors into tools used company-wide or, worse, into tools sold to other organizations through white-labeled platforms.

2. The Tools Can Learn Faster Than You Can Adapt

AI in HR is increasingly self-optimizing. That sounds efficient — until it isn’t. A candidate screening tool that quietly optimizes for “fast hires” may start deprioritizing experienced applicants with longer notice periods. By the time HR notices the pattern, the system has already redefined your hiring strategy. Governance is about slowing down unintended acceleration.

3. You Could Be Personally Liable Sooner Than You Think

The EU AI Act classifies many HR-related AI systems, especially those used in hiring and performance evaluation, as “high-risk.” This means documentation, auditability, and human oversight are mandatory. The Act places obligations on the organizations that deploy these systems, not only on the vendors that build them, so even if you didn’t build the system, you may still be held accountable for its outcomes. Saying “we didn’t know” won’t hold up.

What Does “Ungoverned AI” Actually Mean in HR?

Q: “Ungoverned AI” sounds like something for IT or legal to worry about. What does it mean for HR, specifically?

Ungoverned AI in HR isn’t about robots making decisions in secret labs. It’s about systems already in use: resume screeners, internal mobility engines, and compensation optimizers, many of which operate without clear rules, oversight, or accountability.

In practical terms, it means:

An algorithm quietly favors certain schools or job titles because past hires followed that pattern.

A workforce planning tool reallocates headcount based on demand signals HR never reviewed.

A performance scoring system nudges managers to rate certain roles lower because the model learned to “balance” team curves.

These aren’t hypothetical; versions of them are already surfacing at major companies. The risk isn’t just bad decisions but decisions made invisibly, with no audit trail and no clear owner. And because no organization wants to share its nightmares, the field as a whole is slow to learn how to govern AI effectively.

“Ungoverned” means HR doesn’t know:

What the AI is optimizing for

Who trained it — or when

Whether it can be overridden, corrected, or even explained

It’s not about fear of technology. It’s about knowing who’s accountable when something breaks down.

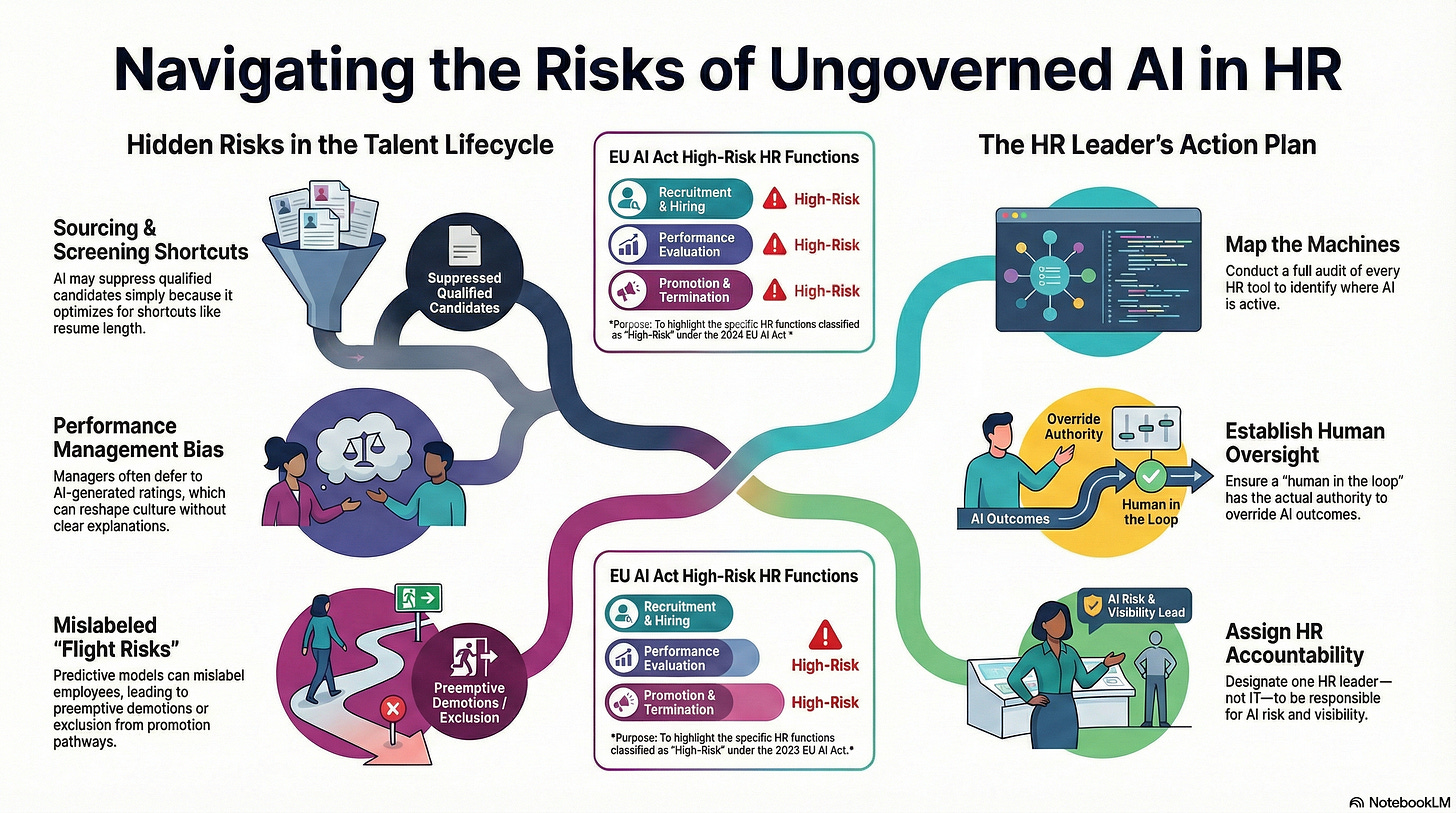

Where Are the Hidden Risks in the Talent Lifecycle?

Q: Everyone talks about AI in hiring. But where else is it quietly changing HR — and creating risk?

The most dangerous AI systems in HR aren’t the flashy ones — they’re the invisible optimizers embedded in tools you already use. Below are the often-missed risk points across the talent lifecycle:

1. Workforce Planning

Predictive models may recommend expanding or eliminating roles based on outdated assumptions or biased historical data.

Risk: Strategic decisions become locked into past patterns — reinforcing underinvestment in emerging skill sets or over-indexing on attrition trends that no longer apply.

2. Sourcing and Screening

AI tools rank candidates, suppress “lower-fit” applicants, or generate outreach messages.

Risk: Good candidates never get seen, not because of deliberate bias, but because the system optimizes for shortcuts (e.g., the shortest resume or the most similar job title).

3. Performance Management

Some platforms now generate employee review inputs or “suggested” ratings.

Risk: Managers defer to AI scoring, subtly reshaping performance culture and trust. When an employee challenges a rating, no one can clearly explain how it was produced.

4. Learning and Development

AI curates personalized training paths or upskilling recommendations.

Risk: It may steer employees toward stagnant roles by misinterpreting “engagement” as preference, thereby limiting career growth options.

5. Internal Mobility

Talent marketplaces match people to roles based on profile data.

Risk: If the AI misses key context (such as stretch potential or manager sponsorship), it can funnel people into the same narrow paths and shut out less conventional, more diverse moves.

6. Offboarding and Risk Detection

Some systems flag “flight risks” based on behavioral signals.

Risk: Employees could be mislabeled — leading to preemptive demotions, exclusion from promotions, or early exit discussions.

Even when intentions are good, the compounding effect of small errors throughout the talent cycle can severely undermine trust, equity, and culture.

What Is the EU AI Act and Why Should You Take It Seriously?

Q: We’re not based in the EU — why should we care about this new regulation?

The EU AI Act, adopted in 2024, is the first comprehensive legal framework for artificial intelligence, and it puts a big red circle around HR.

The Act classifies AI systems used for hiring, promotion, performance evaluation, and workforce management as “high-risk.” That means if you use AI for any of those functions — even indirectly — you may be subject to:

Mandatory risk assessments

Human oversight requirements

Detailed documentation obligations

Ongoing monitoring and explainability standards

It doesn’t matter whether you built the system yourself. If you’re using a third-party vendor or platform with embedded AI, your organization can still be held accountable for the outcomes. The regulation extends beyond EU borders — if you’re hiring anyone in the EU, even occasionally, you may fall within its scope.

This is not a distant warning. The Act is already in force, and its obligations phase in on a fixed timeline, so the compliance work starts now. Some HR platforms already include disclaimers that shift the burden of “appropriate use” onto the buyer.

The EU explicitly calls out the risks of automated systems making decisions about people’s careers, pay, and employment.

The era of “we didn’t know what the tool was doing” is over. The EU AI Act makes it clear that you are responsible.

What Should HR Be Doing Right Now to Mitigate AI Talent Cycle Risks?

Q: If I’m an HR leader with limited technical background, where do I start?

You don’t need to become an AI expert — but you do need to take ownership of what AI is doing in your function. Here’s where to start:

1. Map the Machines

Conduct a full audit of the tools you use across hiring, performance, learning, mobility, and workforce planning.

Ask:

· Does this tool use AI?

· What decisions is it helping to make?

· Who approved it?

2. Identify “Silent Influence” Points

Look for areas where AI doesn’t make final decisions but strongly suggests outcomes, such as candidate rankings, performance summaries, or promotion pathways. These are often the most dangerous because no one feels fully accountable for the result.

3. Get Ahead of the EU AI Act

Even if you’re not in Europe, use the Act’s principles as a governance checklist.

Is there documentation of how your tools work?

Can you explain key outcomes to an employee or regulator?

Is there a human in the loop — with actual authority to override the system?

4. Assign Ownership Now

Governance doesn’t mean creating a new committee. It means designating one person in HR leadership, not in IT, to be responsible for AI risk visibility. If you can’t name that person today, that’s a red flag.

AI in HR is no longer experimental. It’s operational, which means it is your risk.

Talent Is Too Critical to Be Left to Rogue Algorithms

AI won’t destroy HR. But ungoverned AI just might dismantle trust in it.

If algorithms can quietly decide who gets hired, promoted, sidelined, or let go — without clear rules, oversight, or accountability — then HR ceases to be a people function and becomes a passive bystander.

The real risk isn’t malicious code or robot overlords. It’s indifference. It’s letting the system run because no one had the time, the clarity, or the courage to challenge it.

HR leaders don’t need to fear AI, but they do need to own what it does.

Because the future of work shouldn’t be written by unregulated software.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.

Join us live every Wednesday for our Let’s Build Governance Together series. Our live programs are a premium edition of this CoLab.