Understanding the EU AI Act's Risk Categories: A Guide for HR Professionals

Why the EU AI Act Matters to HR

The European Union’s Artificial Intelligence Act is a landmark regulation that will fundamentally change how companies develop, deploy, and use AI systems. For Human Resources professionals, this isn’t a distant technological issue; it’s a core compliance and ethical challenge that directly affects how you can use AI in recruitment, employee management, and workplace operations.

This guide is designed to demystify the Act’s risk-based categories for an HR audience. We will focus on how the regulation classifies AI applications you might encounter throughout the employee lifecycle, from hiring and promotion to performance monitoring. The Act’s central goal is to promote “human-centric and trustworthy artificial intelligence” while ensuring a high level of protection for fundamental rights (Recital 1, Article 1). Understanding its framework is the first step toward building a compliant and ethical HR technology strategy.

To begin, it’s essential to understand the core terminology the Act uses to define the technologies it governs.

The Building Blocks: Key Definitions for HR

This section clarifies the fundamental terms in the EU AI Act that are most relevant to HR functions. For each definition, we provide the official concept and a simple, one-sentence explanation in HR terms.

What is an ‘AI System’?

The Act provides a broad, technology-neutral definition of an AI system to remain future-proof.

An ‘AI system’ means a machine-based system that is designed to operate with varying levels of autonomy, that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments (Article 3(1)).

In HR terms, this includes everything from resume-screening software and performance-monitoring tools to chatbots that interact with candidates and internal AI-powered survey-analysis platforms.

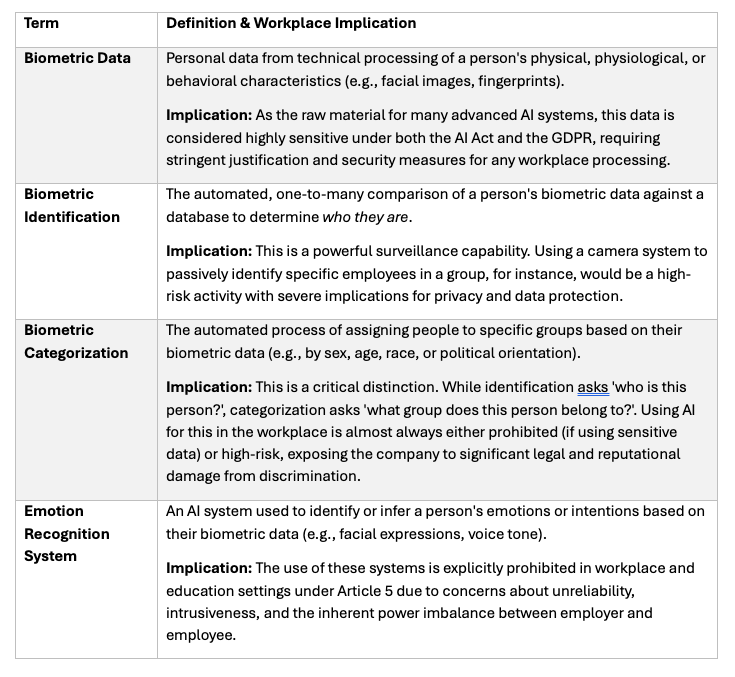

Biometric and Emotion-Based Technologies

The Act pays special attention to technologies that process human characteristics. Understanding these distinctions is crucial because their use is heavily restricted.

Now that these basic terms are clear, we can examine how the EU AI Act uses them to classify AI systems into risk categories.

Unacceptable Risk: AI Practices Prohibited in the Workplace

The EU AI Act takes a firm stance against AI practices that pose a clear threat to human rights, dignity, and EU values. As stated in Recital 28, these practices are “particularly harmful and abusive and should be prohibited.” For HR professionals, this means risk mitigation cannot make such a system compliant: outside the narrow exceptions written into the Act itself, procuring, deploying, or using one is illegal.

The following practices are the most relevant prohibitions for a workplace context:

Emotion Recognition in the Workplace. Under Article 5(1)(f), it is prohibited to place on the market, put into service, or use AI systems to infer the emotions of individuals in workplace settings. The Act recognizes that these systems have “limited reliability” and are highly intrusive, particularly given the “imbalance of power” inherent in an employer-employee relationship (Recital 44). The ban carries one narrow exception: it applies “except where the use of the AI system is intended to be put in place or into the market for medical or safety reasons.”

Sensitive Biometric Categorization. Article 5(1)(g) prohibits using AI to categorize individuals based on their biometric data in order to deduce or infer sensitive characteristics such as race, political opinions, trade union membership, religious beliefs, or sexual orientation. This prevents the use of AI tools that might attempt to profile candidates or employees along these protected lines.

Social Scoring. The Act bans AI systems that evaluate or classify people based on their social behavior or personal characteristics to generate a “social score” (Article 5(1)(c)). If that score results in detrimental or unfavorable treatment in contexts unrelated to where the data was collected, it is prohibited. In an HR context, this would prohibit a system that, for instance, lowers an employee’s internal “trustworthiness” score based on their social media activity, thereby affecting their eligibility for promotion.

These prohibited systems pose the highest level of risk. The next category, while not banned, is subject to the strictest regulations.

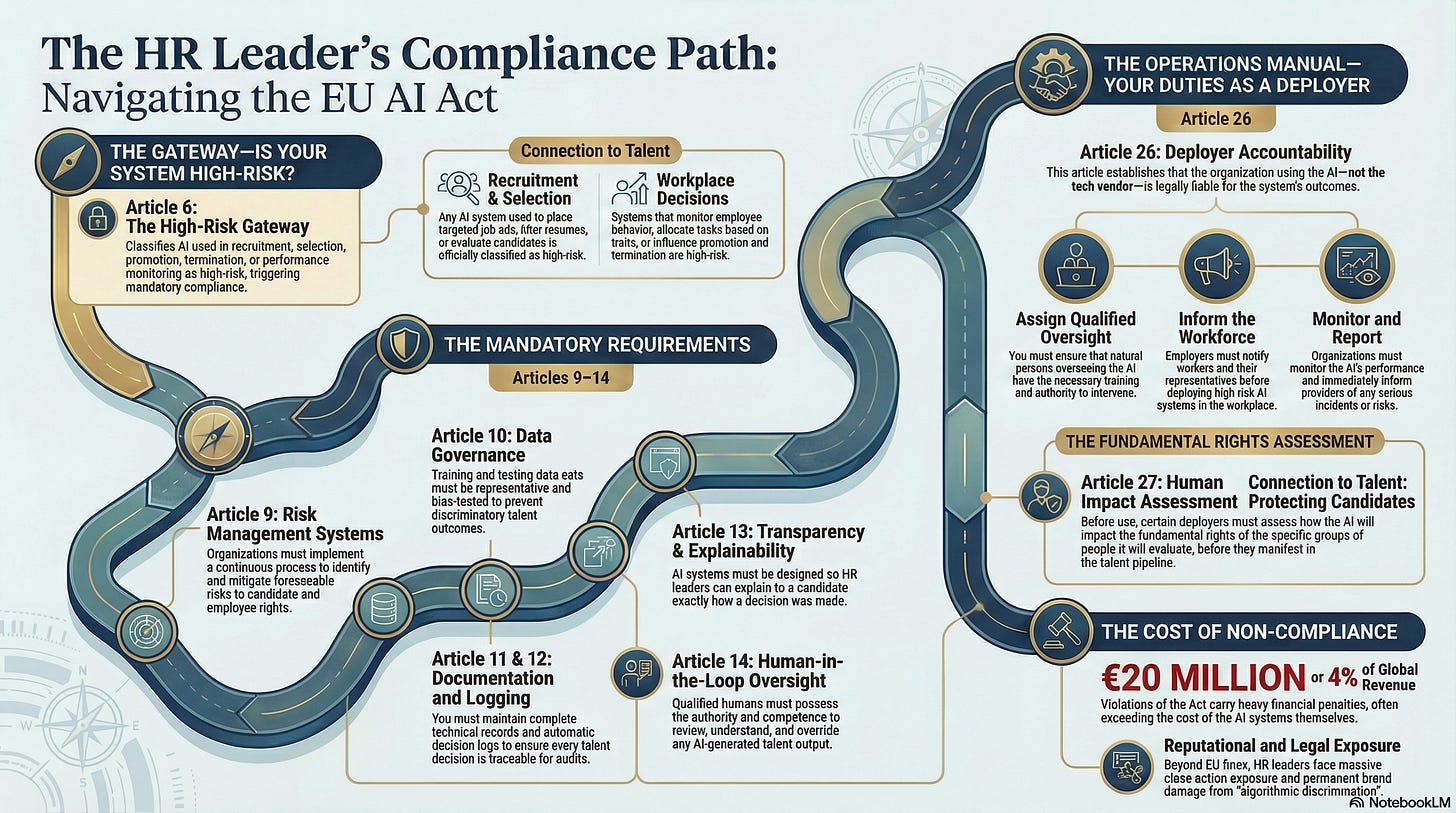

High-Risk AI Systems: What HR Needs to Know

This section is the core of the Act’s regulatory framework for HR. “High-risk” AI systems are not banned, but their potential to significantly affect people’s fundamental rights means they must comply with a strict set of rules before they can be used.

What Makes an AI System “High-Risk”?

High-risk systems are those used in critical areas that can have a substantial impact on a person’s life and opportunities. As Recital 57 highlights, AI systems used in employment are a prime example because they “may have an appreciable impact on future career prospects, livelihoods of those persons and workers’ rights.”

In exchange for remaining lawful, these systems are subject to a rigorous compliance regime. Before deployment, both the system’s provider and the organization using it must meet specific obligations and produce substantial documentation to demonstrate adherence to mandatory requirements, including:

Risk management systems

Data quality and governance

Technical documentation and record-keeping

Transparency and provision of information

Human oversight

Accuracy, robustness, and cybersecurity

High-Risk AI Applications in the Employee Lifecycle

The Act’s binding legal classification in Annex III, point 4, explicitly designates AI systems used in “employment, workers management and access to self-employment” as high-risk. The rationale, explained in Recital 57, is that these systems can significantly affect career prospects and worker rights. The following use cases are specifically identified:

AI for Recruitment and Selection: Systems that place job advertisements, screen or filter applications, and evaluate candidates.

Examples: Automated resume screeners that rank applicants, chatbots that conduct initial interviews, and video analysis tools that evaluate candidate performance.

AI for Promotion and Termination Decisions: Systems used to make decisions that affect the terms of a work relationship, such as career progression or ending an employment contract.

Examples: Performance management software that uses algorithms to recommend employees for promotion, or systems that flag employees for termination based on performance metrics.

AI for Task Allocation: Systems that assign tasks to employees or teams based on individual behavior, personal traits, or inferred characteristics.

Examples: A logistics system that assigns delivery routes based on a driver’s monitored behavior, or a platform that allocates freelance work based on inferred personality traits.

AI for Performance Monitoring and Evaluation: Tools that track or assess employees’ performance, behavior, or productivity.

Examples: Software that tracks employees’ computer activity to measure productivity, or tools that analyze communications to evaluate teamwork and engagement.

Understanding which of your tools fall into these categories is no longer merely a matter of good practice; it is a critical step toward legal compliance.
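For teams that keep their HR tool inventory in a script or a spreadsheet export, the tiers described above can be roughly sketched as a first-pass triage. This is a minimal illustration under stated assumptions, not a legal determination: the names `RiskTier`, `HRTool`, and `triage`, and all of the purpose labels, are hypothetical and do not come from the Act. The sketch also simplifies the rules (for example, it ignores the conditions attached to the social scoring ban), so any match should be treated as a prompt for legal review.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    NEEDS_REVIEW = "needs-review"  # no match here; still requires human assessment


# Hypothetical purpose labels (not terms from the Act), paraphrasing this guide.
EMOTION_RECOGNITION = "workplace_emotion_recognition"   # Article 5(1)(f)
PROHIBITED_PURPOSES = {
    EMOTION_RECOGNITION,
    "sensitive_biometric_categorization",                # Article 5(1)(g)
    "social_scoring",                                    # Article 5(1)(c)
}
HIGH_RISK_PURPOSES = {                                   # Annex III, point 4
    "recruitment_selection",
    "promotion_termination",
    "task_allocation",
    "performance_monitoring",
}


@dataclass
class HRTool:
    name: str
    purposes: set = field(default_factory=set)
    # Assumption: set by a reviewer when the medical or safety exception may apply.
    medical_or_safety_use: bool = False


def triage(tool: HRTool) -> RiskTier:
    """First-pass triage only; the result is a flag for review, not a ruling."""
    prohibited = tool.purposes & PROHIBITED_PURPOSES
    if tool.medical_or_safety_use:
        # The narrow exception concerns emotion recognition only.
        prohibited = prohibited - {EMOTION_RECOGNITION}
    if prohibited:
        return RiskTier.PROHIBITED
    if tool.purposes & HIGH_RISK_PURPOSES:
        return RiskTier.HIGH_RISK
    return RiskTier.NEEDS_REVIEW


if __name__ == "__main__":
    inventory = [
        HRTool("Resume ranker", {"recruitment_selection"}),
        HRTool("Mood tracker", {EMOTION_RECOGNITION}),
        HRTool("Meeting scheduler", {"meeting_scheduling"}),
    ]
    for tool in inventory:
        print(f"{tool.name}: {triage(tool).value}")
```

The sketch checks the prohibited set first on purpose: a tool that combines a banned practice with a high-risk use case should surface as prohibited rather than be treated as something documentation can fix.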

Key Takeaways: Why These Classifications Matter for Your HR Department

The EU AI Act’s risk framework is more than a legal checklist; it’s a strategic guide for building a fairer, more transparent, and more trustworthy workplace. Here are the most critical implications for your HR department.

Scrutinize Your HR Tech Stack. Your immediate priority is to conduct a thorough audit of your current and planned HR technologies. Identify which, if any, of your tools fall into the “prohibited” or “high-risk” categories. For example, an AI tool for scheduling meetings is likely to be low-risk, while a tool that ranks candidates for a promotion is high-risk and requires rigorous oversight.

Vendor Accountability is Crucial. The Act imposes significant legal obligations on providers of high-risk AI systems. As a user (or “deployer” under the Act), your HR team must demand transparency and proof of compliance from your software vendors. Before purchasing or renewing a contract, request documentation and be prepared to ask pointed questions, such as:

Is your system classified as ‘high-risk’ under Annex III of the EU AI Act?

Can you provide us with the system’s EU Declaration of Conformity and technical documentation?

What specific measures have you implemented for effective human oversight?

How have you tested for and mitigated bias in your datasets and algorithms?

Protecting Employee Rights is Paramount. At its core, the Act is designed to safeguard fundamental rights, including non-discrimination, fairness, and privacy (Recitals 48 & 57). Viewing compliance through this lens turns it from a legal burden into a strategic asset. In today’s competitive talent market, a demonstrable commitment to fair and transparent AI is a powerful component of your Employer Value Proposition (EVP). By ensuring your use of AI is transparent, fair, and subject to human oversight, you build trust with candidates and employees, foster a more equitable workplace, and gain a competitive advantage in attracting and retaining top talent.
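The vendor questions listed earlier in this section can be tracked per vendor so that gaps are visible before a contract is signed or renewed. The following is a minimal sketch under stated assumptions: the short keys, the `VENDOR_QUESTIONS` mapping, and the `open_questions` helper are hypothetical names introduced for illustration, and a blank answer is treated as unanswered.

```python
# Hypothetical short keys mapped to the vendor questions from this guide.
VENDOR_QUESTIONS = {
    "annex_iii_classification": "Is your system classified as 'high-risk' under Annex III of the EU AI Act?",
    "conformity_documents": "Can you provide the EU Declaration of Conformity and technical documentation?",
    "human_oversight": "What specific measures have you implemented for effective human oversight?",
    "bias_testing": "How have you tested for and mitigated bias in your datasets and algorithms?",
}


def open_questions(answers):
    """Return the keys of questions the vendor has not yet answered."""
    return [
        key
        for key in VENDOR_QUESTIONS
        if not str(answers.get(key) or "").strip()
    ]


if __name__ == "__main__":
    # Example answers for one vendor; the content is made up for illustration.
    answers = {"human_oversight": "Recruiter sign-off on every ranking", "bias_testing": "  "}
    for key in open_questions(answers):
        print("Still open:", VENDOR_QUESTIONS[key])
```

A tracker like this only records whether an answer exists. Judging whether the answer is adequate remains a task for HR, legal, and procurement reviewers.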

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination lawsuits.
