Unraveling AI in HR: Can You Terminate AI?

Why removing AI is harder and bloodier than implementation.

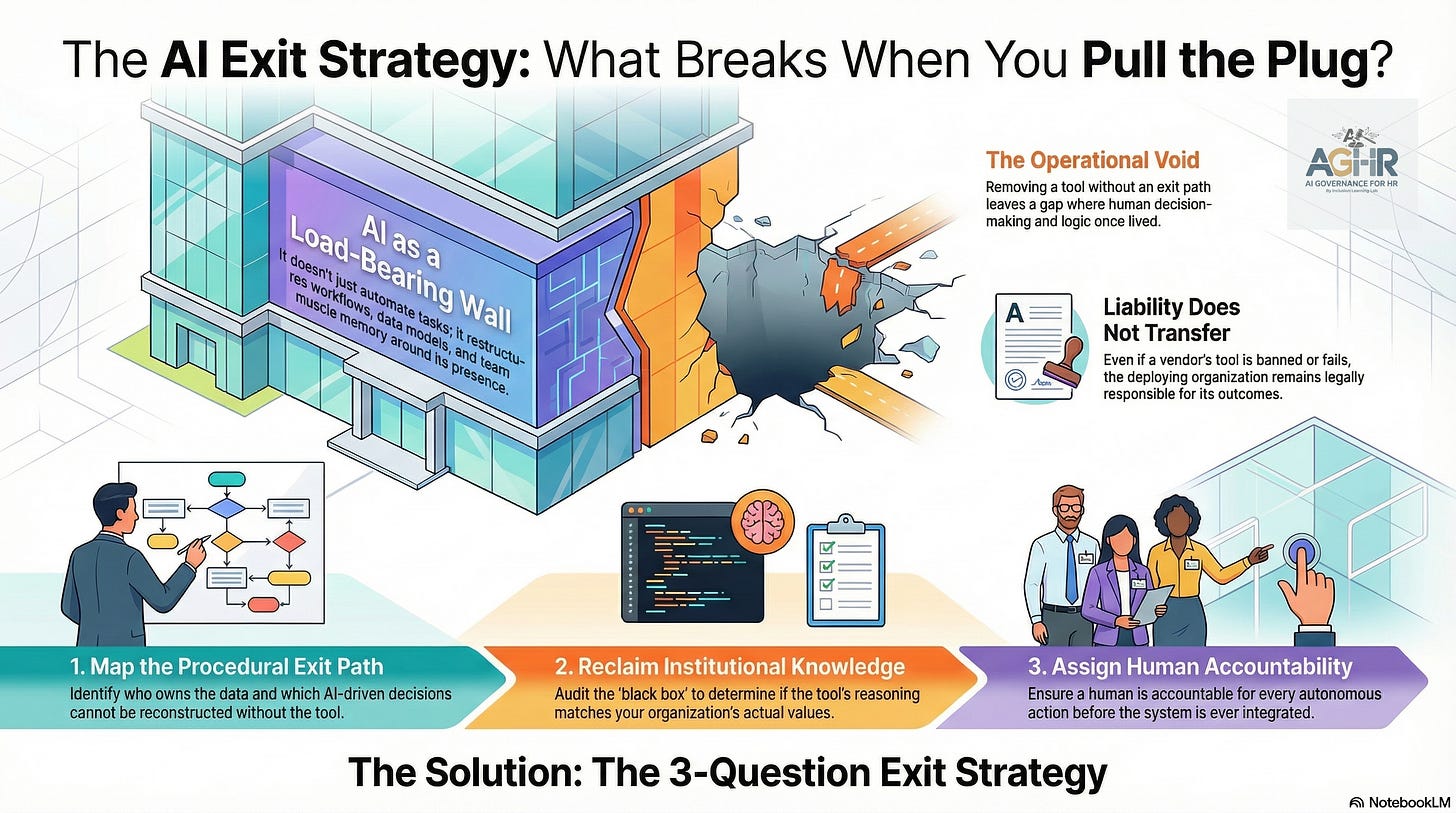

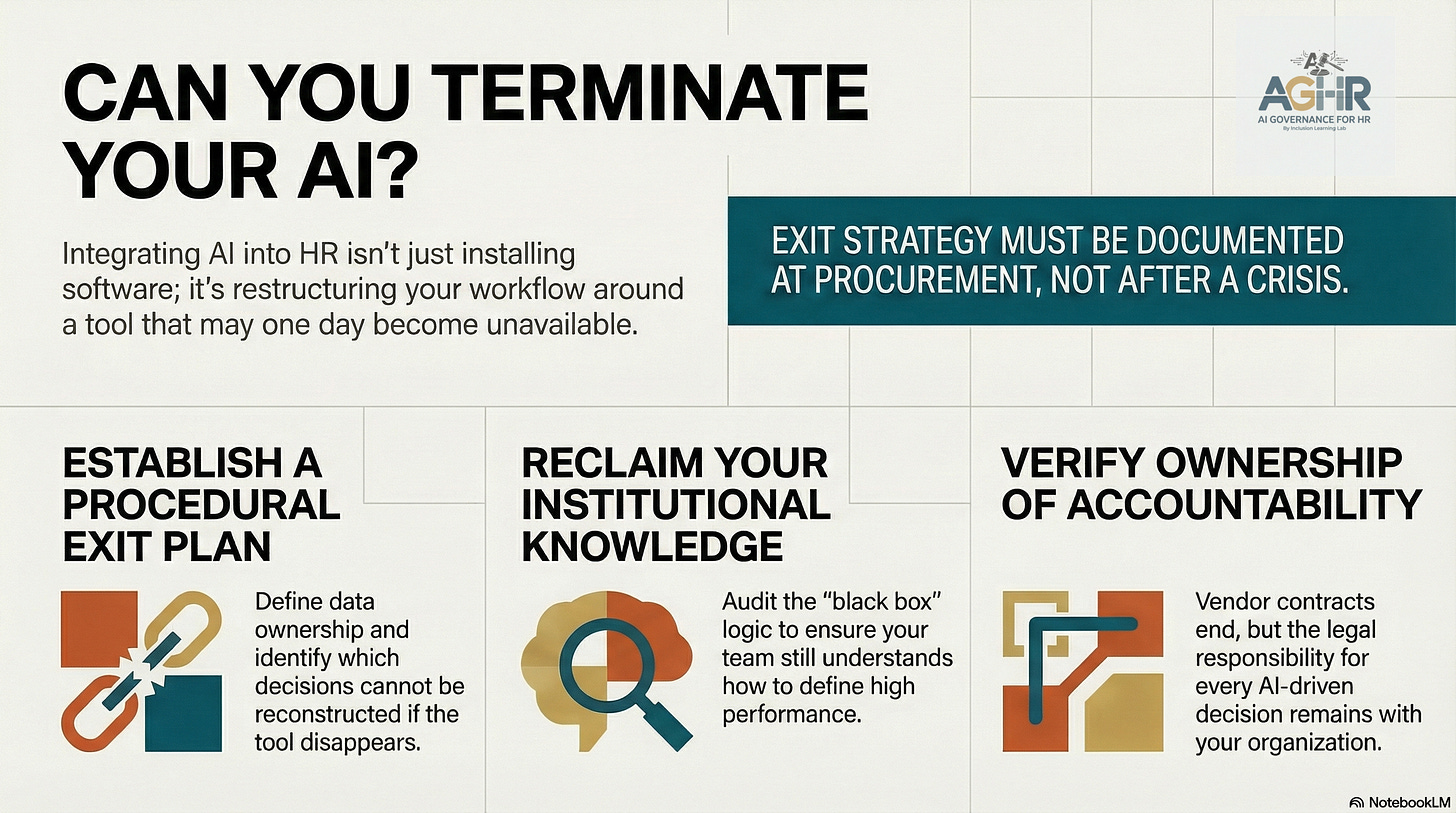

Removing an AI tool is not as simple as clicking "uninstall" or ending a subscription. In most organizations, the AI hasn't just automated tasks; it has reorganized the entire workflow around its presence.

The headlines changed quickly. The U.S. government signaled restrictions on Anthropic’s Claude AI tools. Agencies rushed to respond. Procurement offices sent memos. Compliance teams flagged risks.

And somewhere in an HR department, a recruiter looked up from their screen and asked a question that no policy memo had prepared them for.

“If we can’t use this anymore, how do we remove it without causing major disruption, and what happens to the institutional knowledge we’ve transferred to the AI agent?”

The Question Has Already Changed

There is a moment in every technology adoption cycle that passes quietly, without announcement or ceremony. It’s the moment when the question shifts from “Is this allowed?” to something much more uncomfortable.

“What breaks if we remove it?”

Put another way: once we have adopted AI and transferred our institutional knowledge to an AI tool or agent, what happens if we have to unravel it?

The new question is not a legal or compliance question. It is an architectural question about how deeply a tool has become part of the way work gets done.

And most organizations, if they are honest, cannot answer that.

Consider what happens when AI becomes embedded in a talent cycle. A recruiting platform scores resumes. A scheduling agent speeds up hiring timelines. A performance system provides ratings that influence succession plans and promotion decisions. Each of these tools was adopted independently, quietly approved, and celebrated during a rollout meeting.

But together, AI tools in HR have accomplished something important: they have restructured the workflow around their presence.

HR analysts know how to interpret AI outputs, but they now struggle to do the work without them. The institutional memory, including what “a strong candidate” looks like and what “high performance” means, has shifted into the model’s reasoning patterns. The model hasn’t just automated the process; it has become the process.

Now remove it.

The Workday Parallel

When Mobley v. Workday became the landmark AI bias case, the focus was on discrimination: whether an algorithm had systematically rejected qualified candidates on the basis of protected characteristics. That is the right conversation.

But buried within that story is a second, less visible, more systemic conversation.

What happens to an organization when the AI vendor that developed its hiring logic shuts down, gets banned, or is held liable?

Workday didn’t just sell a software license. Workday sold a decision framework. Thousands of organizations built their recruiting workflows, data models, and compliance assumptions around how Workday’s systems reasoned. When that reasoning is questioned, whether legally, ethically, or by regulators, the crisis isn’t merely about “finding a new vendor.”

The crisis is that we don’t understand what our data looks like without this lens. We do not know how to make these decisions without this scaffold.

That isn’t a technology problem; it’s a governance failure embedded at the time of adoption.

The Three Questions No One Asks Before Signing the Contract

Every organization deploying AI in talent, learning, or workforce systems should be required to answer three questions before they go live — not after a ban, not after a lawsuit, not after the vendor pivots:

1. What is the exit path? Not conceptually, but procedurally. Who owns the data? What format is it in? What decisions have been made by this system that cannot be reconstructed without it? If this tool disappeared tomorrow, what would your team no longer know how to do?

2. What institutional knowledge resides within this model? When your team stopped debating what defines a great hire and began trusting the score, something shifted. A judgment that once lived in a human mind (fallible, yes, but auditable) moved into a black box. Can you reclaim it? More importantly, was it the right judgment to begin with, or did you just automate bias at scale?

3. Who is accountable when the tool is no longer available? AI governance frameworks, including the EU AI Act, place obligations on the deploying organization, not only on the vendor: you remain responsible for the outcome. The vendor’s liability clauses do not transfer your legal responsibility to the vendor. If Workday’s algorithm discriminated, you are responsible. If a banned AI tool made 40,000 hiring decisions before the ban, those decisions are yours.

The vendor contract ends. The decisions do not.
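One way to make the three questions procedural rather than conceptual is to keep a small exit record per AI tool and refuse go-live while any answer is blank. The sketch below is only an illustration under assumptions: the `ExitPlan` class, its field names, and the example values are hypothetical and do not come from any HR platform or regulation.

```python
from dataclasses import dataclass, field


@dataclass
class ExitPlan:
    """Hypothetical exit record for one AI tool (all field names are assumptions)."""
    tool_name: str
    data_owner: str = ""        # Question 1: who owns the data?
    export_format: str = ""     # Question 1: what format is it in?
    # Question 1: decisions that cannot be reconstructed without the tool
    unreconstructable_decisions: list[str] = field(default_factory=list)
    # Question 2: judgments the team now delegates to the model
    embedded_judgments: list[str] = field(default_factory=list)
    accountable_person: str = ""  # Question 3: who answers for the outcomes?

    def gaps(self) -> list[str]:
        """List the questions that still have no documented answer.

        An empty `embedded_judgments` list is treated as "not yet documented".
        """
        missing = []
        if not self.data_owner:
            missing.append("data owner")
        if not self.export_format:
            missing.append("export format")
        if not self.embedded_judgments:
            missing.append("embedded judgments")
        if not self.accountable_person:
            missing.append("accountable person")
        return missing

    def ready_for_go_live(self) -> bool:
        """A tool is ready only when every question has an answer on file."""
        return not self.gaps()


# Example: a tool approved at a rollout meeting with nothing documented.
plan = ExitPlan(tool_name="resume-screener")
print(plan.gaps())
# ['data owner', 'export format', 'embedded judgments', 'accountable person']
```

The point of the design is that the check runs at procurement, so the gaps surface before the contract is signed rather than after a ban or a lawsuit.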

Unraveling Is Not the Same as Removal

Here is what most organizations discover too late: removing an AI tool from your infrastructure is not like uninstalling software. It is more like removing a load-bearing wall.

The AI agent did more than just automate tasks. It restructured dependencies. Your HRIS feeds it. Your ATS was calibrated around it. Your compliance reporting was designed around its outputs. Your team’s muscle memory was built in its presence.
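The load-bearing-wall problem can be pictured as a dependency graph: list which systems consume which outputs, then walk everything downstream of the tool you plan to remove. This is a minimal sketch, assuming a hand-maintained map; the system names in `FEEDS` and the `what_breaks` helper are hypothetical, not a description of any real HR stack.

```python
from collections import deque

# Hypothetical map: each key feeds the systems listed as its values.
FEEDS = {
    "hris": ["ai_agent"],
    "ai_agent": ["ats_ranking", "compliance_report", "recruiter_workflow"],
    "ats_ranking": ["interview_scheduling"],
    "compliance_report": [],
    "recruiter_workflow": [],
    "interview_scheduling": [],
}


def what_breaks(removed: str, feeds: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk over everything downstream of `removed`."""
    seen = {removed}  # guards against cycles leading back to the removed node
    affected = []
    queue = deque(feeds.get(removed, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        affected.append(node)
        queue.extend(feeds.get(node, []))
    return affected


print(what_breaks("ai_agent", FEEDS))
# ['ats_ranking', 'compliance_report', 'recruiter_workflow', 'interview_scheduling']
```

Note that the walk only covers systems. The team’s muscle memory does not show up in a graph like this, which is part of why the gap is easy to underestimate.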

Removing AI without a proper offboarding plan doesn’t bring you back to your previous state. Instead, it leaves a gap, a mental and operational void where the process once was.

This is why the governance conversation cannot begin with “what happens when something goes wrong.” It must start at procurement with a straightforward, non-negotiable principle:

No AI system is integrated into your ecosystem without a documented exit path. Before anything goes live, ask: what is the exit strategy?

What AI Governance in HR Actually Means Now

AI governance has become a term that feels like paperwork, but it should feel like architecture.

The organizations that will navigate bans, vendor shutdowns, regulatory changes, and algorithm failures are not the ones with the best policy documents. They are the ones who understood the dependency before it became hidden.

The ones who assigned a human to be accountable for every autonomous action.

The ones who built the kill switch before it was needed.
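To make the two ideas above concrete, a named accountable human and a kill switch, here is a minimal sketch of a wrapper around a single AI-driven step. It assumes the step has a manual fallback that was kept alive alongside the AI path; `GovernedStep`, `ai_screen`, `manual_screen`, and the 0.7 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GovernedStep:
    """Hypothetical wrapper: one AI-driven step, one named owner, one off switch."""
    name: str
    accountable_person: str
    ai_path: Callable[[dict], str]
    human_path: Callable[[dict], str]
    enabled: bool = True

    def kill(self) -> None:
        """Turn the AI path off; later cases go to the human fallback."""
        self.enabled = False

    def run(self, case: dict) -> dict:
        """Route the case and record who decided and who is accountable."""
        handler = self.ai_path if self.enabled else self.human_path
        return {
            "step": self.name,
            "decided_by": "ai" if self.enabled else "human",
            "accountable": self.accountable_person,
            "outcome": handler(case),
        }


def ai_screen(case: dict) -> str:
    # Stand-in for a vendor scoring call; the threshold is an assumption.
    return "advance" if case["score"] >= 0.7 else "hold"


def manual_screen(case: dict) -> str:
    return "queued for recruiter review"


step = GovernedStep("resume_screen", "Head of Talent", ai_screen, manual_screen)
step.kill()
print(step.run({"score": 0.9})["outcome"])  # queued for recruiter review
```

The switch is only useful if the human path still works on the day it is flipped, which is the organizational half of the design that code cannot supply.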

The U.S. government’s restriction on Claude is not just about national security or vendor selection. It is a live example of what every organization will eventually face on a smaller scale: a tool it relies on suddenly becomes unavailable, forcing a reckoning with how much it has allowed that dependence to grow. The dependency triangle.

The critical AI governance question is how dependent we have become on AI in HR and how we can successfully unravel that dependence.

The question is not whether this will happen to you.

The question is whether you will be able to answer: what breaks if we remove this AI from the HR system?

And whether you will have built something solid enough to survive that answer.

This article is part of an ongoing series on AI governance, workforce systems, and the human infrastructure behind intelligent technology.

About AI Governance for HR and AI CoLab Workspace for HR Leaders

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.