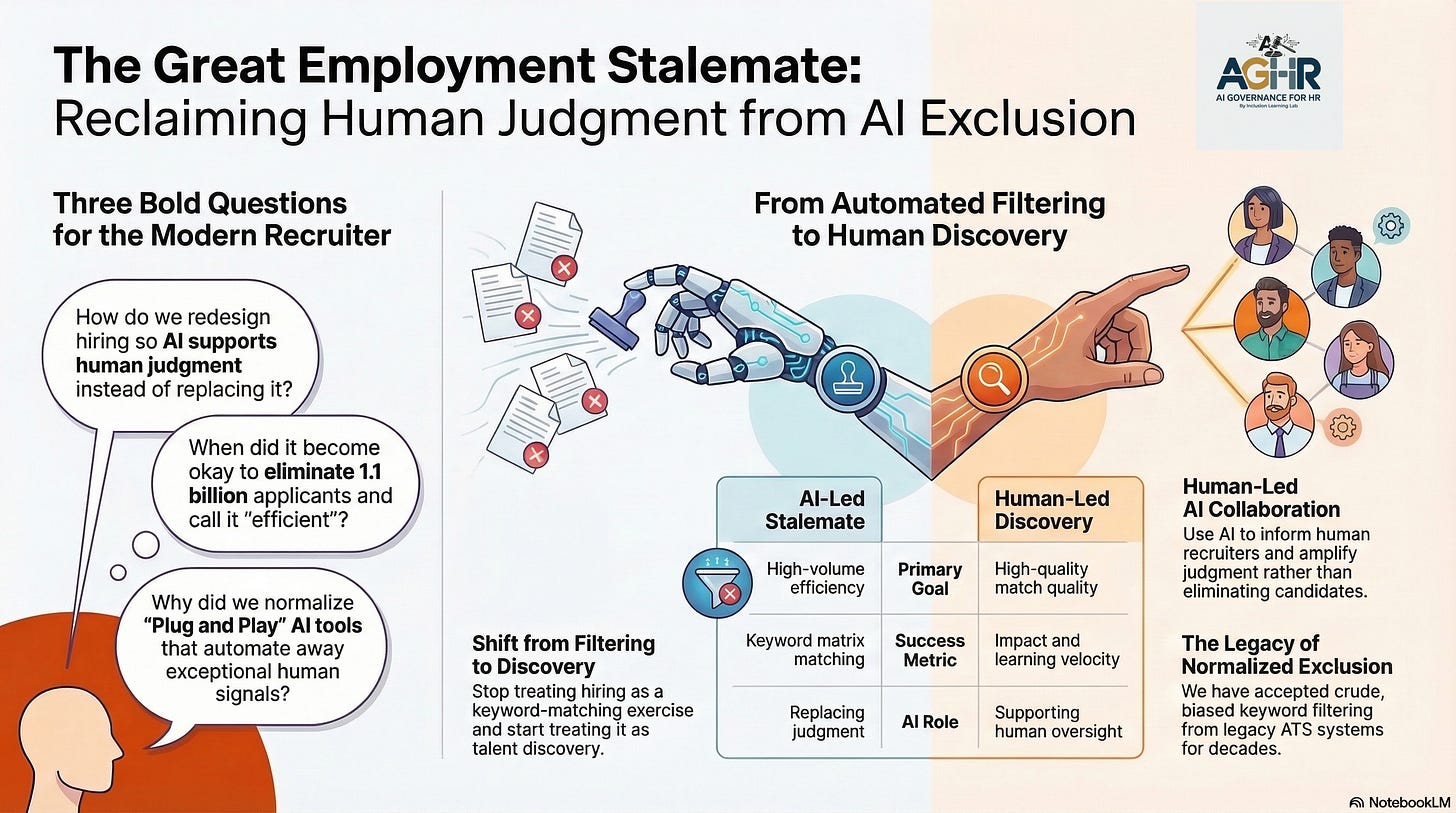

Why Did We Normalize Plug-and-Play AI Tools in HR?

We went looking for the shiny red ball, and we found it. The only problem is that the ball has now taken over our consciousness to the point where irrational decisions no longer feel irrational.

When did it become okay to reject 1.1 billion applications and treat the result as so efficient that it counts as normal?

The HR Conference Floor Moment - We Need to Find New HR Technology

Picture the scene. You are walking the expo floor at a major HR conference. The energy is electric. Vendors line every aisle, each promising a future where hiring is faster, smarter, and (finally, finally) free of human bias. You have spent years defending your budget, justifying your headcount, and watching your team drown in paperwork and ATS backlogs. Then a vendor pulls you into a booth and shows you a demo.

The AI screens a thousand resumes in seconds. It ranks candidates before you’ve had your first cup of coffee. It promises objectivity. It speaks your CFO’s language: efficiency, scale, cost reduction, and risk mitigation. You let out a breath you didn’t know you were holding.

You go back to your office. You open the contract and sign it.

Nobody asked the most important question in the room: What happens to the people on the other side of this system?

We Were Not Looking for Truth. We Were Looking for Relief.

Let’s be honest about what happened — not as an accusation, but as a reckoning. HR has been under siege for decades. Fewer resources. Higher volume. Intensifying legal pressure. The promise of AI wasn’t just attractive; it was oxygen.

We were the Optimizer, prioritizing speed over scrutiny. We were the Delegator, assuming the tech knew best, so we didn’t have to. We were the Compliant, following the process without questioning what it was actually doing to people. We were, in many cases, the Bystander — noticing something felt off and staying quiet because we had no formal language for what we were observing.

None of us believed we were doing harm, and that is precisely what makes this moment so dangerous.

When harmful outcomes feel normal, they stop registering as harm at all. They become part of the infrastructure. They become Tuesday.

The Normalization Trap: 1.1 Billion Applicants Should Have Stopped Everything

Workday’s own filings show that 1.1 billion applications were rejected by its algorithmic systems during the relevant period in the Mobley v. Workday case. One point one billion. Not one thousand. Not one million. More than a billion applications, each submitted by a human being with some measure of hope, with some version of themselves on paper, were filtered out, ranked down, and eliminated by a system that nobody audited, owned, or could fully explain.

And the industry did not stop. It accelerated.

That number did not become a scandal until a lawsuit gave it a name. Before Mobley, it was a benchmark, a proof point in a sales deck, and what efficiency looked like.

This is the Normalization Trap in its most complete form: when a system processes bias at sufficient scale and speed, it stops looking like discrimination and begins to look like operations.

AI Implementation: How Rational People Build Irrational Systems

Here is what makes failures in AI governance in HR so difficult to confront: no single person in the chain made a catastrophically wrong decision. Each step had its own internal logic.

Engineers built prediction models trained on historical hiring data because that was what they had. Talent leaders championed the tools because they promised to remove the human bias they had been criticized for. Hiring managers trusted the scores because they were told to and because trusting a score felt safer than trusting a gut feeling in a litigious environment. Legal reviewed the indemnification clauses and confirmed contractual compliance. Executives approved deployment because the vendor provided algorithmic fairness documentation, which seemed sufficient.

No one was the villain. Together, they built a system that discriminated against people based on race, age, gender, and disability — at scale, in silence, for years.

This is not a technology story. It is a systems story. In systems, the most dangerous failures are those that emerge from the gap between what each person believes they are responsible for and what the system is actually doing in aggregate.

IT deployed. HR approved. Legal reviewed. No verification.

The AI Implementation Seduction Has a Name: Speed

There is a specific failure pattern worth naming here because it appears in nearly every AI bias case on record. Call it the Speed Trap.

The organizational pressure to deploy always outpaces the institutional capacity to test. A Q3 deadline. A board presentation. A competitive intelligence report warns that your largest rival already has AI in its screening pipeline. The urgency feels real because it is — in the market's competitive logic, it is. But urgency has a cost that rarely appears on the deployment timeline.

No time for bias audits. No time for demographic testing. No time to ask: what does this system do to candidates who attended Historically Black Colleges? What does it do to applicants over 50? What does it do to people whose career paths look nonlinear because life is nonlinear?

The Speed Trap doesn’t just skip governance. It makes skipping governance feel responsible. Moving fast feels like leadership, while slowing down to verify feels like obstruction.

And so organizations went live with systems they did not understand, governing decisions they were no longer making, and about people they could no longer see.

The Data Told the Past. We Called It the Future.

The second failure pattern, hidden within the normalization story, is subtler and more insidious. Call it the Data Echo.

When AI systems are trained on historical hiring data, they learn whatever that data contains — including every bias, exclusion, and structural inequality that shaped who was hired before. An algorithm trained on ten years of promotions in a male-dominated leadership pipeline learns, faithfully and precisely, that leadership looks male. An algorithm trained on resumes from a workforce that has historically excluded certain demographics learns that certain demographic signals predict poor performance.

The machine is not making this up. It is telling you the truth — about your past. Then you deploy it to choose your future.

One study found that AI hiring tools favored white-associated names 85% of the time and female-associated names only 11% of the time. The cause was not that someone programmed bias; the training data reflected a world where that imbalance existed and was treated as normal. The Data Echo doesn’t amplify bad intentions. It amplifies the status quo. And when the status quo is unjust, amplifying it at an algorithmic scale isn’t neutrality. It’s acceleration.
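To make the Data Echo concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the records, the group labels, and the deliberately naive "model" are invented for illustration and do not represent any vendor’s system. It shows only the mechanism described above: a model that learns from past outcomes hands those outcomes back as scores.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (name_signal, was_hired).
# The counts are invented and encode a past imbalance on purpose.
HISTORY = (
    [("group_a", True)] * 80 + [("group_a", False)] * 20
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)


def train(history):
    """Learn the historical hire rate for each signal value."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for signal, was_hired in history:
        total[signal] += 1
        hired[signal] += int(was_hired)
    return {signal: hired[signal] / total[signal] for signal in total}


def score(model, signal):
    """Score a new candidate using only what the past data taught the model."""
    return model[signal]


model = train(HISTORY)
print(score(model, "group_a"))  # 0.8
print(score(model, "group_b"))  # 0.2
```

Two otherwise identical candidates receive different scores because the only thing the model knows is who was hired before. Real tools are far more complex, but a more sophisticated model trained on the same history can inherit the same pattern.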

We called this “predictive.” A more accurate term would be “reproductive.”

AI Governance: We Outsourced Accountability and Called It Partnership

There is a third layer to this story that the industry has been slow to confront, and it lies in the vendor relationship itself.

When companies deploy AI hiring tools, they enter into a legal and ethical arrangement that few fully understand at the time of signing. The vendor promises bias-free performance. The contract includes indemnification language that appears to shift liability. The HR leader, reasonably, assumes that the vendor’s fairness documentation means the fairness problem has been solved.

But courts are now establishing a different reality. In Mobley, the legal theory the court allowed to proceed was not the traditional employment agency theory; it was agency, with the vendor acting as the employer’s agent. Employers who delegate significant control over hiring to algorithmic systems remain responsible for what those systems do. The vendor is your legal agent. Their algorithm’s discrimination is your organization’s discrimination.

“Our AI is bias-free” is not governance. It is marketing.

And yet we normalized vendor assurance as a substitute for institutional accountability. We normalized the black box as an acceptable trade-off for speed. We normalized the Accountability Gap — where IT deployed, HR approved, Legal signed off, and nobody owned the outcome.

The result was not a lack of accountability. It was accountability distributed so thinly across so many functions that it effectively disappeared.

AI Bias on Scale: The People We Could No Longer See

Here is the piece that tends to get lost in the governance conversation, because governance talk tends to default to the technical and the legal. It deserves to be stated plainly.

On the other side of every algorithmic rejection was a person. A person who prepared. Who researched the company. Wrote a cover letter. Applied for your job with some version of hope.

The research shows that AI hiring tools using proxy variables such as educational background, geographic location, graduation year, and personality assessment results can infer protected characteristics from seemingly neutral data. A candidate who attended a Historically Black College. A candidate whose graduation year reveals their age. A candidate whose personality assessment captures a disability. These are not edge cases. They are patterns. And when a system processes those patterns at a scale of 1.1 billion rejections, the mathematical probability of discriminatory outcomes is no longer theoretical. It is structural.
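The graduation-year example is easy to sketch in Python. The function names and the typical graduation age of 22 are assumptions made for illustration; the age of 40 is the threshold at which federal age discrimination protection begins in the United States. The point is only that removing an "age" field does not remove age from the data.

```python
def estimated_age(graduation_year, current_year, typical_graduation_age=22):
    """Estimate age from a graduation year (assumes graduation at about 22)."""
    return current_year - graduation_year + typical_graduation_age


def likely_40_or_older(graduation_year, current_year):
    """Flag resumes whose graduation year implies an age of 40 or more."""
    return estimated_age(graduation_year, current_year) >= 40


# A resume with no age field still carries the signal.
print(estimated_age(1995, 2025))       # 52
print(likely_40_or_older(1995, 2025))  # True
print(likely_40_or_older(2020, 2025))  # False
```

A model never needs to compute this explicitly. If graduation year correlates with past outcomes, the model can use it as a stand-in for age without anyone asking it to.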

We built a system that could not see the person. Then we measured its success by how quickly it processed the invisibility.

AI Bias: Normalizing Was a Choice.

The most important thing to understand about normalization is that it is not something that happens to us. It is something we participate in, incrementally, through thousands of small decisions, each of which seems reasonable in isolation.

Signing the vendor contract without requiring independent bias testing was a choice. Approving deployment before the demographic analysis was complete was a choice. Trusting the algorithmic score without establishing an escalation path for frontline recruiters who noticed something wrong was a choice. These are not indictments. They are invitations — to look clearly at what we have built and to decide whether we want to keep building it.

ATS systems have been doing crude keyword filtering for more than twenty years with almost no scrutiny. That, too, was a form of normalization. AI has not created a new problem; it has inherited and scaled an existing one. The question is whether we use that clarity to finally address the root, or normalize the next layer of dysfunction and wait for the next lawsuit to name it.

Critical AI Governance Question We Have to Ask Before We Ask Any Other

Before any governance framework. Before any compliance checklist. Before we discuss the EU AI Act’s high-risk classification requirements or the EEOC’s expanded theory of vendor liability, there is a more fundamental question every HR leader owes themselves.

What did I believe I was protecting when I said yes to AI in HR?

Because the answer to that question reveals something important about what we must protect going forward.

If we said yes to AI tools because we believed we were protecting candidates from human bias, we need to reckon with the evidence that we may have replaced visible, contestable bias with invisible, scalable bias.

If we said yes to protect our teams from burnout, we need to ask what burden we transferred onto the people our systems could no longer see.

If we said yes to protect the organization from liability, we need to acknowledge the irony that the tools we deployed are now the source of the very liability we were trying to prevent.

None of these realities makes the decision wrong in its original intent. They make it consequential in ways that demand our attention now.

The Beginning of AI Accountability.

Let’s be clear: the answer is not to abandon AI tools. The answer is to stop treating them as decisions we make once and then forget. AI in hiring is not a procurement event. It is an ongoing governance responsibility — one that belongs to HR leaders, not to vendors, IT, or the contract.

That means demanding evidence, not assurances. It means building independent verification into every deployment timeline, regardless of quarterly pressure to move faster. It means ensuring that every AI system affecting a person’s livelihood has a human with the authority and obligation to override it. It means treating historical data as what it is, a record of what we have done, and auditing it before we use it to determine what we will do next.
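What might "evidence, not assurances" look like in practice? One common first check is to compare selection rates across groups using the four-fifths rule of thumb from the EEOC’s Uniform Guidelines on Employee Selection Procedures. The Python sketch below is a minimal illustration under stated assumptions: the group labels and counts are invented, the function names are hypothetical, and a flag is a prompt for closer review, not a legal finding.

```python
def selection_rates(outcomes):
    """Return each group's selection rate from (selected, applicants) counts."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def adverse_impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    if highest == 0:
        # Nobody was selected in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / highest for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """List groups whose ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Invented screening results: group -> (advanced by the tool, total applicants).
SCREENING_OUTCOMES = {"under_40": (300, 1000), "40_and_over": (150, 1000)}

print(adverse_impact_ratios(SCREENING_OUTCOMES))
print(flagged_groups(SCREENING_OUTCOMES))  # ['40_and_over']
```

A check like this takes minutes to run on real screening outcomes. It does not replace an independent audit, but it gives HR a number of its own to put beside the vendor’s documentation.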

Most of all, it means refusing to let scale and speed normalize harm.

1.1 billion is not a benchmark. It is a reckoning. The only question now is whether we treat it as such.

The Normalization Trap closes when we stop calling it AI efficiency and start calling it what it is. The first act of governance is the act of seeing clearly. That begins here.

About AI Governance for HR:

Margaret Spence, author of When AI Breaks the Law, operationalizes AI governance for HR leaders overseeing talent-cycle AI. Daily frameworks to close the gap between compliance and ethics—where lawsuits occur. Build governance systems that reduce legal and regulatory exposure before bias scales.