Woman's Talkspace Therapy Sessions Read in Court by Her Former Employer

How digital mental health apps operate in a regulatory gray zone and may not qualify as HIPAA-covered entities

There is a case making its way through the national conversation right now that every HR leader needs to read in full. Not skim. Read.

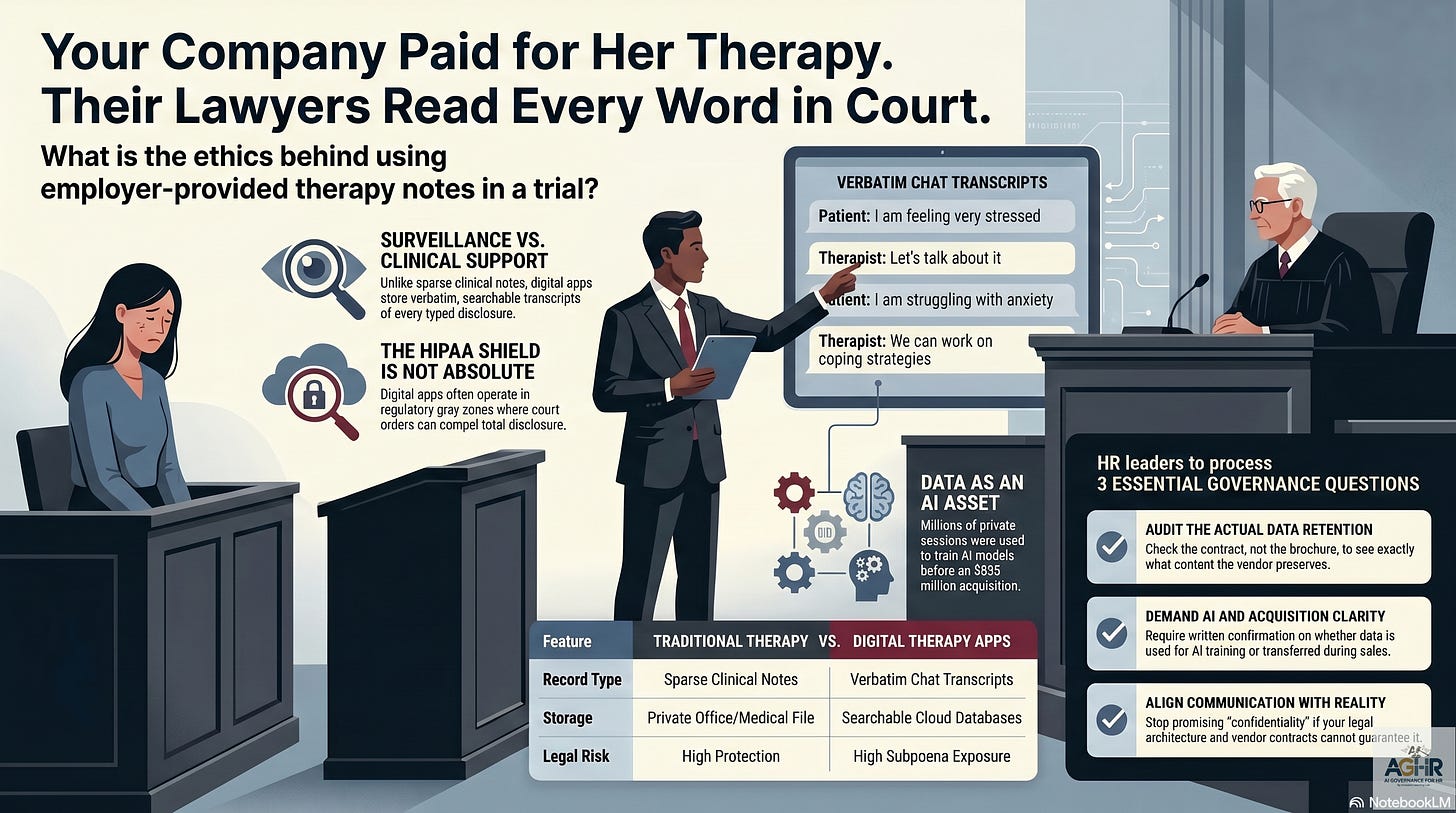

Her employer paid for her mental health support. She filed a pregnancy-discrimination lawsuit. The employer subpoenaed the records and then used them to win in court.

Are Privacy Rights Dead?

Jennifer Kamrass was a nurse practitioner at AdventHealth. She was terminated while nearly nine months pregnant. She sued for pregnancy discrimination. During litigation, AdventHealth’s legal team obtained a court order compelling Talkspace, a digital therapy platform offered through her employer’s benefits program, to produce the complete transcripts of her sessions.

Word for word. Every fear she typed. Every private disclosure she made in what she believed was a confidential space.

Those transcripts were admitted into the litigation. The discrimination claim was dismissed, and the court ruled in AdventHealth’s favor.

No one was held accountable for violating her privacy. (But did she have a privacy right?)

This case is not about termination or technology. It is a governance story. HR built the environment that made it possible.

Why This Is a Governance Crisis, Not Just a Tech or Termination Story

HR leaders are quick to frame data breaches and privacy violations as IT problems. This one cannot be handed off to IT.

You selected the vendor. You negotiated the contract. You included Talkspace or a similar platform in the benefits enrollment guide. You sent the all-hands email about psychological safety and whole-person well-being.

And then you never asked what would happen to those words in a courtroom.

That is a governance failure. Not an IT failure. Not a legal failure. A governance failure that began the moment HR chose a vendor without fully understanding the benefit’s underlying data architecture.

The gap between what HR promises employees and what vendor contracts actually guarantee is where Jennifer Kamrass fell. That gap exists in most organizations today, yet most HR leaders do not know its extent.

What HR Leaders Have Missed About Digital Therapy Platforms

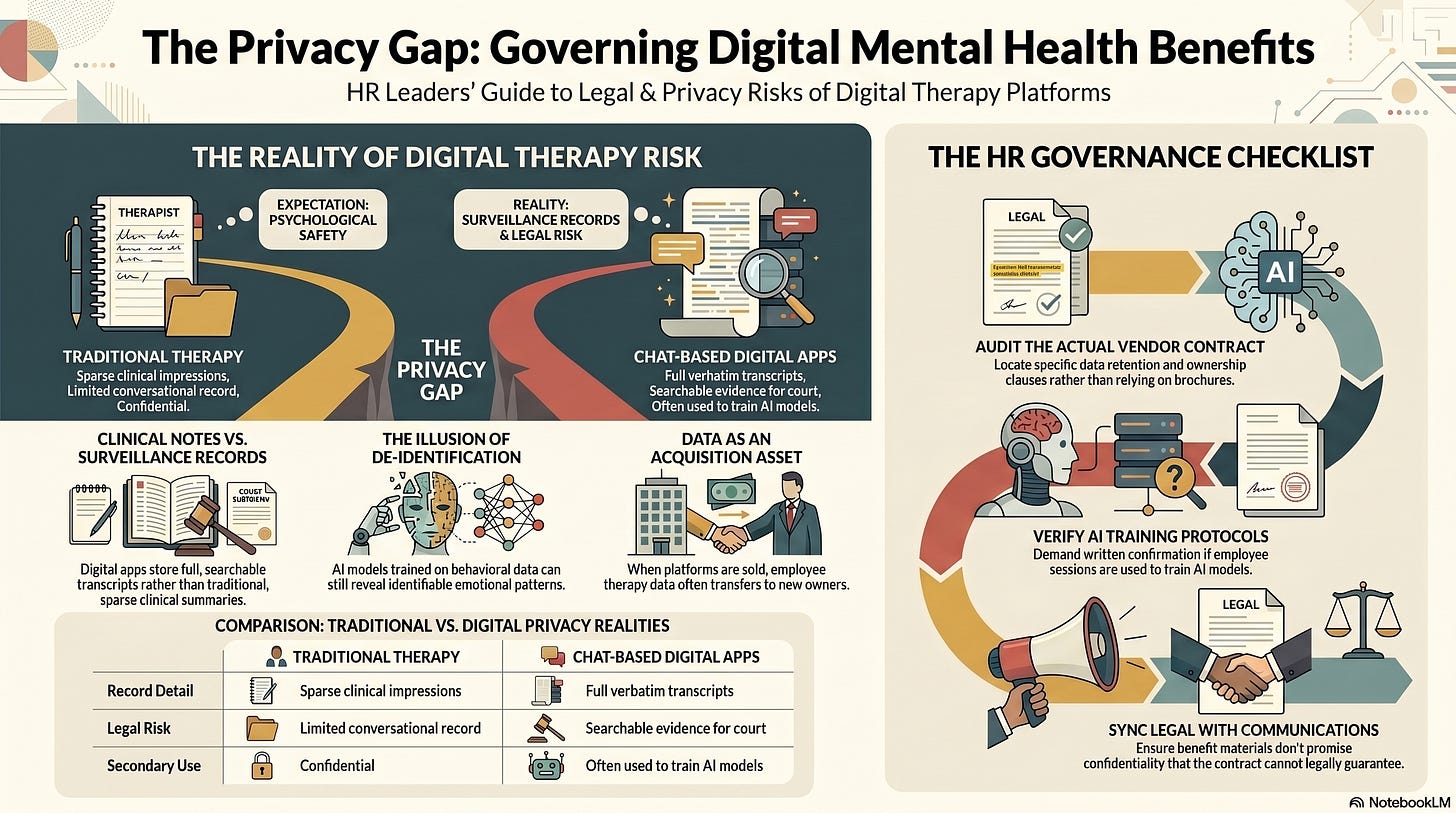

Traditional therapy notes are sparse. A clinician records an impression, a mood, and a clinical observation. The full conversation stays in the room.

Chat-based digital therapy platforms store everything. Every word the employee types. Every response from the therapist. The complete transcript is preserved, searchable, and retrievable.

That distinction is not minor. It is the difference between a clinical note and a surveillance record.

When a court orders that content be produced, it is not getting a summary. It is getting the raw, verbatim record of an employee’s most vulnerable moments. Those records can be read, excerpted, and used in litigation.

Most HR leaders who selected digital therapy platforms for their EAP were unaware of this. The vendor presentations did not lead with it, and the enrollment materials did not explain it. The benefit was positioned as support, not as a data asset with legal exposure.

Now you know. The question is what you do with that knowledge.

The AI Layer Makes Data Privacy Significantly Worse

The Kamrass case would be concerning enough as a standalone privacy and litigation story. But there is a second layer that HR leaders have yet to reckon with.

Talkspace has publicly disclosed that it is building large language models trained on what it describes as the industry’s largest behavioral health dataset, comprising millions of therapeutic interactions. Twelve years of employee disclosures are now powering an AI product.

The sessions are described as de-identified. Researchers who study behavioral data will tell you that de-identification is not a guarantee. Word patterns, emotional cadence, and the therapeutic arc of an individual’s disclosures over time can serve as identifying signals even without a name attached.

In March 2026, Universal Health Services agreed to acquire Talkspace for $835 million.

The dataset traveled with the company. The terms under which your employees consented to share their most private thoughts did not automatically transfer to a new owner. The data did.

Here is the question most HR leaders have never asked their EAP vendor: Are my employees’ sessions being used to train an AI model?

Here is the follow-up question almost no one asks: When you are acquired, what happens to that data under the new owner’s governance framework?

If you do not know the answers, you have a liability that you have not yet measured.

The Four Risk Management Lenses HR Leaders Must Apply Now

This case poses risk across four dimensions simultaneously. Each dimension demands a response.

Employee Trust and Psychological Safety

HR has spent years building psychological safety into a cultural priority. Campaigns, training, and benefit communications are all built on the premise that employees can seek help without fear.

This case shows how easily that premise collapses when the legal architecture beneath it has not been examined. Employees who learn that their therapy transcripts could appear in a courtroom will stop using mental health benefits. They will carry their burdens privately. The downstream effects on performance, retention, and culture are not theoretical. They are predictable.

The trust debt created by this case, and by every similar case that follows, compounds every day organizations fail to address it.

Vendor Selection and Data Governance

Most HR vendor due diligence for EAP and mental health platforms focuses on clinical quality, cost, and employee adoption rates. Almost none of it addresses data architecture.

How long does the vendor retain session content? Where is it stored? Who has access to it? Is it used to train AI models? What happens when they receive a subpoena? What happens when they are sold?

These questions were not included on most HR procurement checklists when these platforms were selected. They need to be included on every checklist from this point forward.

Legal and Compliance Exposure

HIPAA is often misunderstood as an absolute shield. It is not. Many digital mental health apps operate in a regulatory gray zone and may not qualify as HIPAA-covered entities in the traditional clinical sense. Even when HIPAA applies, court orders can compel disclosure.

Employment litigation often involves subpoenas for third-party records. The Kamrass case is not an anomaly. It is a preview of a pattern that will repeat as more employers adopt digital mental health platforms and more employment disputes end up in court.

The questions your General Counsel needs to answer: If an employee who uses our EAP platform files a discrimination or wrongful termination claim tomorrow, what records can opposing counsel subpoena from our vendor? What are the ethical parameters of using someone’s therapy notes in litigation? Is it scorched earth, or do we have a red line we will not cross?

If legal cannot answer those questions, you have an ethics and compliance gap that needs to be closed today.

Reputation and Ethics

There is a board-level question within this case that most organizations have not yet surfaced.

Is your organization comfortable sponsoring tools whose business model depends on collecting the most sensitive data your employees will ever generate?

Is the board aware that EAP platforms are developing AI products that use employee mental health disclosures?

Is that consistent with your stated values regarding employee dignity and data ethics?

These are not abstract questions. They are governance questions that belong in the CHRO’s report to the board.

Five Questions HR Leaders Must Ask This Week

Not next quarter. Not in the next benefits review cycle. This week.

1. What does your EAP vendor actually store?

Retrieve the contract, not the benefits summary. Use the actual contract language. Locate the data retention clause. Understand exactly what content the platform preserves, for how long, and in what form.

2. Is session content being used to train any AI model?

Ask this directly in writing and require a written response. “De-identified” is not a sufficient answer. Ask specifically which data is used, how de-identification is performed, what the re-identification risk assessment indicates, and who oversees the process.

3. What happens to employee data if your vendor is acquired?

This clause either appears in your contract or it does not. If it does not, your employees’ data can be transferred to a new owner under terms your employees never agreed to and your organization never reviewed. Add this to every vendor contract renewal and every new procurement process going forward.

4. How does your vendor respond to subpoenas and court orders?

Who makes the decision to produce records? What is the notification process? Does your organization receive notice before records are produced? Does the employee receive notice? What legal standards does the vendor apply before complying with a court order?

5. What are you telling employees about confidentiality that you cannot legally guarantee?

Review your benefit enrollment communications. Review your wellness campaign materials. Identify every place you have used the word confidential or implied that session content is protected. If those statements are not supported by the actual contract terms and the legal landscape, you have a communications problem and a trust problem. Both require correction.
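The review in question 5 can be partly automated as a first pass. The sketch below is a minimal, hypothetical helper, not a legal review tool: it flags sentences in your enrollment or campaign text that use confidentiality language so a person can check each one against the contract. The function name and the watch list of terms are assumptions made for illustration; extend the list with the phrases your own materials use.

```python
import re

# Hypothetical watch list (an assumption, not drawn from any contract or statute).
CONFIDENTIALITY_TERMS = ["confidential", "private", "anonymous", "protected", "secure"]


def find_confidentiality_claims(text, terms=CONFIDENTIALITY_TERMS):
    """Return (sentence, matched_terms) pairs for sentences using a watch-list term."""
    # Match each term at a word boundary, allowing suffixes such as "confidentiality".
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in terms) + r")\w*",
        re.IGNORECASE,
    )
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    claims = []
    for sentence in sentences:
        matched = sorted({m.group(1).lower() for m in pattern.finditer(sentence)})
        if matched:
            claims.append((sentence, matched))
    return claims
```

Run it over the text of each enrollment guide or wellness email and hand the flagged sentences to whoever owns the vendor contract. A keyword scan will miss implied promises, so it supplements a human read rather than replacing it.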

What HR Must Address About Data Privacy at the Organizational Level

Beyond the five immediate questions, this case requires structural action.

Conduct an emergency inventory of all digital mental health and well-being platforms in your benefits ecosystem. List every EAP vendor, teletherapy platform, coaching app, mindfulness tool, and chatbot. Identify which platforms store session transcripts versus minimal clinical notes. Categorize them by legal exposure risk.
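One way to keep that inventory consistent is to record the same few facts for every platform and derive the risk category from them. The sketch below is a minimal illustration under stated assumptions: the `Platform` fields, the three tier names, and the rule that an unanswered vendor question counts as a "yes" are all hypothetical choices, not a legal standard. Adjust them with your General Counsel.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Platform:
    name: str
    kind: str  # e.g. "teletherapy", "coaching app", "chatbot"
    stores_transcripts: Optional[bool]     # None = vendor has not answered yet
    trains_ai_on_sessions: Optional[bool]  # None = vendor has not answered yet


def exposure_tier(platform: Platform) -> str:
    """Assign a hypothetical exposure tier; unanswered questions count as 'yes'."""
    transcripts = platform.stores_transcripts is not False
    ai_training = platform.trains_ai_on_sessions is not False
    if transcripts and ai_training:
        return "high"
    if transcripts or ai_training:
        return "medium"
    return "low"


def inventory_by_tier(platforms):
    """Group platform names by exposure tier."""
    tiers = {"high": [], "medium": [], "low": []}
    for platform in platforms:
        tiers[exposure_tier(platform)].append(platform.name)
    return tiers
```

Treating an unanswered question as a "yes" is deliberate in this sketch: a vendor that has not confirmed in writing what it stores stays in the higher tier until it does.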

Establish a position on the use of benefit-derived mental health data in litigation. Work with your General Counsel to define your organization’s policy. The Kamrass case shows that employers can legally seek therapy records in employment disputes. The question is whether your organization will. That decision should be made deliberately at the leadership level and documented in your ethics governance framework.

Build AI governance into every benefits vendor due diligence process going forward. This is not optional and is not an IT responsibility. HR selects these vendors and governs these vendor relationships. AI governance for benefits procurement falls under HR.

Brief your leadership. The CHRO, the C-suite, and the board need to understand this case, its implications, and your organization’s current exposure. Prepare a one-page briefing and present it. Do not wait for a lawsuit to start the conversation.

The Question That Cannot Go Unanswered

Jennifer Kamrass sought help from a system her employer built for her. She was nearly nine months pregnant, fighting to protect her livelihood, doing exactly what HR programs are designed to encourage.

She trusted the room.

The room was not safe.

Every HR leader reading this has employees who are, right now, typing their fears into an employer-provided platform. They believe the room is safe because HR told them so.

If your employees knew their therapy chats could be disclosed in a courtroom, would they still feel psychologically safe in your organization?

If the answer is no, the problem is not the app. The problem is the governance you have not yet built around it.

You have the tools to close this gap. The only question is whether you act before your organization becomes the next case study.

Or a risk management article.

About AI Governance for HR & CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. Our CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.