Are We Asking the Wrong Questions About AI in HR?

These 25 Questions Will Change How You Manage AI in HR Forever.

There is a conversation underway in every HR department right now. It sounds like a strategy. It looks like progress. Leaders are announcing AI initiatives, deploying new tools, and celebrating efficiency gains.

But beneath the enthusiasm lies a quieter, more unsettling truth: most organizations are moving fast in the wrong direction — and nobody is asking the questions that would reveal it.

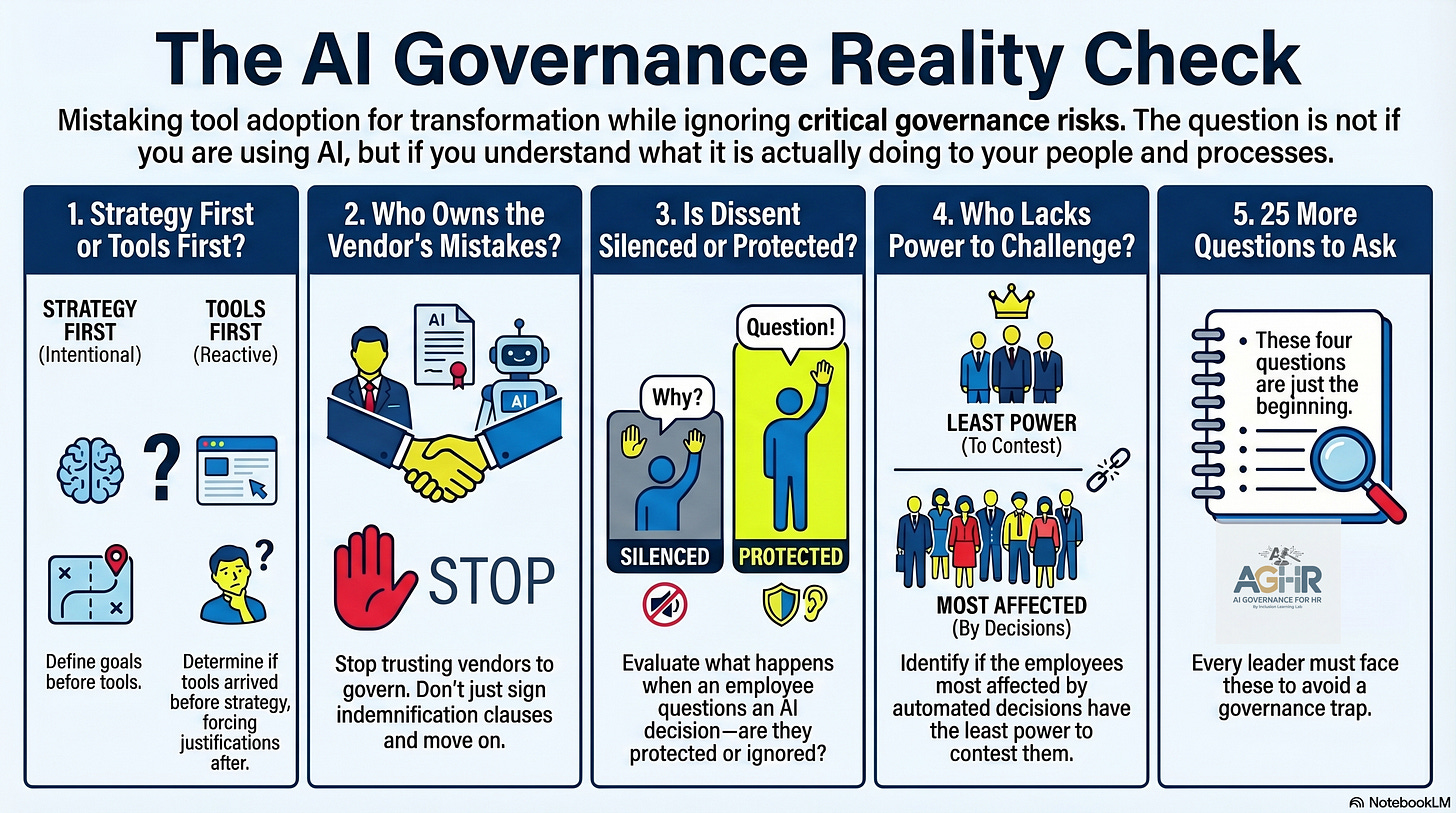

We have confused activity with strategy. We have mistaken tool adoption for transformation. And in the gap between what we think we’re doing with AI and what is actually happening inside our organizations, governance trapdoors are quietly opening.

This is not a technology problem. It never was.

It is a failure of leadership alignment and change management.

Most HR leaders won’t recognize the failure until they’re sitting across from legal counsel, explaining why their AI system has become the centerpiece of a discrimination lawsuit.

The question is not whether your organization is using AI. The question is whether you understand what your AI is actually doing within your processes, to your people, and to the human beings on the other end of every automated decision.

Before you build another AI roadmap, sign another vendor contract, or announce another transformation initiative — stop.

Ask yourself whether you’ve been asking the right questions about AI in HR.

The 25 Questions HR Leaders Are Not Asking About AI — But Should Be

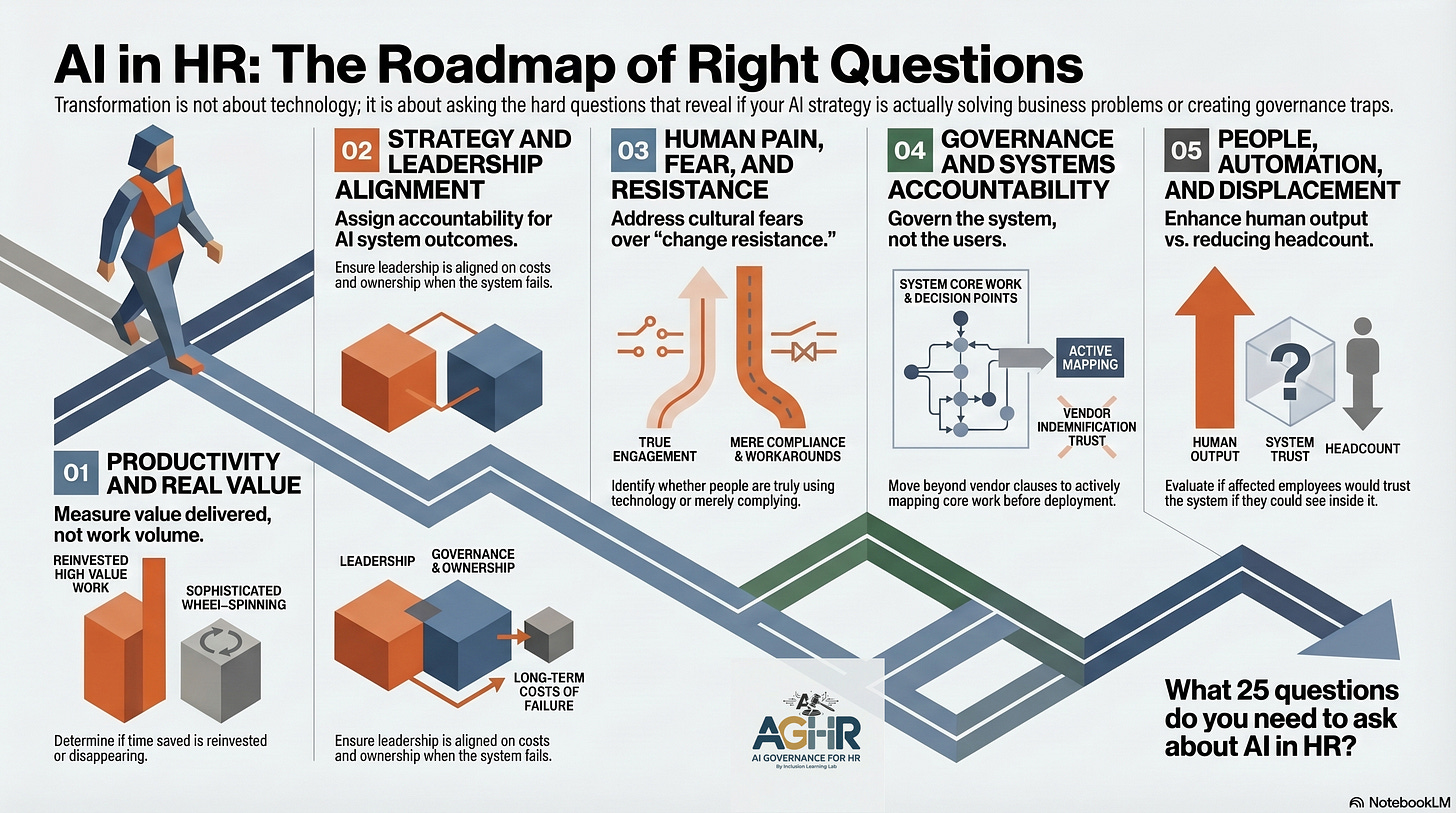

On Productivity and Real Value

Are we saving time with AI — or have we simply created a more sophisticated way to spin our wheels?

What specific business problem were we trying to solve when we deployed this AI tool — and has that problem been solved?

Are we measuring work volume or the value AI delivers to the bottom line? And do we know the difference?

If we removed our AI tools tomorrow, would our outcomes worsen — or would we discover we’ve been measuring activity that never moved the needle?

Have we evaluated whether the time saved by AI is reinvested in higher-value human work — or is it simply disappearing?

On Strategy and Leadership Alignment

Did we have an AI strategy before we had AI tools — or did the tools arrive first, with the strategy following to justify them?

What do we fund when it comes to AI? Not what we announce — what do we actually resource, staff, and sustain?

Are our senior leaders aligned on the costs of AI governance — in time, money, and difficult decisions — or only on what AI promises to deliver?

Who owns the outcomes of our AI systems? Not the vendor. Not IT. Who in this organization is accountable when the system gets it wrong?

Does our AI strategy include a change management strategy — or did we deploy technology into an unchanged human system and expect different results?

On Human Pain, Fear, and Resistance

What is the real pain our organization was trying to solve — and is AI the right solution for that pain, or is it simply the most readily available one?

Where is the fear of AI in our organization, and have we fueled that fear or simply managed around it?

What are the real barriers to AI adoption in our culture? Have we named them honestly, or have we reframed them as “change resistance” to avoid accountability?

Are our people actually using the technology — or are they merely complying with our expectations while working around the system entirely?

What happens in our organization when someone questions an AI decision? Is that person protected — or silenced?

On Governance and Systems Accountability

Have we evaluated the AI system itself — or have we simply raised expectations for its users and called that governance?

Do we know what our AI is doing in unstructured work environments — the roles, workflows, and decision points that were never clearly defined before the technology arrived?

How do we map the core work our AI touches — and have we done that mapping before deployment, or only after something goes wrong?

When was the last time someone in our organization slowed an AI deployment due to a governance concern? If the answer is never, what does that tell us?

Are we governing AI — or are we trusting vendors to govern it for us while we sign the indemnification clause and move on?

On People, Automation, and Who Gets Left Behind

Are we using AI to enhance our people’s output, or are we using AI to reduce headcount and calling it efficiency?

What is our honest view of AI automation and workforce displacement — and have we said it out loud to those it will affect?

Who in our organization is most vulnerable to being left behind by AI adoption — and do we have a plan for those people, or just a plan for the technology?

Are the employees most affected by our AI decisions the same employees who have the least power to challenge those decisions?

If the people our AI makes decisions about could see inside our system, would they trust it? Would we?

Your Answers Reveal Whether Your AI in HR Strategy Is Real — Or Just a Story You're Telling Yourself.

Here is what I know after more than four decades of working at the intersection of talent, risk, and organizational systems:

The organizations that will be talking about AI governance in three years are not the ones deploying the most tools today. They are the ones willing to pause right now and ask whether they actually know what those tools are doing.

Most won’t stop. The pressure to move fast is too loud. The fear of falling behind is too strong. And the questions that matter most are the same questions that slow everything down before they speed it back up again.

But some will. And those are the leaders I want in the room.

Live AI Governance CoLab for HR Leaders:

Every Wednesday, I will be hosting the AI Governance CoLab, an open discussion for HR leaders who are done pretending the questions don’t exist and are ready to work through what they actually mean for their organizations.

No slides. No scripts. Just the conversation the industry needs to be having.

Are you in?

Join the AI Governance CoLab Discussion:

Wednesday, April 15th at 2 pm EST – a 90-minute open discussion on AI governance and risk. This is not a webinar; it is an open discussion about AI for HR. The sessions will not be recorded. You can join at any point in the discussion and hop off as your schedule permits.

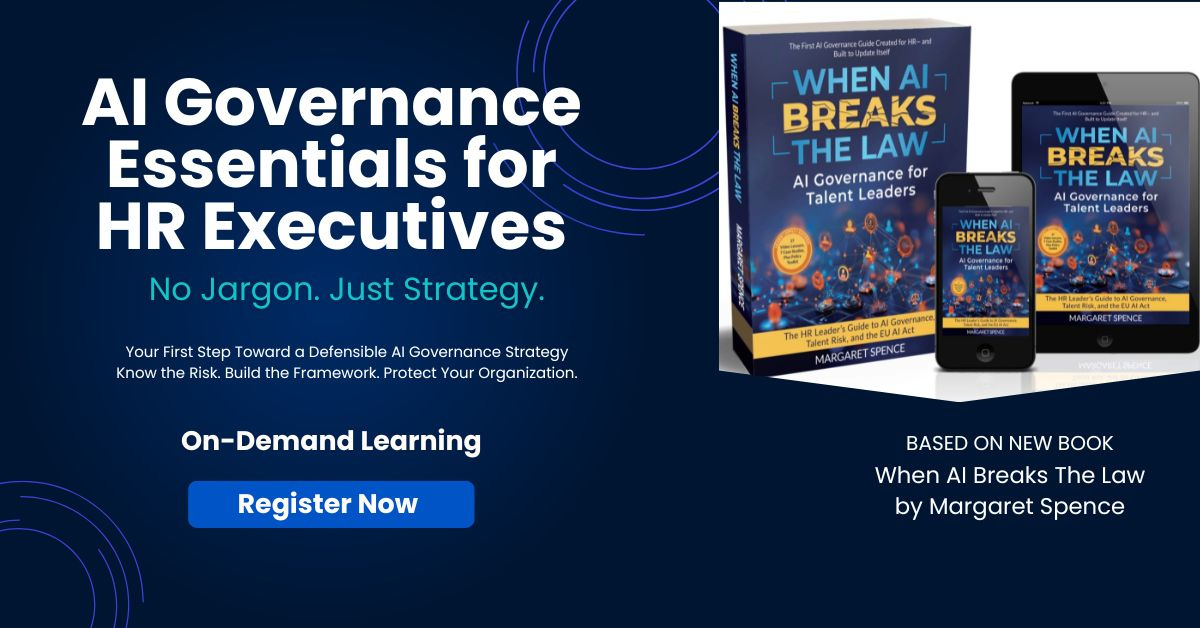

Do You Need AI Governance Training for Your HR Team?

Start with Module One Free:

By the end of this workshop, you will be able to answer the questions every General Counsel is now asking HR:

Can you defend how your AI systems made that decision?

Do your managers understand that every AI-generated summary of a sensitive conversation may now be discoverable in litigation?

Would your current governance withstand scrutiny — or expose the gap?

About AI Governance for HR & CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. Our CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.