The AI Impostor Syndrome: How HR Leaders Are Failing at AI Governance & Strategy

What happens when the people leaders in charge of overseeing AI in your organization don't actually understand what they're governing — and are too afraid to admit it?

There’s something going on in HR leadership meetings globally that nobody dares to admit…

An HR leader attends a meeting about algorithmic bias in recruitment. The data scientist explains false negative rates and disparate impact. Everyone nods thoughtfully. Then the HR leader asks a question—something about whether the algorithm can “just pick better candidates.”

It’s not a stupid question. But it’s the wrong one. And worse, they know it’s not the right one. Still, they ask it anyway. Because in that room, with that silence, and everyone looking confident and sure, they feel the cold panic of not knowing what they don’t know.

This is impostor syndrome in AI governance and strategy, and it’s not the mild, self-doubt kind. It’s something more serious and riskier.

Let’s talk about AI impostor syndrome in HR: impostor syndrome on steroids.

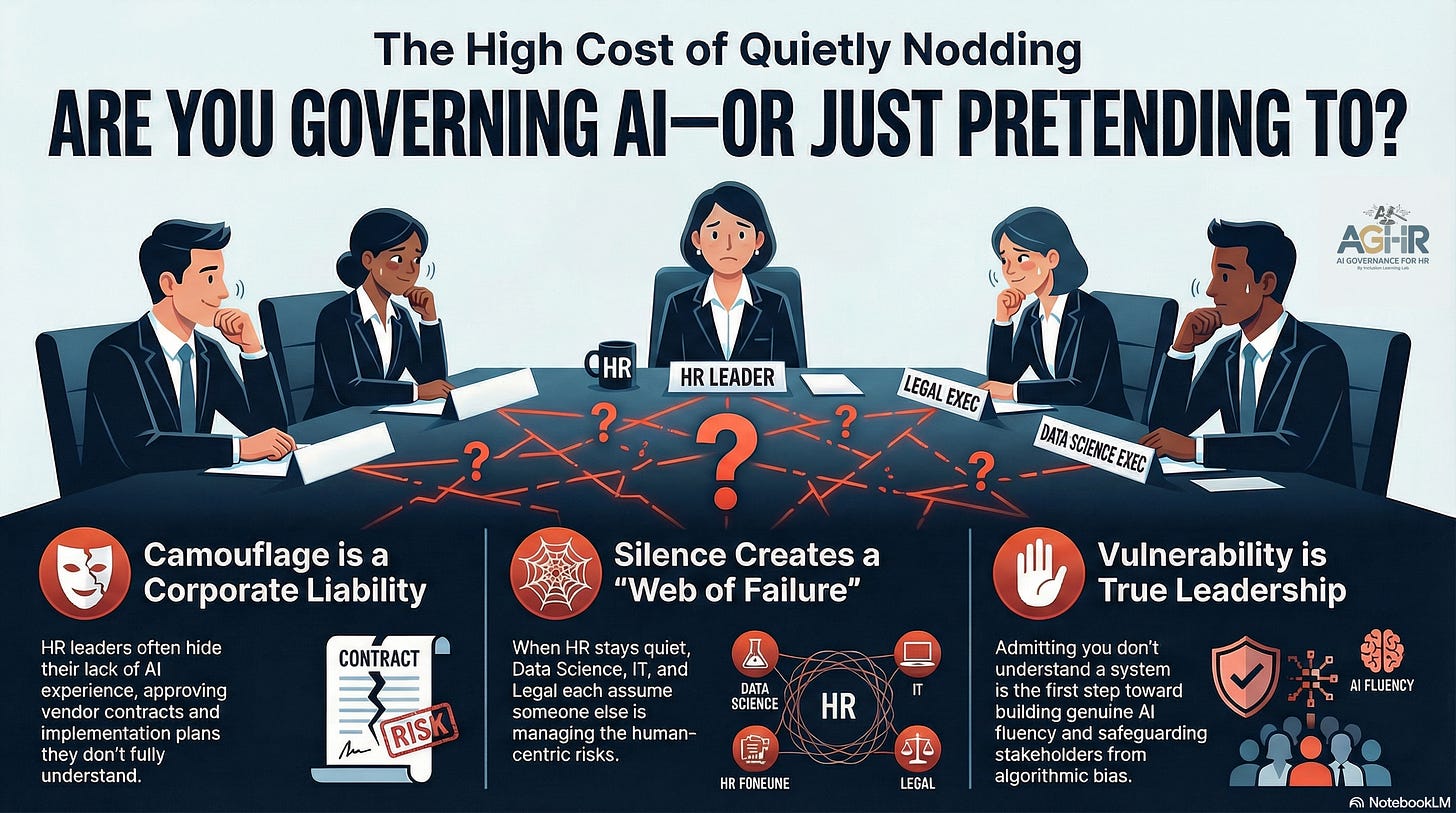

The Real Problem Isn’t Confidence. It’s Camouflage.

Impostor syndrome receives a lot of attention in HR circles. It’s often viewed as a personal issue—something you resolve with therapy, affirmations, or enough promotions to make you feel like you truly belong. But that’s not the case with AI governance in HR.

This is different. This is systemic pretending.

HR professionals excel at shaping perception. We’ve spent years refining this skill. We make decisions seem purposeful. We make processes appear fair. We make culture feel cohesive. That’s not manipulation—it’s simply part of the job. We create the frameworks that make organizations feel secure, even when they’re not.

But when it comes to AI governance, that same instinct can become a liability.

Because HR leaders lack decades of experience managing algorithmic systems, we don’t have mental models for how bias remains hidden in data pipelines. We don’t understand the language of machine learning or statistical testing, and we definitely don’t have peer networks of other HR leaders who have figured this out before us. We’re all learning as we fly the plane, hoping not to make a fatal mistake.

So instead of acknowledging that gap, we do what HR does best: we hide it.

We ask questions that sound knowledgeable. We trust the data science team with a confident nod. We approve vendor contracts without fully understanding the risks. We skip the meeting about bias testing because someone else will probably handle it. We say “yes” to AI implementation plans because admitting “I don’t understand this” feels risky—like admitting we’re not prepared to protect people from harm.

And the truly insidious part? Nobody notices, because everyone else in the room is doing the same thing.

The data scientist believes HR will manage the governance side. The CFO thinks IT has control over risk. Legal assumes HR understands what they signed. And HR? HR is quietly hoping someone else has a plan.

This isn’t just impostor syndrome. It’s a web of failure.

Here’s What Happens When We Hide the AI Knowledge Gap

Let me be direct: when HR leaders act like they understand AI governance, people get hurt.

Not eventually. Not in theory. Right now.

Your biased resume-screening algorithm rejects qualified candidates from specific demographic groups. Your performance management AI flags women with caregiving responsibilities as flight risks and steers them away from leadership development. Your internal mobility system suggests promotions in ways that reflect the demographics of your current leadership team—meaning that historical underrepresentation becomes a permanent exclusion.

And none of this sparks a conversation because no one with authority felt confident enough to ask the tough questions.

The Workday case highlighted this issue in a way that’s hard to ignore. But Workday isn’t the only company with this exposure; it’s just the one that faced a lawsuit.

· How many other systems are currently sorting, screening, and predicting in ways that appear to be merit-based but actually reproduce biases from your historical data?

· How many go unnoticed because the person who should ask questions chooses to stay quiet?

This is the elephant in the room. It’s not just that HR leaders lack enough knowledge about AI; it’s that we’re not confident enough to say so. Can you honestly say, ‘I don’t know anything about this area of AI’ without feeling incompetent? Can you dispel the myth that HR has all the answers and admit that you’re not yet competent in AI, that you’re just faking it until you make it?

The Permission You Need to Hear: It’s Okay Not to Know Everything About AI

You are not alone in this.

Not even a little bit.

STOP pretending that you have everything figured out, because you don’t.

If you’re an HR leader and you don’t fully understand AI governance, that’s not a flaw. It’s a sign that the field has advanced faster than the education system could adapt. There is no HR curriculum that prepared us for this, and no peer network that solved this problem first and can simply hand us the answer. We’re all figuring this out in real time.

But here’s what matters: figuring it out is possible. Not knowing isn’t the same as being unable to learn. Admitting the gap isn’t the same as staying in it. Be vulnerable and accept that you can’t keep pace with a technology advancing at lightning speed. One-time competency isn’t the answer; continuous learning is.

Impostor syndrome fades when you stop mimicking AI expertise and start building genuine AI fluency, including the governance knowledge you need to safeguard your stakeholders and your organization.

To break the cycle of AI impostor syndrome, HR leaders need AI governance mentors both inside and outside the organization. Mentors who can guide us and challenge outdated thinking so we can progress toward tomorrow’s reality.

Five Steps to Find Your AI Governance Mentor

You can’t handle this on your own, and honestly, you shouldn’t try.

A mentor isn’t someone who has all the answers. A mentor is someone who’s a few steps ahead, who remembers what it felt like to not know, and who can help you ask the right questions without judgment. They’re the person you can say this to: “I don’t understand this. Will you help me figure it out?”

1. Map the expertise within your ecosystem

Look around your organization. Who understands both HR and AI?

It might not be obvious. It could be a data scientist who used to work in talent. A compliance officer who’s been through algorithmic audits. An operations person who pushed back on a vendor implementation. Start conversations with people who have had to sit at the intersection of people and systems.

If nobody like that exists in your organization, look nearby: your industry association, your vendor partners, and peer CHROs at companies that are further ahead. LinkedIn and professional networks are your allies here.

2. Make it safe to be honest

The person you choose as a mentor needs to understand that you’re starting from a place of genuine not-knowing. Not because you’re lazy or incurious, but because the expertise literally didn’t exist ten years ago. Frame the conversation that way: “I’m committed to governing AI responsibly in our talent decisions, and I need to level up. Will you help me understand where the gaps are?”

People respect that. They will mentor someone who is willing to be vulnerable and committed to the work.

3. Ask for a structured learning pathway

Don’t just grab coffee and hope something helpful happens. Ask your mentor for a sequence: “What do I need to understand first? What comes next? How do I know I’m getting better?” Structure matters because it turns vague anxiety into clear milestones, for example: “By June, I can read a vendor’s bias audit report and identify red flags.”
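
One red flag worth learning to spot in a bias audit report is a low impact ratio. Here’s a minimal Python sketch of the four-fifths rule of thumb from the EEOC Uniform Guidelines: a group whose selection rate falls below 80% of the highest group’s rate is generally treated as showing evidence of adverse impact. The numbers and group labels are invented for illustration, and the function names are my own. Treat it as a way to learn the vocabulary, not as a legal test or any vendor’s actual method.

```python
from collections import Counter

FOUR_FIFTHS = 0.8  # rule-of-thumb threshold from the EEOC Uniform Guidelines


def selection_rates(records):
    """Return {group: share of applicants selected} from (group, selected) pairs."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no one was selected, so ratios are undefined")
    return {group: rate / highest for group, rate in rates.items()}


# Invented screening outcomes: 100 applicants per hypothetical group.
records = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(records)
ratios = impact_ratios(rates)
flagged = sorted(g for g, r in ratios.items() if r < FOUR_FIFTHS)

for group in sorted(ratios):
    print(f"{group}: selected {rates[group]:.0%}, impact ratio {ratios[group]:.2f}")
print("Below the four-fifths threshold:", flagged)
```

If a vendor’s report shows a ratio like this and nobody in the room asks about it, that’s your cue. A low ratio doesn’t prove discrimination on its own, but it’s exactly the kind of number a mentor can teach you to question.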

4. Practice in safe spaces first

Before making the final decision on a new AI system, ask your mentor to help you run practice scenarios. Sit in on their team's vendor evaluation. Attend a bias audit. Shadow the process so you understand what questions are asked, what data is important, and what decisions look like when they’re carefully made.

Small, low-pressure practice builds confidence. By the time it counts, you’ll have already done it a few times.
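
One question you may hear in a bias audit is whether the system wrongly rejects qualified people more often in one group than in another. That’s the false negative rate, compared by group. Here’s a minimal Python sketch with invented data. It assumes someone has already labeled which candidates were actually qualified (for example, through human review), which in practice is often the hardest part. The record layout, group labels, and function name are hypothetical.

```python
from collections import defaultdict


def false_negative_rates(records):
    """Return {group: share of qualified candidates the system rejected}.

    Each record is (group, is_qualified, was_advanced).
    Unqualified candidates are ignored: they cannot be false negatives.
    """
    qualified = defaultdict(int)
    missed = defaultdict(int)
    for group, is_qualified, was_advanced in records:
        if is_qualified:
            qualified[group] += 1
            if not was_advanced:
                missed[group] += 1
    return {group: missed[group] / qualified[group] for group in qualified}


# Invented outcomes: each hypothetical group has 40 qualified candidates.
records = (
    [("group_a", True, True)] * 36 + [("group_a", True, False)] * 4
    + [("group_a", False, False)] * 60
    + [("group_b", True, True)] * 28 + [("group_b", True, False)] * 12
    + [("group_b", False, False)] * 60
)

fnr = false_negative_rates(records)
for group in sorted(fnr):
    print(f"{group}: {fnr[group]:.0%} of qualified candidates were rejected")
```

In this made-up example, qualified candidates in the second group are rejected three times as often as those in the first. You don’t need to write this code yourself; you need to know the question exists so you can ask who has run the numbers.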

5. Join a community of practice

You also need peers. Not mentors—people at your level who are facing the same challenges. A community might be a peer group you create with other HR leaders in your network, a formal program or workshop, or even an online space where people are openly discussing AI governance issues.

The shame fades away when you realize you’re not the only one asking simple questions.

Dear HR Leader: You’re Not the Only One in the Room Pretending

That moment when someone asks an uninformed question in the governance meeting? That’s not a sign of incompetence. It’s a sign of honesty. That’s the start of change.

Impostor syndrome disappears when you realize that admitting you don’t know is actually the most responsible thing you can do.

Because the alternative—staying quiet, nodding along, hoping someone else catches the bias before it becomes a lawsuit—isn’t confidence. It’s negligence.

Here’s what I want you to do: identify one area of AI governance that genuinely makes you uncomfortable. One system you’ve approved, or one that’s upcoming, where you lack confidence in the oversight.

Now find someone who can help you understand it better. And tell them the truth: “I need to get smarter about this, and I need help.”

That’s no longer impostor syndrome.

That’s true leadership.

What’s one AI governance challenge you’re currently struggling with that you haven’t felt comfortable admitting to anyone?

Share it in the comments—anonymously if you’d like. You’re probably not the only one.

Start with Module One Free:

By the end of this workshop, you will be able to answer the questions every General Counsel is now asking HR:

· Can you defend how your AI systems made that decision?

· Do your managers understand that every AI-generated summary of a sensitive conversation is now discoverable in court?

· Would your current governance withstand scrutiny, or would it expose the gap?

About AI Governance for HR & CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. Our CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.