The Guillotine in Your Zoom Room: AI Chatbots & Discoverability

Why Your AI Notetaker Is the Most Dangerous Tool in Human Resources — and You Turned It On Yourself

60,000 people saw my last post about the U.S. v. Heppner ruling. Attorneys shared it. CHROs forwarded it to their boards. Senior HRBPs forwarded it to their attorneys. The comments continued for days.

But one comment stopped me cold. An HR executive wrote:

“I just realized my AI has been in every sensitive conversation I've had this year.”

That is not a comment. That is a confession. If you are honest with yourself, it might be yours, too.

My first article warned you that your AI conversations with tools like Claude, ChatGPT, and Copilot are not privileged — the Heppner ruling made that a matter of federal law. But the question the community returned with was sharper, more personal, and far more urgent:

“What about the AI bots that automatically record my meetings?”

That question deserves a direct answer. Here it is: your AI notetaker is not an assistant. It is a permanent record of your most vulnerable moments — and in most organizations, it has been running on auto-join since someone clicked ‘enable.’

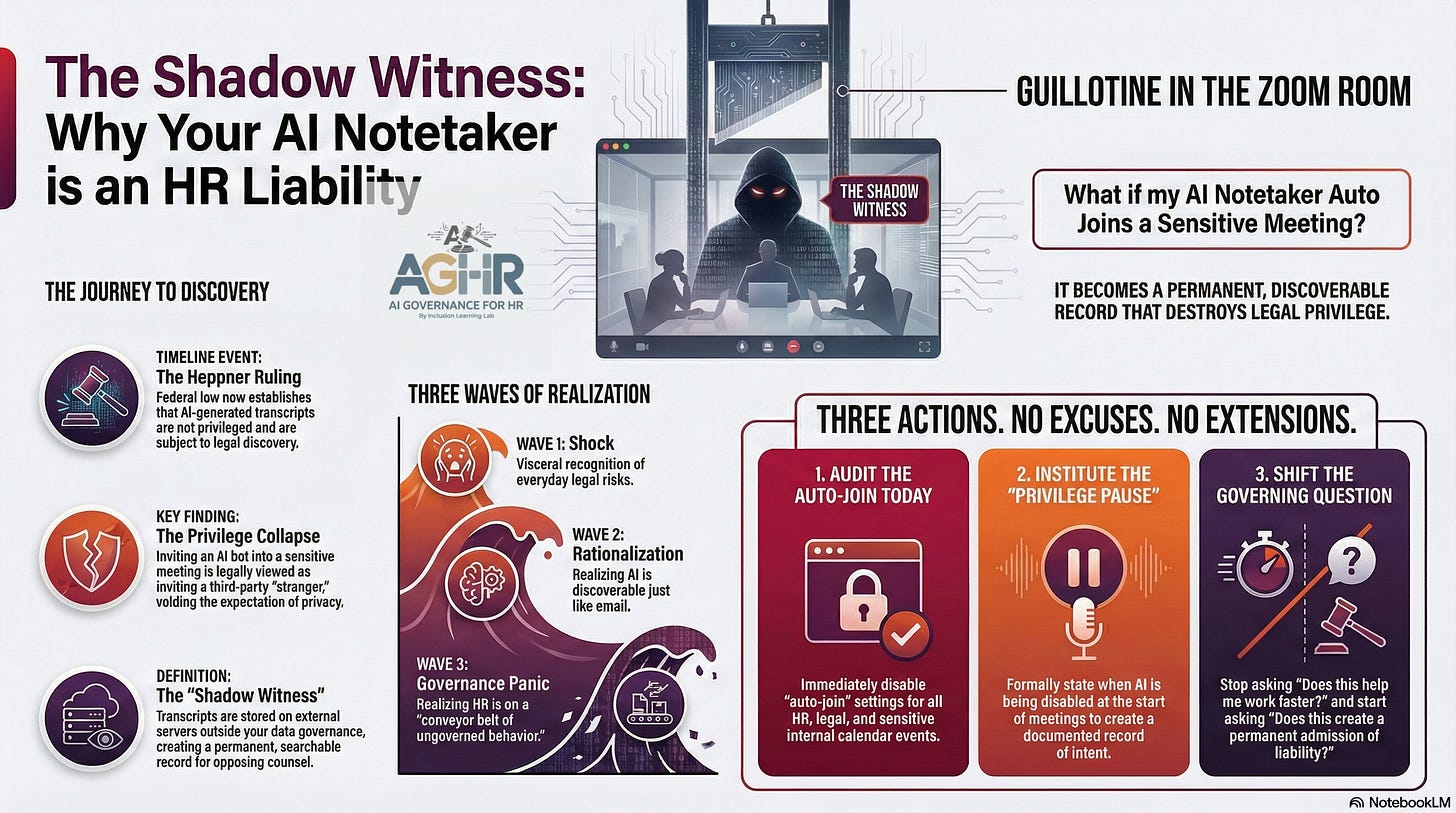

The Shadow Witness You Invited In: AI Chatbots and Governance

When an AI notetaker — Otter.ai, Fireflies, Zoom AI, or Microsoft Copilot — joins your meeting, three things happen simultaneously that your legal team almost certainly does not know about:

First, a non-privileged third-party software provider begins recording every salient detail of your conversation. Second, that data is processed on external servers that exist entirely outside your litigation hold and data governance framework. Third, a permanent, searchable transcript is generated — one that is legally treated the same as any other discoverable document your organization produces.

Attorney-client privilege depends on the communication being kept confidential. The moment the bot joins a strategy session with your counsel, you have invited a stranger into the tent. The privilege does not bend around the bot. It collapses.

One attorney in the comments on my first post called these tools ‘a guillotine you use to decapitate yourself.’ That line is not hyperbole. It precisely describes what happens when convenience is allowed to govern where legal protection is required.

Note: I am not an attorney. I am restating what several attorneys added in the comments on that post. If you would like to read Part 1: Read Post on LinkedIn

What 60,000 Voices Told Us: Viral LinkedIn Post

The viral response to Part 1 was not just engagement — it was a live diagnostic of how unprepared the professional world is for this moment. The reactions moved in three distinct waves.

The first wave was shock. ‘Yikes.’ The immediate, visceral recognition that something they were doing every day carried legal teeth they had never considered.

The second wave was rationalization. Legal professionals pushed back: ‘This isn’t new law. AI is just like email — it has always been discoverable.’ They are technically correct, and that correction makes it worse, not better. If this risk has existed from the beginning and organizations still have no policy, the governance gap is not a knowledge problem. It is a leadership failure.

The third wave was the one that mattered most: governance panic. This was the realization that HR has been operating on what one commenter called ‘a conveyor belt of ungoverned behavior’: drafting disciplinary write-ups, documenting internal investigations, and conducting sensitive employee relations (ER) sessions, all with an ungoverned bot quietly transcribing every word.

That third wave is where your liability lives.

Three Actions. No Excuses. No Timeline Extensions.

This is not a call for a task force or a policy committee. These three steps must happen before your next meeting.

1. Audit the Auto-Join — Today

Most AI notetakers are configured by default to automatically join every calendar event. Most leaders do not know this. HR must immediately mandate that every team member disable auto-join for any internal meeting, any HR investigation, and any session involving legal counsel. This is a 10-minute setting change with multi-year liability consequences.
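For teams that want to script the audit, here is a minimal sketch in Python. It assumes you can export your calendar events along with each event's notetaker setting. The `Meeting` fields, the keyword list, and the function names are all hypothetical illustrations, not any vendor's actual API or export format. The sketch only flags meetings by title keyword, which is narrower than the "disable it for every internal meeting" rule above, so treat it as a first pass and not as the policy itself.

```python
from dataclasses import dataclass

# Hypothetical keywords that mark a meeting as sensitive.
# Replace these with terms that match your own policy.
SENSITIVE_KEYWORDS = (
    "investigation",
    "disciplinary",
    "counsel",
    "legal",
    "employee relations",
)


@dataclass
class Meeting:
    """One row from an assumed calendar/notetaker settings export."""
    title: str
    organizer: str
    notetaker_auto_join: bool  # assumed field name, not a real vendor setting


def is_sensitive(meeting: Meeting) -> bool:
    """Return True if the meeting title contains any sensitive keyword."""
    title = meeting.title.lower()
    return any(keyword in title for keyword in SENSITIVE_KEYWORDS)


def audit_auto_join(meetings: list[Meeting]) -> list[Meeting]:
    """Return sensitive meetings that still have auto-join enabled."""
    return [m for m in meetings if m.notetaker_auto_join and is_sensitive(m)]


if __name__ == "__main__":
    sample = [
        Meeting("HR investigation: case review", "hrbp@example.com", True),
        Meeting("Weekly standup", "lead@example.com", True),
        Meeting("Strategy with outside counsel", "chro@example.com", False),
    ]
    for meeting in audit_auto_join(sample):
        print(f"Disable auto-join: {meeting.title} ({meeting.organizer})")
```

Title keywords will miss sensitive meetings with bland names, so a clean report from a script like this does not replace the manual check of each person's settings.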

2. Institute the Privilege Pause

Create a spoken, documented practice: whenever a meeting begins with an AI recording tool present, the meeting lead explicitly states, ‘I am disabling the AI assistant for this portion of the meeting to protect legal privilege,’ and then turns it off. That statement is not bureaucratic theater. It is the difference between a defensible position and an inadvertent waiver, and it creates a record of intent that a court can see.

3. Change the Governing Question

Your organization has been asking the wrong question about AI tools. The question has been: ‘Does this help me work faster?’ The question must now be: ‘Does this create a permanent, discoverable admission of liability?’ Policy must precede productivity. In the era of the Heppner ruling, convenience is the enemy of confidentiality.

The Reckoning Is Already Recorded

This is not an article about software settings.

It is about the moment organizations stop treating AI as an assistant and start treating it as what it legally is: a permanent witness to your most sensitive professional decisions.

Every disciplinary session held this year with an AI notetaker in the room. Every ER venting call. Every strategy discussion with outside counsel where the bot was on auto-join. Those conversations may already exist as transcripts on a third-party server: outside your control, outside your litigation hold, and within reach of discovery by anyone who decides to come after your organization.

The HR executive who wrote that comment was not alone. She was simply the first person in that thread willing to say out loud what the rest of you already knew.

What is your organization’s policy on AI notetakers in sensitive sessions? If you don’t have a clear answer within the next 30 seconds, you have your answer.

Govern the input before it becomes your testimony.

Are you trying to figure out how to lead AI Governance for your organization? Learn about our Intro to AI Governance Workshop

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.

Join our live CoLab: https://bit.ly/4rthpBP