HR Leaders: Using AI as Your Shadow Lawyer or Co-Counsel? STOP!

Your secret ChatGPT litigation strategy is now Exhibit A.

You thought AI made your lawsuit safer. A federal judge just made it discoverable.

Imagine this.

You’re staring down a lawsuit.

You don’t want to bother your lawyer yet, or you want to be “better prepared” before the next call. So you open Copilot, Claude, Gemini, or ChatGPT and type:

“Here are the facts of my case. Here’s what my lawyer told me. Draft a strategy memo and give me my best defenses.”

You go back and forth with the AI.

You refine arguments, test theories, and rewrite what your attorney said into “plain English.” You save 20–30 pages of beautifully structured legal strategy to your laptop. Then you email some of it to your lawyer, feeling very sophisticated and “future‑ready.”

Fast‑forward: the government executes a search warrant, or opposing counsel serves discovery. They seize your devices, your files, your AI history.

You argue: “That’s privileged. That’s my work with my lawyer.”

And the judge says: No, it isn’t.

Those AI‑generated documents are not protected by the attorney‑client privilege, are not covered by the work-product doctrine, and can be used as evidence against you.

That’s not a hypothetical. That is exactly what just happened in a federal criminal case: United States v. Heppner in the Southern District of New York.

The case that blew up “AI as your quiet co‑counsel”: United States v. Heppner

On February 10, 2026, Judge Jed S. Rakoff of the Southern District of New York issued a ruling from the bench in United States v. Heppner, No. 25‑cr‑503 (JSR).

The defendant, Bradley Heppner, is a financial services executive charged with securities and wire fraud. After being subpoenaed and hiring Quinn Emanuel, he did what far too many sophisticated people are quietly doing:

He went to Anthropic’s Claude and started building his own legal strategy.

He used the consumer version of Claude (not an enterprise, locked‑down instance).

He had Claude help him analyze his legal exposure and draft about 31 documents laying out facts, arguments, and defenses.

He saved those AI‑generated documents on his devices and later sent them to his lawyers.

When federal agents searched his devices, they found the Claude material. Defense counsel tried to protect it by logging the AI docs as:

“Artificial intelligence‑generated analysis conveying facts to counsel for the purpose of obtaining legal advice.”

They asserted attorney‑client privilege and the work‑product doctrine.

Judge Rakoff’s response was brutally clear.

From the bench, he said he was:

“not seeing remotely any basis for any claim of attorney‑client privilege.”

He held that the Claude‑generated materials were neither privileged nor protected work product. The government can use them.

This is, as Debevoise notes, likely the first federal decision squarely holding that using a consumer generative‑AI tool with potentially privileged information can destroy privilege.

Why the judge said: your AI conversations are not privileged

The Heppner ruling doesn’t invent new law. It applies long‑standing privilege rules to a new behavior: treating AI as your shadow lawyer.

1. Claude is not your attorney

Attorney‑client privilege protects confidential communications between a client and a licensed lawyer for the purpose of obtaining legal advice.

Claude is not licensed.

Anthropic’s own documentation and terms make this clear: Claude does not provide legal services, and using the tool does not create an attorney‑client relationship. Judge Rakoff treated Heppner’s interaction with Claude as a communication with a third party, not with counsel.

Once you recognize the AI as a third party, the next domino falls quickly.

2. You can’t claim confidentiality when the terms say “this isn’t confidential.”

Privilege is lost when you disclose your communication to a non‑privileged third party. That’s privilege 101.

Here, the government cited Anthropic’s terms and privacy policy:

Prompts and outputs can be logged.

Debevoise’s write‑up notes that Judge Rakoff viewed this as fatal: Heppner disclosed his would‑be “strategy” to a service whose terms state that the information is not confidential.

In other words, if the tool’s terms state that “we may use this data, share it, and it’s not confidential,” you cannot seriously claim you had a reasonable expectation of confidentiality.

3. You cannot “launder” privilege by emailing AI docs to your lawyer later

Heppner’s team advanced a nuanced argument: these AI‑generated documents were created to help him organize facts and convey them to counsel, and thus should be protected.

Rakoff returned to another basic rule: pre‑existing, unprivileged documents do not become privileged simply because you send them to your lawyer.

Timeline matters:

Heppner created the documents

By the time those documents reached counsel, they had already been:

Created in partnership with a non‑lawyer third party, and

You can’t retroactively “cloak” those documents in privilege.

4. The work‑product doctrine didn’t save him either

The defense also invoked the work‑product doctrine, which protects materials prepared in anticipation of litigation, particularly when they reflect counsel’s mental impressions or strategy.

Judge Rakoff rejected that, too. Why?

The Claude documents reflected

As Debevoise puts it, this ruling warns that consumer use of generative AI in legal disputes is “fraught with privilege risk.”

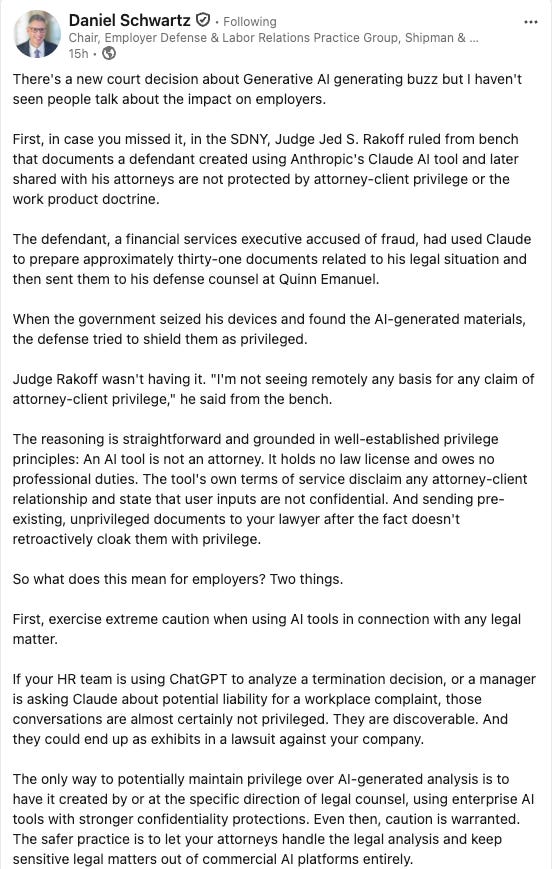

Dan Schwartz’s message to employers: your AI conversations are not privileged

Employment lawyer Dan Schwartz took one look at Heppner and distilled it into a message every employer needs to hear: “Your AI conversations are not privileged.”

In his Connecticut Employment Law Blog article, “A Court Just Confirmed What Employers Need to Hear: Your AI Conversations Are Not Privileged,” he directly connects the dots to HR.

He lays it out plainly:

“If your HR team is using ChatGPT to analyze a termination decision, or a manager is asking Claude about potential liability for a workplace complaint, those conversations are almost certainly not privileged. They are discoverable and could end up as exhibits in a lawsuit against your company.”

He also flips the lens to the plaintiffs:

“Employees are increasingly using AI tools to research their legal rights, draft complaints, and strategize about claims. Those conversations are discoverable, and Judge Rakoff’s ruling makes clear they are not privileged. The prompts an employee enters into ChatGPT or Claude often reveal more than the polished complaint that lands on your desk.”

Heppner gives that argument real teeth. The court didn’t just hint at risk; it opened the door and invited those AI histories into the evidentiary record.

What this means for CHROs, HR, and in‑house counsel

From an AI governance perspective, here’s the uncomfortable truth:

1. Most organizations have leaders and HR professionals quietly doing some version of what Heppner did—just on the civil side.

They ask Copilot, Claude, or ChatGPT things like:

“Is this age discrimination if I terminate this person?”

Under the logic of United States v. Heppner, that content is

Almost certainly

If the AI tool’s public terms state that inputs can be logged, used for training, or shared, assume no confidentiality, no privilege, and no safety net.

That means:

No fact‑specific termination or investigation questions.

This isn’t anti‑AI; it’s recognizing that tools designed to train on the world’s data aren’t appropriate containers for your most sensitive legal risks.
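For teams that want to build this rule into an intake checklist or internal tooling, the logic can be sketched in a few lines of Python. This is an illustrative sketch only. It is not legal advice and is not drawn from the Heppner ruling itself. The `ToolTerms` fields and both function names are hypothetical, and the flags are assumed to come from a person reading the tool's current public terms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolTerms:
    """Hypothetical summary of what an AI tool's public terms allow."""
    logs_inputs: bool        # prompts and outputs can be logged
    trains_on_inputs: bool   # inputs can be used for model training
    shares_inputs: bool      # inputs can be shared with third parties


def treat_as_unprotected(terms: ToolTerms) -> bool:
    """Assume no confidentiality if any one of the three conditions holds."""
    return terms.logs_inputs or terms.trains_on_inputs or terms.shares_inputs


def route_prompt(terms: ToolTerms, fact_specific_legal_question: bool) -> str:
    """Suggest where a prompt should go; the labels are illustrative only."""
    if fact_specific_legal_question and treat_as_unprotected(terms):
        # Fact-specific termination or investigation questions stay out.
        return "call counsel"
    if fact_specific_legal_question:
        # Clean terms do not create privilege; counsel still decides.
        return "ask counsel before using tool"
    return "general use"
```

Clearing this check says nothing about whether privilege actually attaches. It only flags the cases where the tool's own terms already rule out a reasonable expectation of confidentiality.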

2. Keep legal analysis with licensed counsel, not AI

The safer approach is straightforward and, frankly, old‑school:

If it is truly a legal question, call your lawyer.

Schwartz’s warning is clear: “The safer practice is to let your attorneys handle the legal analysis and keep sensitive legal matters out of commercial AI platforms entirely.”

3. Use enterprise AI under counsel’s direction if you must, but don’t assume magic protection

There is a meaningful difference between:

Public, consumer AI tools with training and data‑sharing baked in, and

Debevoise suggests that where:

The AI is an enterprise tool configured for confidentiality.

There may be a stronger argument that outputs fall within the work‑product doctrine.

But Heppner should dispel the notion that “AI + litigation = automatically privileged.” It doesn’t.

4. Build AI into your discovery strategy—on both sides

Heppner and Schwartz both point to a powerful litigation reality: generative‑AI histories constitute a new, rich class of discoverable evidence.

For employers and defense counsel:

Update litigation hold notices to include AI prompts, responses, and exports related to the dispute.

AI histories discussing the claim,

AI‑drafted complaints or demand letters,

AI‑generated analysis or strategy documents.

For HR governance, this means building AI awareness into how you think about records, e‑discovery, and preservation—not just “innovation.”

5. Train leaders with a single blunt message: “No expectation of privacy. No privilege.”

Every AI training session for executives, HR, and managers should now include a clear, direct explanation of United States v. Heppner:

A defendant used Claude to draft approximately 31 documents about his case.

Claude is not a lawyer.

The tool’s terms said inputs weren’t confidential.

You can’t make non‑confidential AI documents privileged by emailing them to your lawyer afterward.

Then pair that with Dan Schwartz’s headline point:

“Your AI conversations are not privileged. They are discoverable. And they could end up as exhibits in a lawsuit against your company.”

If a leader wouldn’t put the content in an email to opposing counsel, they should not put it into a consumer AI tool.

About AI Governance for HR CoLab Workspace

Margaret Spence, author of When AI Breaks the Law, helps HR and talent leaders operationalize AI governance across hiring, performance, and promotion. This CoLab workspace delivers daily frameworks to bridge the gap between compliance documentation and ethical AI principles—the gap where the $365M Mobley lawsuit occurred. You’ll build governance infrastructure that reduces legal, reputational, and EU AI Act compliance exposure before AI-driven talent decisions scale bias into discrimination.

New Book Loading: When AI Breaks the Law: AI Governance for Talent Leaders (Release Date April 20, 2026)