Compliance Training Is Not AI Governance

And the Difference Is Costing Talent Development (TD) Leaders More Than They Know

Someone told you AI compliance training means AI governance.

Maybe it was a legal team that handed you a policy and told you to turn it into a module. Maybe it was a CHRO who needed a box checked before a tool went live. Maybe it was a vendor who bundled a training kit with the software license and called it “governance-ready.”

You built the training, deployed it, and checked the box.

Here is the myth buster: you did not build governance. You built compliance training. Those two are not the same.

The Scenario Most TD Leaders Know Intimately

Picture this. Procurement selects an AI-powered learning recommendation engine. IT implements it. Six weeks before go-live, the TD team receives the project handoff and is tasked with building the training program.

Nobody asks whether the tool has been audited. Nobody defines what responsible use looks like for this specific system within this organization. Nobody identifies who is accountable when the tool produces a recommendation that a manager cannot explain or defend.

The TD leader builds the training anyway. That is the job. The timeline is set, the go-live date is on the calendar, and someone has to make the humans ready to use the thing.

And when the outcomes are dismal, or when the tool recommends development opportunities that consistently favor certain employees over others, and nobody can explain why, the training is questioned first.

The TD leader owns the failure even though they never owned the decision.

This is not hypothetical. This is the current operating reality for talent development professionals in organizations deploying AI at scale.

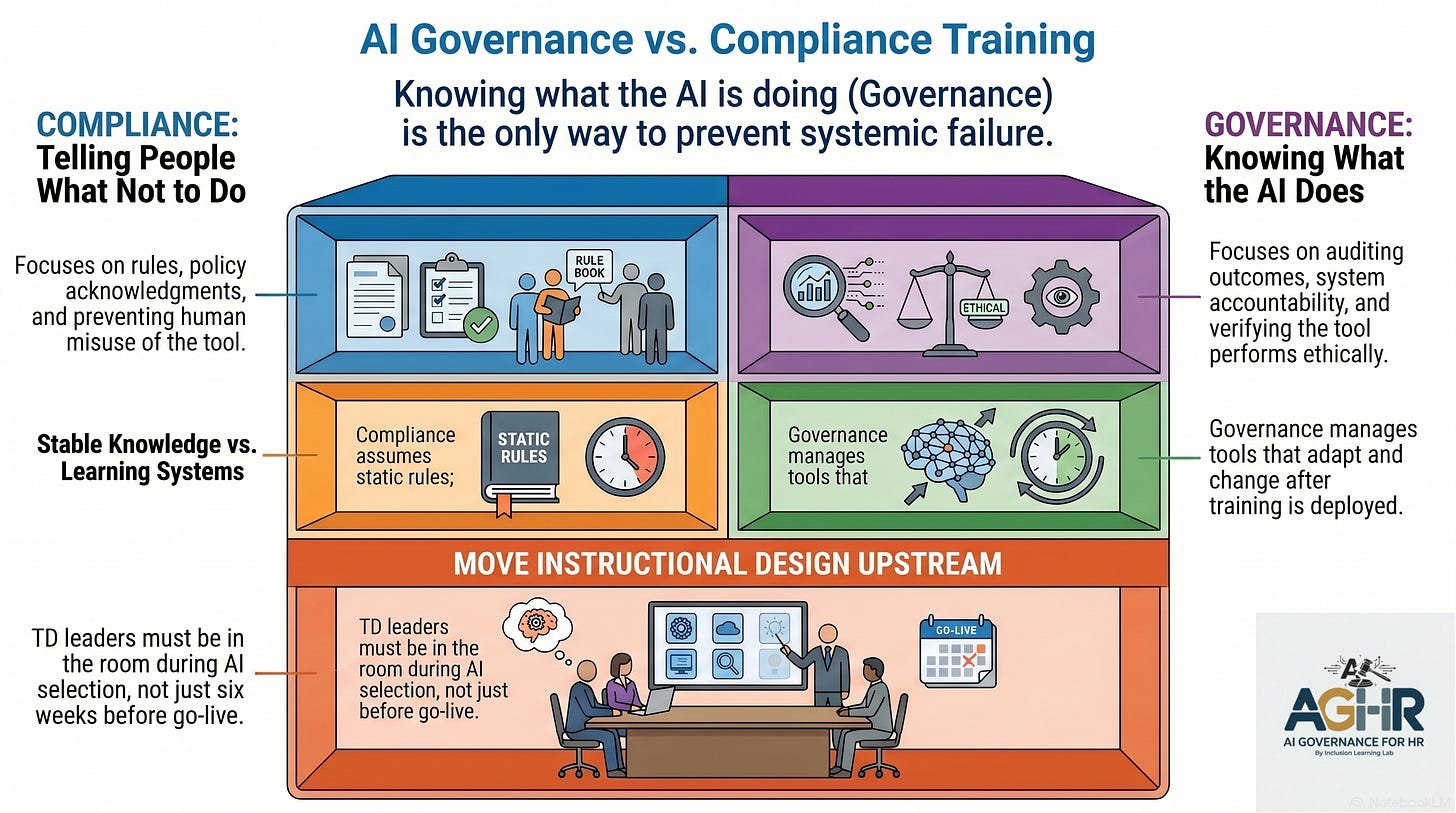

What Compliance Training Actually Does

A 20-minute module on responsible AI use teaches people what not to do.

Don’t put proprietary information into the tool. Don’t use AI to make final decisions without human review. Don’t upload personal employee data without authorization.

These are rules. Rules are necessary. Rules are not governance.

A policy acknowledgment confirms that standards exist and that individuals have read them. An ethical use statement communicates the organization’s values. A handbook entry outlines the consequences of misuse.

None of those things tells you what your AI is actually doing in your systems right now.

Governance is not about instructing people. Governance is about knowing. It is about having a knowledge base: knowing what your AI is doing to your people, inside your processes, and within your organization. Knowing whether the tool performs as the vendor described. Knowing whether the outcomes it produces are ethical, equitable, and aligned with what you actually deployed it to do.

You cannot know those things from a compliance module. You can only know them through a governance framework built before the tool goes live and maintained while the tool operates. That framework is then used both to train end users on compliance and to create the guardrails that stop the AI from coloring outside the lines.

The Instructional Design Problem Nobody Named

TD leaders are grappling with an issue the field has not yet fully acknowledged.

The instructional design model most practitioners were trained in assumes stable knowledge. You identify the gap. You design the content. You assess the outcome. You update on a cycle that matches the pace of the work.

AI breaks that model.

A tool that learns from its own decisions adapts between your training deployments. The manager you trained six months ago on your performance support AI is now interacting with a system that has shifted in ways your training did not anticipate. The upskilling curriculum you built for your leadership development platform was accurate at the time, but it may no longer reflect what the tool is doing today.

Nobody has provided TD leaders with a governance framework for this problem. Nobody has defined what AI competency looks like for the specific tools in a particular organization’s stack. Nobody has answered the question underlying every AI training request: how do you build human capability for a system that you do not fully understand, that was selected without your input, and that is changing faster than your curriculum can keep up?

Compliance training cannot answer that question. It was never intended to do so.

What AI Governance Actually Requires

Governance requires knowing who owns each AI tool when something goes wrong. Not a department. A named person with the authority and competence to act.

Governance requires knowing whether the tool has been evaluated, audited, and scoped before it influences any talent decision. Not a vendor certification. An organizational verification.

Governance requires a training architecture built in sequence — executives first, then managers, then employees — because training people to use a tool responsibly on top of a governance foundation that does not exist is not upskilling. It is digital hypocrisy.

And governance requires TD leaders to be in the room before the tool is selected, not to be handed a timeline six weeks before go-live.

The learning layer is not a downstream function of AI implementation. It is governance infrastructure. The people who understand how learning shapes behavior at scale, how organizations actually adopt new tools versus how leadership assumes they will, and how to build the organizational capability that underpins a policy — those people belong in the conversation before the contract is signed.

AI Compliance Training As Governance: The Myth Is Expensive

Organizations that treat compliance training as governance are not protected. They are exposed, and they are operating under a false sense of security, which is more dangerous than being aware of the gap.

TD leaders who accept compliance training requests as governance solutions are not serving their organizations. They are assuming accountability for decisions they never made, building on foundations they never assessed, and owning outcomes they were never positioned to prevent.

The myth is that compliance training is governance.

The truth is that governance means knowing what your AI is doing. Compliance training means telling people what not to do.

One of these things is not like the other.

Margaret Spence is the founder of the Inclusion Learning Lab and the author of When AI Breaks the Law: AI Governance for Talent Leaders, set to release in June 2026. She is speaking at ATD 2026 on May 18th: When AI Fails, Organizations Pay. Download the session pre-work from the ATD conference platform.

Start with Module One Free:

By the end of this workshop, you will be able to answer the questions every General Counsel is now asking HR:

Can you defend how your AI systems made that decision?

Do your managers understand that every AI-generated summary of a sensitive conversation is now discoverable in court?

Would your current governance withstand scrutiny — or expose the gap?