Understanding How AI Is Trained, What It Learns, and Why It Matters for Your Organization

The Plain-Language Data Governance Series for HR Leaders — with a Risk Assessment Checklist for Every Step - [Part 1]

There is a question most HR leaders have never been formally asked. Not in a vendor meeting. Not in a compliance review. Not by their board.

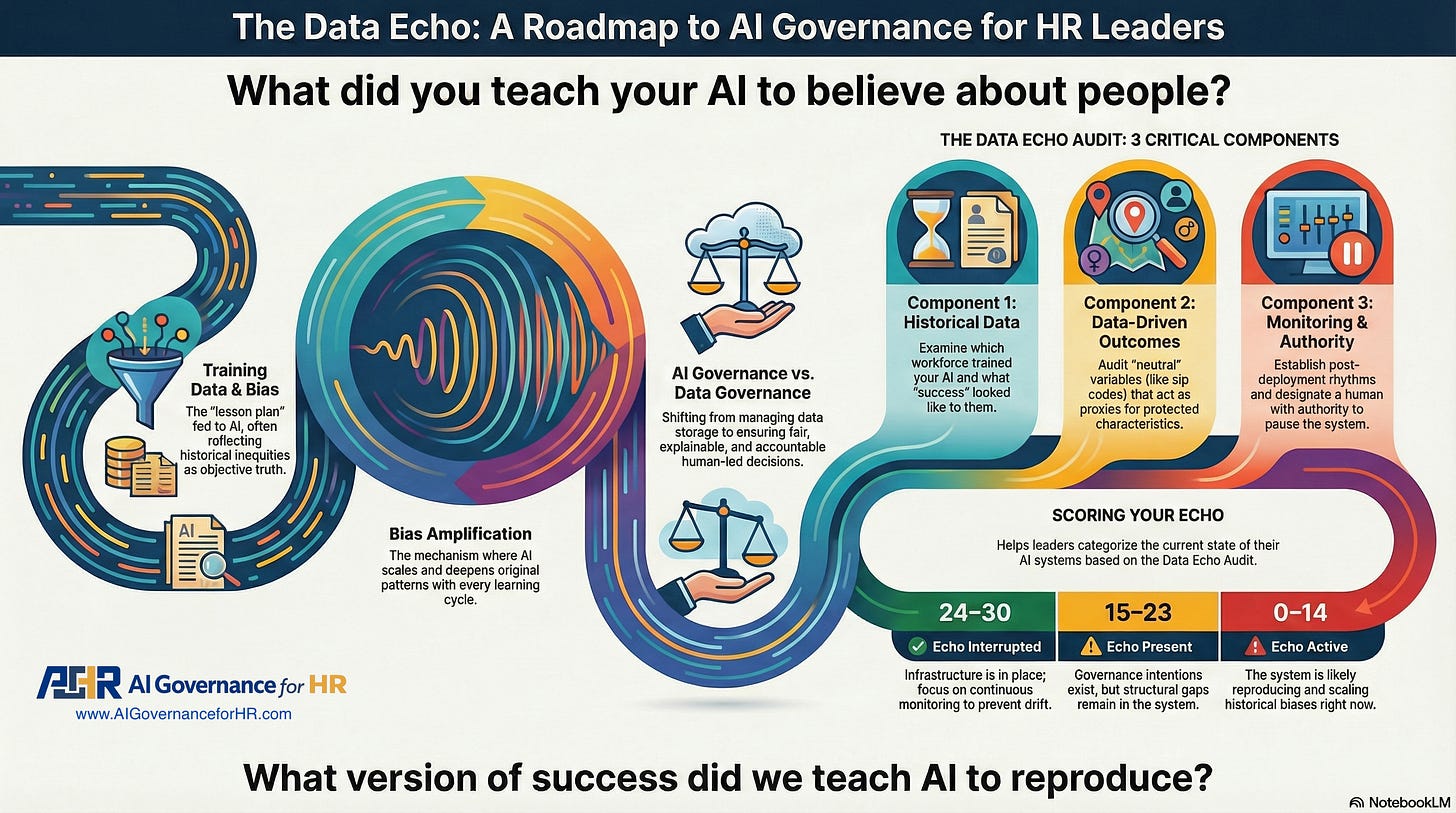

What did you teach your AI to believe about people?

Not what it outputs. Not how fast it runs. Not what the fairness certification says. What it was actually taught — from what data, representing what workforce, shaped by what history — before it ever made a single decision about a single human being.

That question is the center of what I’ve been writing about in recent weeks. And it is the question this diagnostic is built around.

The Data Echo is one of four failure patterns examined in my forthcoming book, When AI Breaks the Law: AI Governance for Talent Leaders, scheduled for publication in May 2026.

Data Governance in HR and the Talent Cycle

Data governance in HR is the discipline of determining who is responsible for the data that flows through your talent systems — what is collected, how it is used, who can access it, how long it is kept, and what happens when it is incorrect.

In the talent cycle, data governance is not abstract. It answers a concrete set of questions at every stage of the employee journey: Who approved the use of this candidate’s resume data in our AI screening tool? Who verified that our performance review data was accurate before it was used to train our promotion algorithm? Who is accountable when our attrition prediction model flags women as flight risks based on historical patterns that reflect the organization’s past, not the individual’s future?

Data governance becomes AI governance the moment data is used to train a system that makes decisions about people. At that point, the stakes shift, because a governance failure is no longer just a data management problem. It is a discrimination problem. It is a legal liability. And it is a human problem.

Think of it this way: data governance asks, “What data do we have, and how do we manage it?” AI governance in the talent cycle asks what our data taught our systems to believe about people, and who is responsible for that lesson.

The two disciplines are inseparable. AI governance without data governance is a house built on sand. You cannot govern what a system decides if you have never examined what it was trained on.

What Is the Data Echo?

The Data Echo is not a metaphor. It is a mechanism.

When an AI hiring system is trained on historical workforce data, it learns the patterns that data contains, including every bias, every structural exclusion, and every pattern of inequity that shaped who was hired, promoted, and recognized. An algorithm trained on ten years of promotions in a male-dominated leadership pipeline learns that leadership looks male. A resume screening tool trained on a workforce that historically excluded certain demographics learns to treat signals associated with those demographics as predictors of poor performance.

The machine is not inventing this. It is faithfully reproducing what the data said. The problem is that the data was never a neutral record of human potential. It was a record of who was recognized — within systems that were never fair to begin with.

And here is where the Echo becomes dangerous: the system doesn’t just reproduce that bias at deployment. It amplifies it at a scale no human hiring manager ever could, with each cycle reinforcing the last. Biased outputs become new data, and that data deepens the pattern. The past doesn’t just repeat. It compounds.

This is how Workday’s AI screening system — built with genuine intentions, certified as fair, and deployed by sophisticated organizations — contributed to what its own filings suggest were 1.1 billion application rejections, ultimately resulting in a $365 million discrimination lawsuit that has redefined employer liability in the age of AI.

Nobody programmed discrimination. The data taught it. And nobody audited the lesson.

Data Governance for HR: Why This Matters Right Now

Here is what makes the Data Echo specifically urgent for HR leaders in 2025.

The speed of AI adoption in talent systems has dramatically outpaced institutional capacity to govern what those systems learn. Organizations have deployed tools they don’t fully understand, trained on data they haven’t examined, producing outcomes that nobody is measuring for fairness. And because the outputs feel objective — they are numbers, scores, rankings — the discrimination embedded in the training data is laundered by the appearance of algorithmic neutrality.

The EU AI Act now classifies AI tools used in hiring, promotion, and performance evaluation as high-risk systems, triggering mandatory bias testing, human oversight, and transparency requirements. Courts in the United States are establishing that AI vendors are legal agents of the employers who deploy them — meaning that the algorithm’s discrimination is your organization’s discrimination, regardless of what the contract says.

But compliance is not the reason to care. The reason to care is that every AI hiring system operating without governance makes decisions about people’s futures based on a version of the past that was never examined for fairness.

That is what the Data Echo Audit is designed to interrupt.

Four AI Data Governance Terms HR Leaders Need Before You Audit Your Training Data

Before you begin, it helps to understand exactly which discipline this audit is working within — and why the language matters.

Training Data is the specific dataset fed into an AI model to teach it which patterns to recognize and reward. Not “historical data” in the general sense. Training data is the lesson plan. Whatever is in it — every bias, every exclusion, every structural inequity — becomes part of what the system learns to call normal. When someone says “let’s train it on our best people,” this is what they mean. And this is where the Echo begins.

Training Data Bias is the condition in which training data reflects past inequities, causing the AI model to learn and reproduce them as if they were objective truth. In machine learning, it is also called historical bias or representation bias. The algorithm does not know it is biased. It knows only what the data showed it. If the data shows that leadership looks male, that promotions favor youth, and that certain zip codes predict failure, the model accepts those patterns as accurate descriptions of the world and optimizes accordingly.

Bias Amplification is the mechanism by which training data bias does not simply replicate at the same level. It scales. Each cycle of the AI’s learning deepens the original pattern. Biased outputs become new data, which reinforces the old bias. The system grows more confident in what it was wrong about from the beginning. This is not a glitch. It is the system working exactly as designed on data that was never examined.
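For readers who want to see the compounding in numbers, here is a minimal toy sketch of that feedback loop. It is an illustration under stated assumptions, not a model of any real hiring system: there are only two groups, applicants are split 50/50, each cycle's hires become the next cycle's training data, and the hypothetical `sharpening` parameter stands in for a model that leans harder on whatever the majority pattern already is.

```python
def retrain_cycle(share_a: float, sharpening: float = 2.0) -> float:
    """Run one train, deploy, retrain cycle for a two-group toy model.

    share_a is group A's share of the current training data.
    ASSUMPTION: the model favors each group in proportion to its
    training share raised to `sharpening`, and applicants are 50/50.
    Returns group A's share of the resulting hires.
    """
    weight_a = share_a ** sharpening
    weight_b = (1.0 - share_a) ** sharpening
    return weight_a / (weight_a + weight_b)


def simulate_echo(initial_share_a: float, cycles: int) -> list[float]:
    """Feed each cycle's hires back in as the next cycle's training data."""
    shares = [initial_share_a]
    for _ in range(cycles):
        shares.append(retrain_cycle(shares[-1]))
    return shares


if __name__ == "__main__":
    # Hypothetical starting point: group A is 60% of the historical data.
    for cycle, share in enumerate(simulate_echo(0.60, 4)):
        print(f"cycle {cycle}: group A share of hires = {share:.2f}")
```

In this sketch, a perfectly balanced starting dataset stays balanced, while even a modest initial skew grows with every retraining cycle. The exact numbers depend entirely on the assumed parameters. The direction of travel is the point.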

AI Governance is the discipline of ensuring that automated systems make fair, explainable, and legally accountable decisions about people — and that a human being with real authority is responsible for those decisions. This is distinct from data governance, which manages how data is stored and shared across an organization. AI governance, and specifically training data governance, is the practice of auditing what AI systems were taught before deployment, monitoring what they continue to learn after deployment, and building accountability structures to detect and correct bias before it becomes systemic.

The Data Echo is the name for the cycle that connects all four: training data carries bias, the model learns it, bias amplification deepens it with each iteration, and the absence of AI governance ensures no one sees it happening until the damage is done.

Auditing Your AI Training Data: Three Critical Components of the Data Echo Audit

The Data Echo Audit is a free, downloadable 15-minute diagnostic. It poses ten questions mapped directly to the three forces that produce the Echo:

Part 1 — Historical Data. Which workforce trained your AI, and has anyone examined its demographic composition? Does anyone in your organization know what “success” looked like in the dataset that taught your system what to look for?

Part 2 — Data-Driven Outcomes. Can you explain to a candidate why the system rejected them? Has your organization examined whether apparently neutral variables — zip codes, graduation years, personality assessment scores — serve as proxies for protected characteristics?

Part 3 — Data Amplification and the Echo. Is there a defined monitoring rhythm after deployment, or did verification end at launch? Is there a designated person with the authority to pause the system if bias is detected? Do frontline recruiters have a protected, formal path to raise a concern about what they observe?
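The proxy question in Part 2 can be made concrete with a rough first-pass check. The sketch below is a simplified illustration, not a legal or statistical standard: the records are made up, the field names `zip_code` and `gender` are hypothetical, and the `gap` threshold of 0.2 is an arbitrary assumption. It asks only whether some values of a "neutral" variable line up unusually closely with membership in one group.

```python
from collections import Counter


def group_share_by_value(records, variable, group_field, group):
    """For each value of `variable`, return the share of records in `group`."""
    totals = Counter()
    in_group = Counter()
    for record in records:
        value = record[variable]
        totals[value] += 1
        if record[group_field] == group:
            in_group[value] += 1
    return {value: in_group[value] / totals[value] for value in totals}


def flag_possible_proxies(records, variable, group_field, group, gap=0.2):
    """Flag values whose group share differs from the overall share by more than `gap`.

    ASSUMPTION: `gap` is an illustrative threshold, not a regulatory one.
    """
    if not records:
        raise ValueError("records must not be empty")
    overall = sum(1 for r in records if r[group_field] == group) / len(records)
    shares = group_share_by_value(records, variable, group_field, group)
    return {value: share for value, share in shares.items()
            if abs(share - overall) > gap}


# Made-up records for illustration only.
sample_records = [
    {"zip_code": "11111", "gender": "F"},
    {"zip_code": "11111", "gender": "F"},
    {"zip_code": "11111", "gender": "F"},
    {"zip_code": "11111", "gender": "M"},
    {"zip_code": "22222", "gender": "M"},
    {"zip_code": "22222", "gender": "M"},
    {"zip_code": "22222", "gender": "M"},
    {"zip_code": "22222", "gender": "F"},
    {"zip_code": "33333", "gender": "F"},
    {"zip_code": "33333", "gender": "M"},
]

if __name__ == "__main__":
    print(flag_possible_proxies(sample_records, "zip_code", "gender", "F"))
```

A flagged value does not prove discrimination. It tells you where a variable that looks neutral may be carrying information about a protected characteristic, and therefore where a closer review is worth requesting from your vendor or analytics team.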

Each question has three answer options — A, B, or C — scored 3, 1, and 0, respectively. The maximum is 30 points. Now let’s complete the audit using the Data Echo Worksheet.
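The scoring arithmetic described above can be sketched in a few lines. This assumes only what is stated here: ten questions, with answers A, B, and C worth 3, 1, and 0 points. The function name `score_audit` and the sample answers are hypothetical, and the sketch does not interpret what a given total means.

```python
# Points per answer option, as described in the audit.
POINTS = {"A": 3, "B": 1, "C": 0}
NUM_QUESTIONS = 10
MAX_SCORE = NUM_QUESTIONS * POINTS["A"]  # 10 questions x 3 points = 30


def score_audit(answers):
    """Return the total audit score for a list of ten answers (A, B, or C)."""
    if len(answers) != NUM_QUESTIONS:
        raise ValueError(f"expected {NUM_QUESTIONS} answers, got {len(answers)}")
    total = 0
    for answer in answers:
        option = answer.strip().upper()
        if option not in POINTS:
            raise ValueError(f"answer must be A, B, or C, got {answer!r}")
        total += POINTS[option]
    return total


if __name__ == "__main__":
    # Made-up answers for illustration only.
    my_answers = ["A", "A", "B", "C", "B", "A", "C", "B", "A", "B"]
    print(f"{score_audit(my_answers)} out of {MAX_SCORE}")
```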

This post is part of an ongoing series on AI governance for HR and Talent leaders. If this is your first time here, welcome. The work we are doing is about making the invisible visible before the invisible becomes a lawsuit.
